Final Project Requirements

The final project represents the integration of all skills developed during Fab Academy. It must demonstrate the design, fabrication, programming, and integration of a complete embedded system.

Design & Development

- Define project purpose and functionality

- Develop original system design

- Apply 2D and 3D modeling

- Design custom electronics (PCB)

Fabrication

- Use additive and subtractive processes

- Fabricate structural and electronic components

- Assemble and package the system

System Integration

- Integrate hardware and software

- Implement microcontroller programming

- Include input and output devices

- Enable communication between subsystems

Documentation

- Explain design decisions and processes

- Include BOM (Bill of Materials)

- Provide design files and code

- Document results and evaluation

Deliverables

- Final project webpage

- Presentation slide (1920×1080)

- 1-minute video demonstration

- Complete documentation and source files

Project Development Status

Project idea and system concept defined.

Architecture and subsystems under development.

Custom PCB and hardware design pending.

Mechanical parts and enclosure not started.

Control logic and interface pending.

System integration and testing pending.

System Architecture

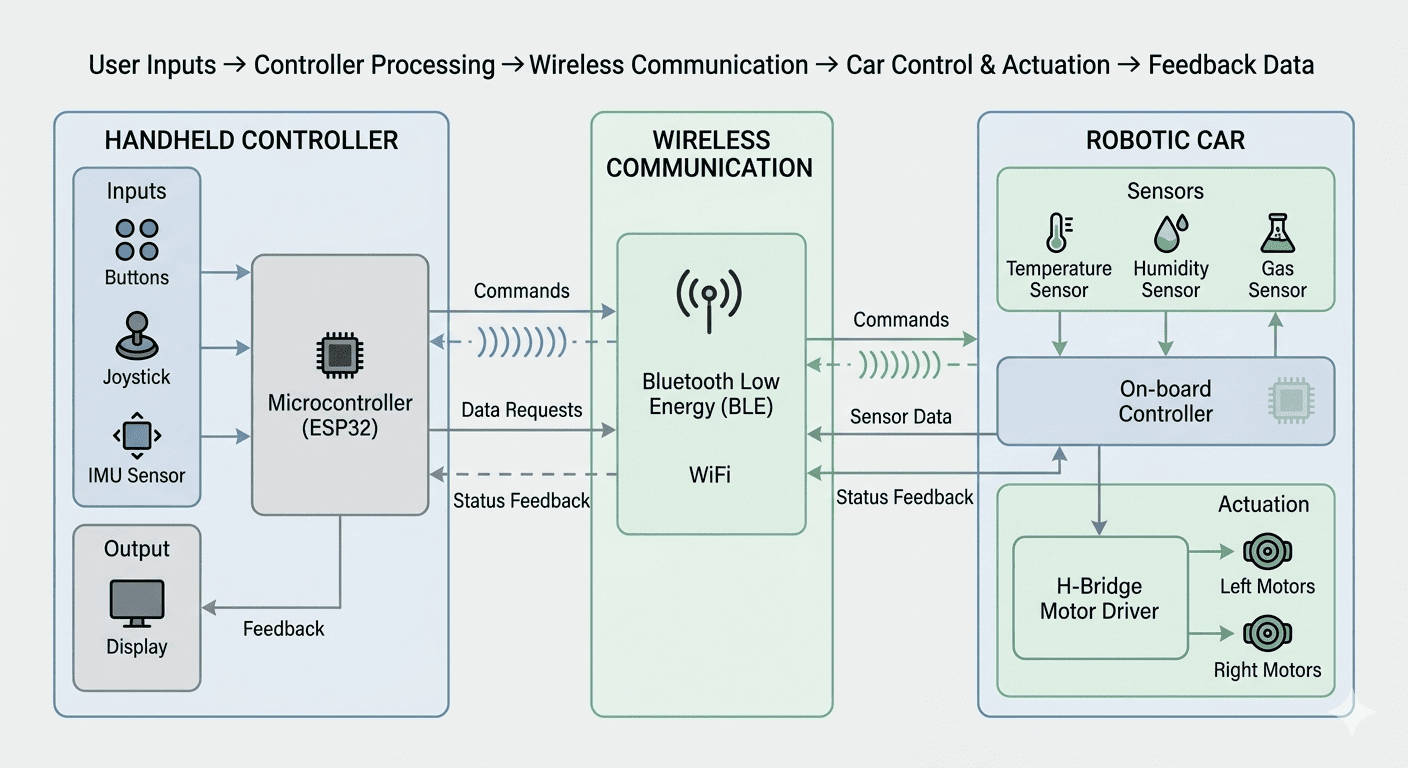

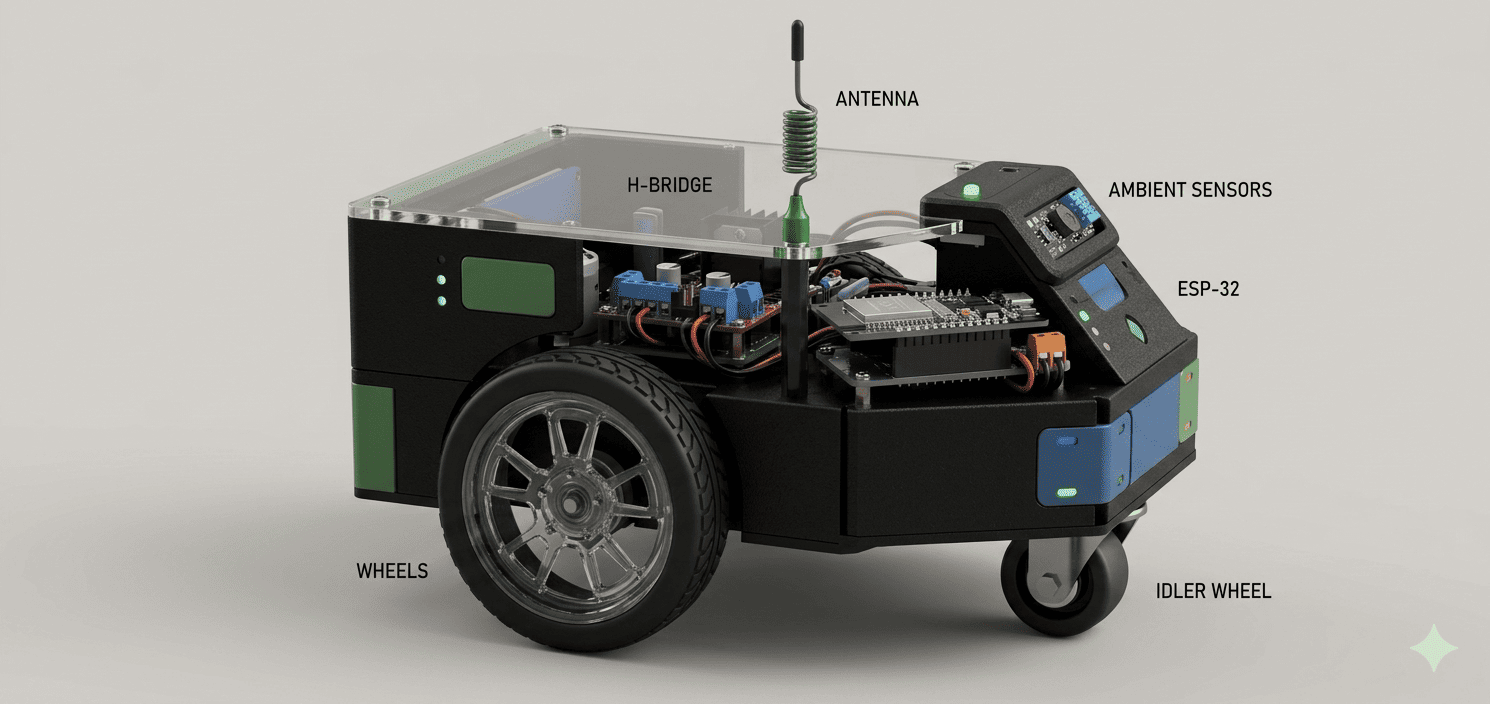

The GameLab Controller is designed as an interactive embedded system composed of two main subsystems: a handheld controller and a robotic car. These subsystems communicate wirelessly and operate together to create a real-time interactive learning platform.

What does it do?

GameLab Controller is an interactive cyber-physical system that connects user input, embedded processing, and real-world actuation. It allows users to control a robotic platform in real time through a custom-designed handheld controller.

The controller captures multiple input modalities, including joystick movement, button interactions, and motion data from an IMU sensor. These inputs are processed locally and transmitted wirelessly (via BLE/WiFi) to the robotic car.
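To make "processed locally and transmitted wirelessly" concrete, here is a minimal sketch of how the controller could pack joystick, button, and IMU values into a small fixed-size frame before handing it to the radio. The field layout, the `0xA5` start byte, and the XOR checksum are all illustrative assumptions. They are not the project's finalized protocol, and the BLE/WiFi transport itself is not shown.

```cpp
#include <array>
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <optional>

// Hypothetical controller-to-car message. Field choice and ranges are
// illustrative assumptions, not a finalized protocol.
struct ControlPacket {
    int8_t joyX;      // joystick X, -100..100 (% of full deflection)
    int8_t joyY;      // joystick Y, -100..100
    uint8_t buttons;  // bit i set = push button i pressed
    int8_t pitchDeg;  // IMU pitch in degrees, -90..90
    int8_t rollDeg;   // IMU roll in degrees, -90..90
};

constexpr uint8_t kStartByte = 0xA5;  // hypothetical frame marker
constexpr std::size_t kPayloadSize = 5;
constexpr std::size_t kFrameSize = kPayloadSize + 2;  // start byte + payload + checksum

using Frame = std::array<uint8_t, kFrameSize>;

// XOR of all payload bytes: cheap to compute, catches any single-bit error.
uint8_t xorChecksum(const uint8_t* data, std::size_t len) {
    uint8_t sum = 0;
    for (std::size_t i = 0; i < len; ++i) sum ^= data[i];
    return sum;
}

// Serializes field by field so struct padding and endianness never reach the radio.
Frame encodeControl(const ControlPacket& p) {
    Frame f{};
    f[0] = kStartByte;
    f[1] = static_cast<uint8_t>(p.joyX);
    f[2] = static_cast<uint8_t>(p.joyY);
    f[3] = p.buttons;
    f[4] = static_cast<uint8_t>(p.pitchDeg);
    f[5] = static_cast<uint8_t>(p.rollDeg);
    f[6] = xorChecksum(&f[1], kPayloadSize);
    return f;
}

// Returns std::nullopt for a wrong start byte or a checksum mismatch,
// so the car can simply ignore a corrupted frame.
std::optional<ControlPacket> decodeControl(const Frame& f) {
    if (f[0] != kStartByte) return std::nullopt;
    if (xorChecksum(&f[1], kPayloadSize) != f[6]) return std::nullopt;
    ControlPacket p{};
    p.joyX = static_cast<int8_t>(f[1]);
    p.joyY = static_cast<int8_t>(f[2]);
    p.buttons = f[3];
    p.pitchDeg = static_cast<int8_t>(f[4]);
    p.rollDeg = static_cast<int8_t>(f[5]);
    return p;
}
```

A 7-byte frame keeps each update small, which suits frequent control updates over either BLE or WiFi. If corrupted frames turn out to be a problem in practice, a CRC-8 would catch more error patterns than a plain XOR.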

The robotic car interprets these signals to perform physical actions such as movement, navigation, and response behaviors. At the same time, it collects environmental data (temperature, humidity, gas levels), which can be transmitted back to the controller, enabling real-time feedback and interaction.
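One common way for a two-motor car to turn joystick values into movement is "arcade" differential-drive mixing: the Y axis sets forward speed and the X axis adds a turn. The sketch below assumes joystick values already scaled to -100..100 and a hypothetical deadzone of 8. It illustrates the technique and is not necessarily the mapping the final firmware will use.

```cpp
#include <algorithm>
#include <cassert>
#include <cstdlib>

// Signed wheel commands in percent of full speed (-100..100).
struct WheelSpeeds {
    int left;
    int right;
};

// Small deflections around the stick's rest position are treated as zero,
// so a slightly off-center joystick does not make the car creep.
int applyDeadzone(int value, int deadzone) {
    return std::abs(value) <= deadzone ? 0 : value;
}

// Arcade-style differential drive: Y sets forward speed, X adds a turn.
// Inputs are joystick values in -100..100; positive X turns right.
WheelSpeeds mixArcade(int joyX, int joyY, int deadzone = 8) {
    const int x = applyDeadzone(joyX, deadzone);
    const int y = applyDeadzone(joyY, deadzone);
    int left = y + x;
    int right = y - x;
    // If either wheel exceeds the range, scale both down by the same factor
    // so the ratio between wheels (the turning radius) is kept, up to rounding.
    const int peak = std::max(std::abs(left), std::abs(right));
    if (peak > 100) {
        left = left * 100 / peak;
        right = right * 100 / peak;
    }
    return {left, right};
}
```

For example, full forward with half right stick (`mixArcade(50, 100)`) yields roughly 100 % on the left wheel and 33 % on the right, producing a gentle right curve instead of clipping one side.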

This bidirectional interaction transforms the system into a learning platform where users can explore embedded systems, control logic, communication protocols, and real-world sensing in an intuitive and engaging way.

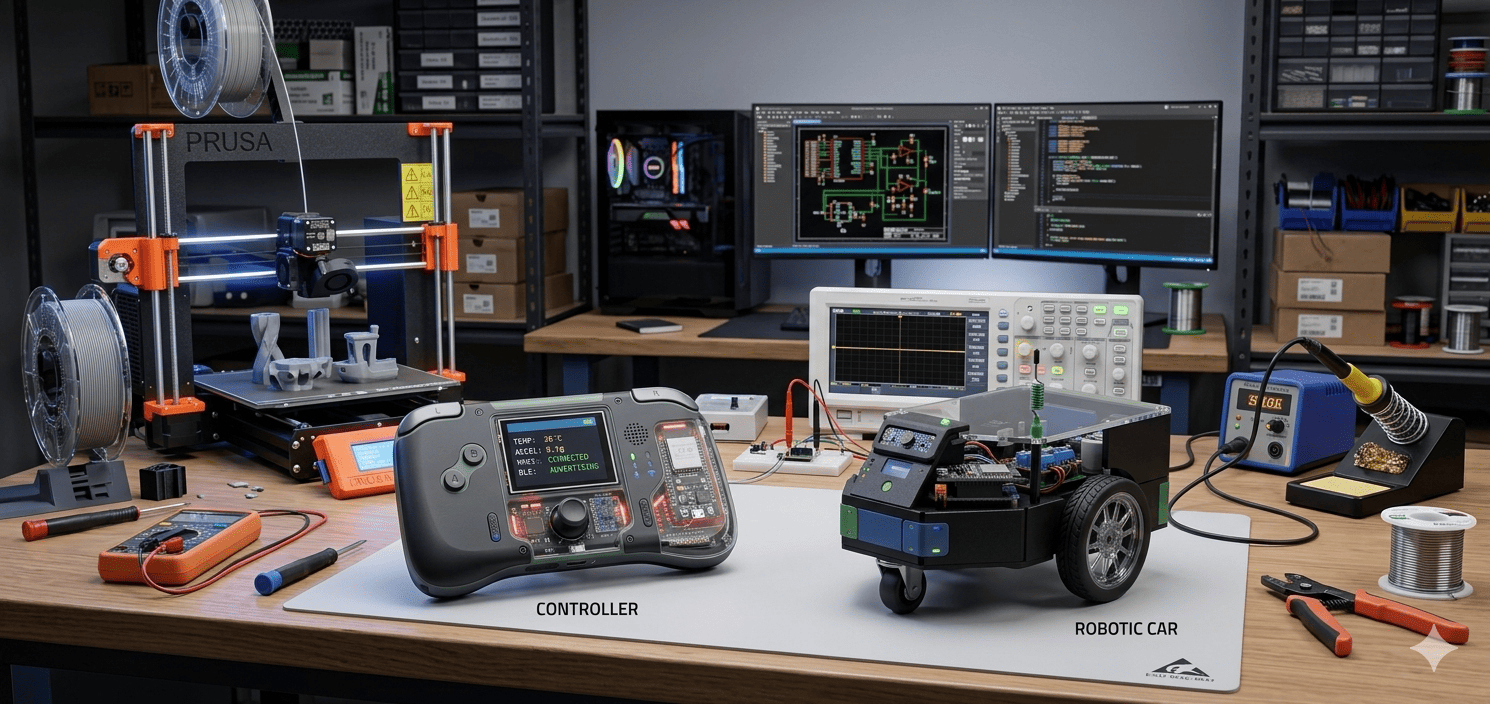

GameLab Controller system: handheld controller and robotic car interacting in a lab environment.

System architecture: controller inputs → wireless communication → robotic car → sensor feedback.

Existing Solutions

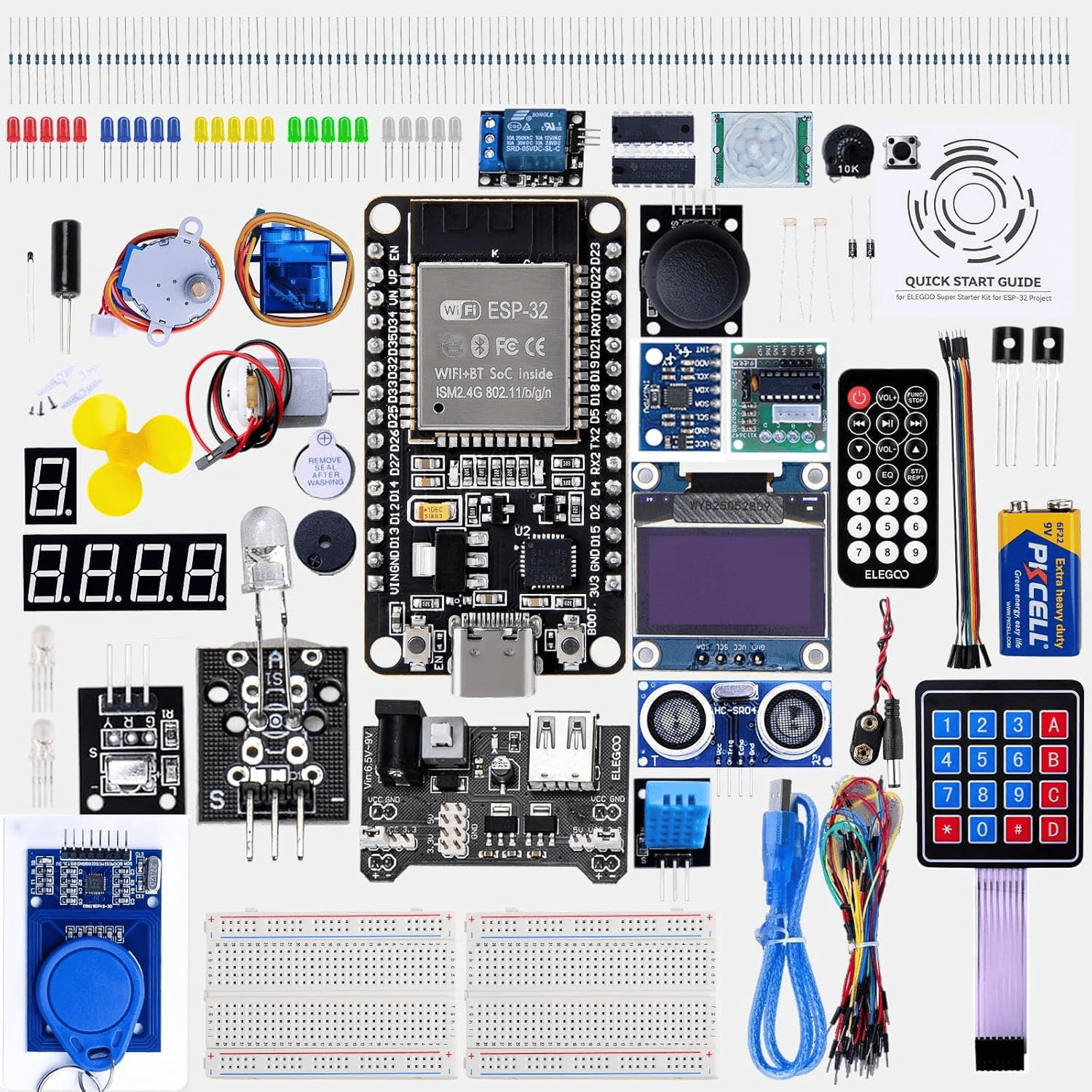

Currently, there are numerous commercially available starter kits based on platforms such as ESP32, Arduino, and Raspberry Pi Pico. These kits typically include a wide variety of input and output modules such as sensors, buttons, LEDs, and actuators.

While these kits are valuable for learning, they often expose beginners to a large number of components at once. This can create additional challenges, including managing physical connections, understanding circuit design, and interpreting datasheets without a structured learning path.

ESP32 Starter Kit (reference example). Source: Amazon.

Traditional ESP32 Starter Kits

These kits typically include dozens of independent modules such as LEDs, sensors, displays, and actuators. They are designed to expose users to a broad range of electronic components and programming examples.

However, they require the user to manually assemble circuits, understand pin configurations, and interpret datasheets for each component. This can become overwhelming, especially for beginners who are still developing fundamental skills in electronics and system integration.

As a result, learning often becomes fragmented, focusing on isolated experiments rather than a cohesive system.

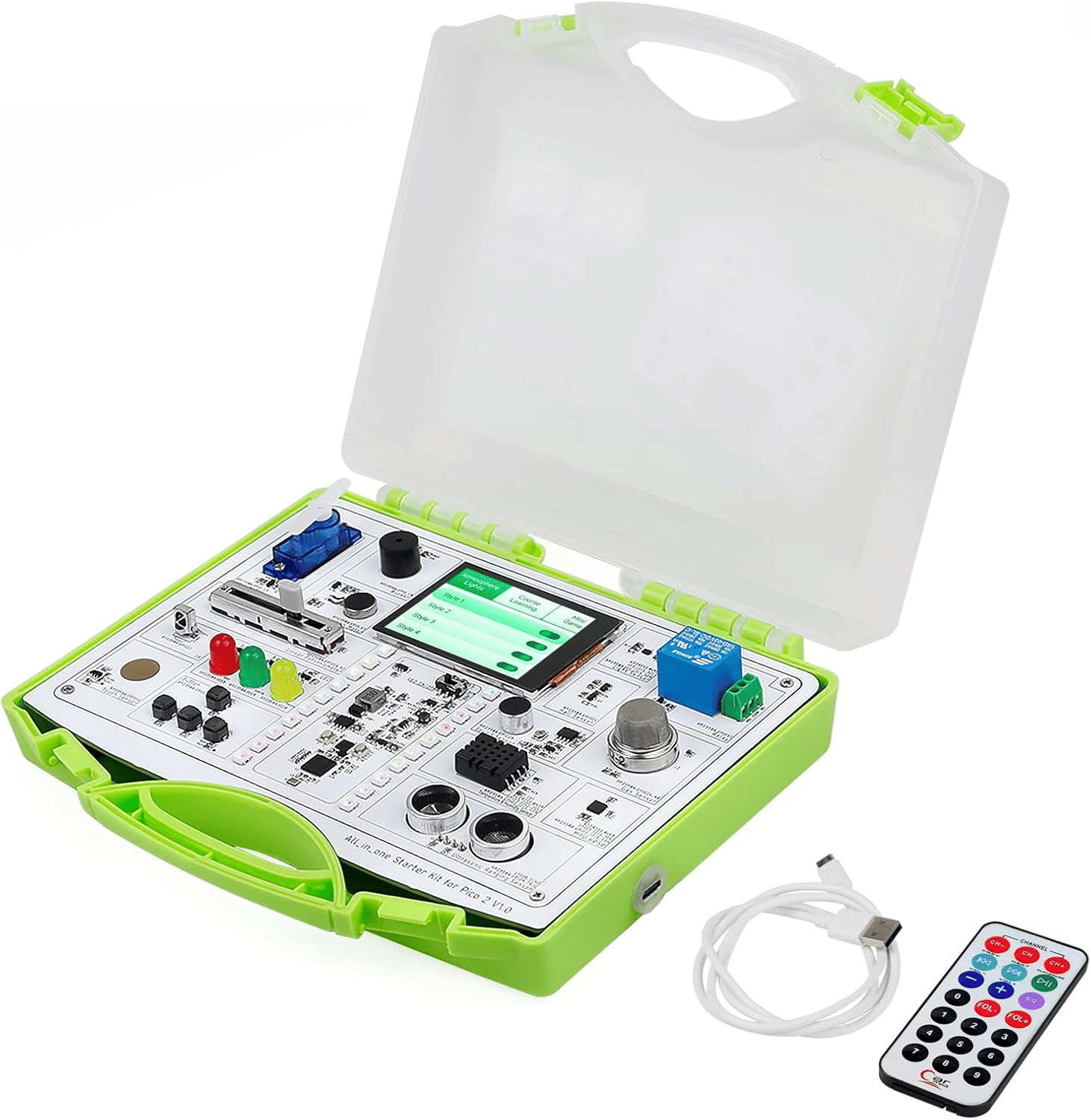

Integrated Learning Platforms

More recent solutions, such as integrated development boards, attempt to solve these issues by combining multiple peripherals into a single platform. These systems often include displays, sensors, buttons, and actuators pre-connected to the microcontroller.

This reduces the complexity of wiring and allows users to focus more on programming and system behavior rather than hardware setup. In this sense, they function as compact, portable electronics laboratories.

However, this level of integration limits flexibility, as users have less control over hardware configuration and system architecture.


Integrated development platform with embedded peripherals. Source: Amazon.

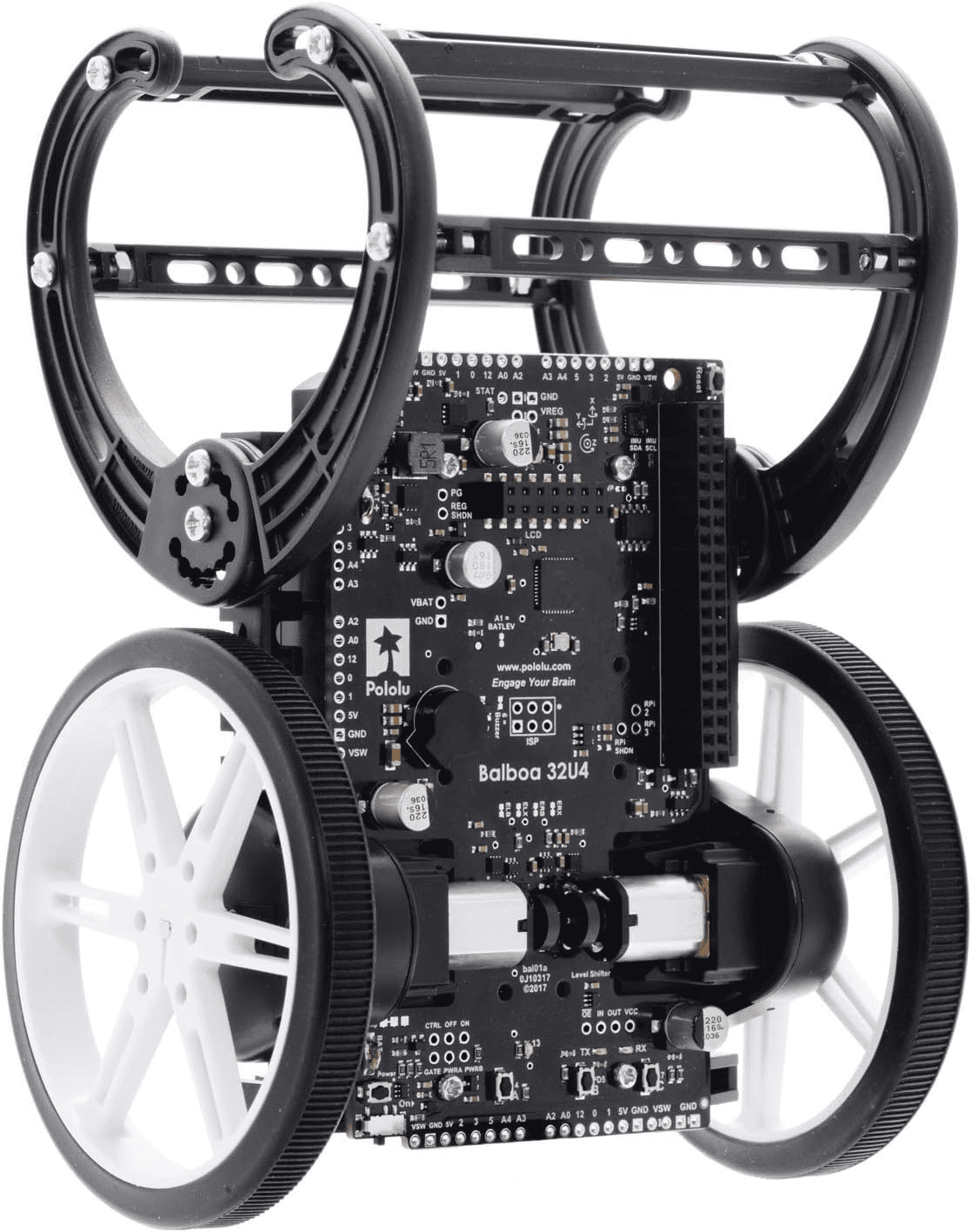

Integrated robotic platform with embedded control system. Source: Amazon.

Integrated Robotic Platforms

Robotic platforms such as the Pololu Balboa 32U4 represent a more advanced approach, integrating microcontrollers, motor drivers, and mechanical systems into a single robotic unit.

These systems are highly optimized for specific applications, such as balancing robots, and provide reliable performance with minimal setup.

However, they are often tied to proprietary ecosystems, limiting compatibility with external components and reducing their adaptability for broader experimentation and learning scenarios.

Project Differentiation

The GameLab Controller addresses these limitations by proposing an open-source, modular, and educational platform. Instead of overwhelming users with disconnected components, it organizes interaction through a unified system composed of a controller and a robotic platform.

Additionally, this project integrates multiple areas of digital fabrication, including 3D printing and PCB design, making it not only an electronics platform but also a comprehensive DIY development system.

By combining intuitive interaction (game controller) with physical response (robotic car), the system creates a more engaging and didactic learning experience. This makes it suitable for educational environments at different levels, including schools, universities, and hands-on workshops.

What did I design?

The project involves the design of both hardware and system architecture, including:

- A custom handheld controller with integrated inputs, display, and sensors

- A robotic car platform with actuation and environmental sensing capabilities

- Wireless communication between subsystems (BLE/WiFi)

- Modular interfaces for expansion and experimentation

Sources and References

The development of this project is based on knowledge acquired throughout Fab Academy, as well as prior experience in embedded systems and mechatronics. Additional references include microcontroller documentation (ESP32), sensor datasheets, and open-source hardware design practices.

Materials and Components

The system is built using a combination of electronic and mechanical components, including microcontrollers, sensors, actuators, and fabricated structural parts.

- ESP32 microcontrollers (controller and car)

- Joystick and push buttons

- TFT LCD Display (1.8", 128×160)

- IMU sensor (MPU6050)

- Motor driver (H-Bridge)

- DC motors and wheels

- Environmental sensors (temperature, humidity, gas)

Component Sourcing

Most electronic components are sourced from the Fab Lab inventory, while additional modules may be obtained from local suppliers. Structural components will be fabricated using digital fabrication processes available in the lab.

The following section illustrates the transition from initial concept sketches to a refined digital prototype, highlighting the evolution of the system design.

Concept & Peripheral Selection

The initial design phase focused on defining how users would interact with the system and how that interaction would translate into physical behavior. Instead of selecting specific components from the beginning, the system was first conceptualized in terms of functional blocks, allowing a clear understanding of the required inputs, outputs, and system responses.

This approach makes it possible to design the system around user experience and interaction flow, rather than being constrained by specific hardware choices. Once the functionality is clearly defined, the appropriate components can be selected to implement each block.

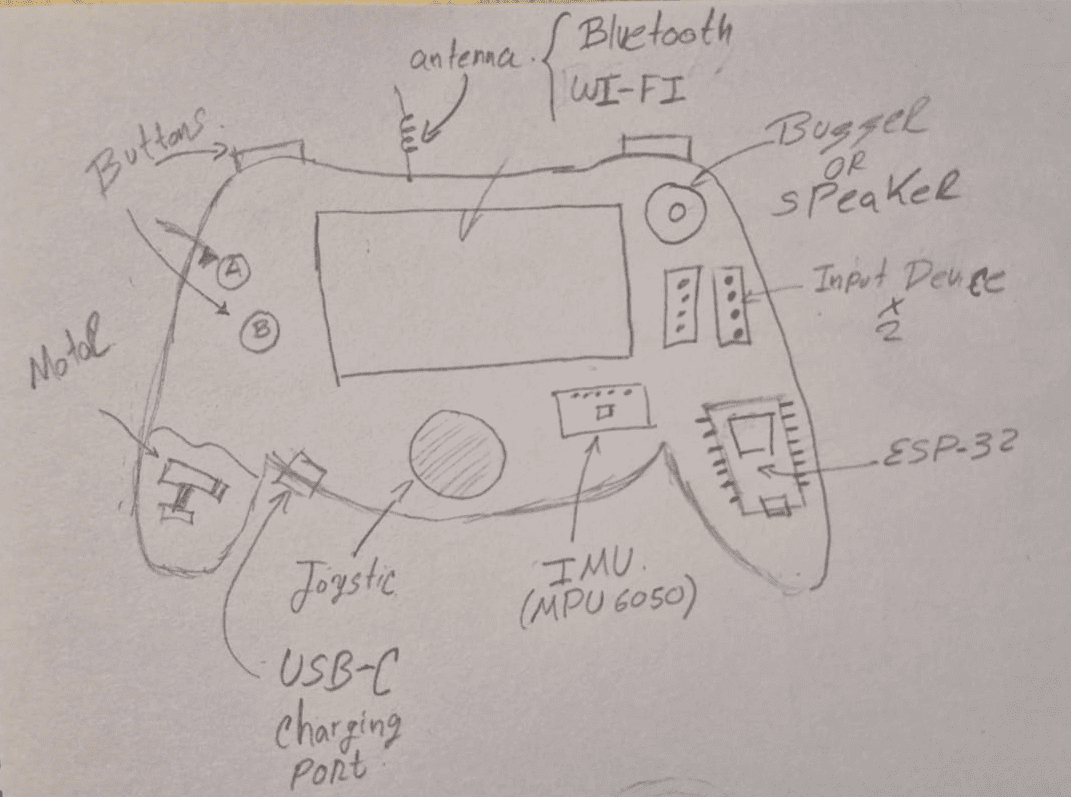

Controller Concept

The controller was designed as the primary interaction interface between the user and the system. Its purpose is to capture different types of input and translate them into control signals that can be sent to the robotic platform.

At the conceptual level, the controller was divided into functional modules:

- Display: Provides visual feedback and system information

- Motion sensor (IMU): Enables gesture-based interaction

- Environmental sensor: Allows real-time monitoring

- Joystick: Controls direction and movement

- Push buttons: Enable discrete commands and interactions

- Audio feedback: Provides audible cues for user actions and system events

By defining these elements as functional blocks, the controller design remains flexible and scalable, allowing future expansion and adaptation to different learning scenarios.
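The functional-block idea maps naturally onto a small common interface in firmware. The sketch below is one possible shape, assuming each block can be initialized once and then serviced periodically at its own rate (for example a fast joystick poll and a slow environmental read). The class names `Module`, `ModuleRegistry`, and `StubModule` are hypothetical, and real hardware drivers are left out.

```cpp
#include <cassert>
#include <cstddef>
#include <memory>
#include <utility>
#include <vector>

// Hypothetical common interface for the controller's functional blocks
// (display, IMU, environmental sensor, joystick, buttons, audio).
class Module {
public:
    explicit Module(unsigned long periodMs) : periodMs_(periodMs) {}
    virtual ~Module() = default;
    virtual bool begin() = 0;  // set up hardware; return false if the part is missing
    virtual void tick() = 0;   // one unit of work (read a sensor, redraw, ...)

    // Calls tick() once this module's own period has elapsed. Unsigned
    // subtraction stays correct when an unsigned long millisecond counter
    // (such as Arduino's millis()) wraps around.
    void poll(unsigned long nowMs) {
        if (nowMs - lastMs_ >= periodMs_) {
            lastMs_ = nowMs;
            tick();
        }
    }

private:
    unsigned long periodMs_;
    unsigned long lastMs_ = 0;
};

class ModuleRegistry {
public:
    void add(std::unique_ptr<Module> m) { modules_.push_back(std::move(m)); }

    // Initializes every module and keeps only those that started, so one
    // missing peripheral does not stop the rest of the controller.
    std::size_t beginAll() {
        std::vector<std::unique_ptr<Module>> ready;
        for (auto& m : modules_) {
            if (m->begin()) ready.push_back(std::move(m));
        }
        modules_ = std::move(ready);
        return modules_.size();
    }

    void pollAll(unsigned long nowMs) {
        for (auto& m : modules_) m->poll(nowMs);
    }

private:
    std::vector<std::unique_ptr<Module>> modules_;
};

// Stand-in used for desk testing without hardware: counts how often it runs.
class StubModule : public Module {
public:
    StubModule(unsigned long periodMs, bool startsOk, int* counter)
        : Module(periodMs), startsOk_(startsOk), counter_(counter) {}
    bool begin() override { return startsOk_; }
    void tick() override { ++*counter_; }

private:
    bool startsOk_;
    int* counter_;
};
```

With this shape, adding a new peripheral means writing one more `Module` subclass and registering it, while the main loop only calls `pollAll()` with the current time.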

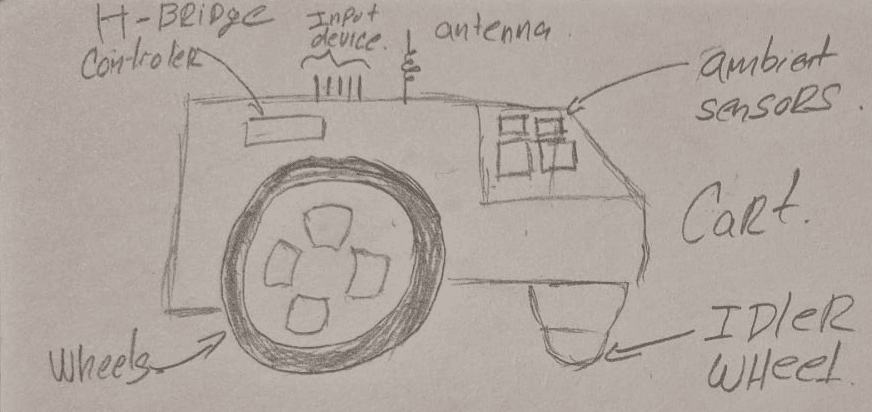

Robotic Platform Concept

The robotic platform was conceptualized as the physical response system. Its role is to receive commands from the controller and translate them into motion and environmental interaction.

Similar to the controller, the system was defined in terms of functional capabilities:

- Motor actuation: Enables movement and navigation

- Environmental sensing: Collects real-world data

- Wireless communication: Connects with the controller

- Power system: Provides onboard power for untethered operation

This abstraction allows the robotic system to be understood as a modular platform, where each function can be independently developed, tested, and improved.
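As an example of the environmental-sensing block, the DHT22 selected later for temperature and humidity returns each reading as a 40-bit frame: two bytes of humidity ×10, two bytes of temperature ×10 with the sign in the top bit, and a checksum byte. The sketch below decodes an already-captured frame. It assumes the timing-critical single-wire capture is handled elsewhere (typically by an existing Arduino library), and gas sensors are not covered.

```cpp
#include <cassert>
#include <cstdint>
#include <optional>

struct EnvReading {
    float humidityPct;
    float temperatureC;
};

// Decodes the 5-byte (40-bit) frame a DHT22/AM2302 sends after a read request:
// bytes 0-1 humidity x10, bytes 2-3 temperature x10 (bit 7 of byte 2 = sign),
// byte 4 = low 8 bits of the sum of bytes 0-3.
std::optional<EnvReading> decodeDht22(const uint8_t frame[5]) {
    const uint8_t sum = static_cast<uint8_t>(frame[0] + frame[1] + frame[2] + frame[3]);
    if (sum != frame[4]) return std::nullopt;  // corrupted read: retry later

    const uint16_t rawHum = static_cast<uint16_t>((frame[0] << 8) | frame[1]);
    const uint16_t rawTemp = static_cast<uint16_t>(((frame[2] & 0x7F) << 8) | frame[3]);

    float tempC = rawTemp / 10.0f;
    if (frame[2] & 0x80) tempC = -tempC;  // sign-magnitude, not two's complement
    return EnvReading{rawHum / 10.0f, tempC};
}
```

For instance, the frame `02 8C 01 5F EE` decodes to 65.2 %RH and 35.1 °C. Because the DHT22 should not be sampled more often than about once every two seconds, this block would run at a much slower rate than motor control.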

Hardware Selection

After defining the functional architecture of the system, the next step consisted of selecting specific hardware components capable of implementing each subsystem. The selection process was based on criteria such as size, number of available GPIOs, communication capabilities, power consumption, and ease of integration.

This stage translates the conceptual design into a real embedded system, where each functional block is mapped to a physical component.

Controller – ESP32-S2 Based Design

The controller requires a microcontroller capable of handling multiple inputs, real-time interaction, and user interface elements. For this reason, the ESP32-S2 architecture was selected.

This microcontroller provides more GPIO pins than smaller alternatives, as well as integrated wireless communication, making it suitable for managing multiple peripherals simultaneously.

The conceptual modules were implemented using the following components:

- TFT LCD Display (1.8", 128×160): user interface and feedback

- MPU6050 IMU: motion sensing and gesture interaction

- DHT22 Sensor: environmental monitoring

- Joystick module: directional control

- Push buttons: discrete interaction inputs

- Buzzer / speaker: audio feedback

This configuration allows the controller to function as a complete interaction device, capable of capturing user input, processing it, and communicating with the robotic system in real time.
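For the MPU6050's gesture role, a simple starting point is estimating tilt from the accelerometer's gravity vector and mapping strong tilts to discrete gestures. The sketch below assumes the sensor's default ±2 g range (16384 LSB per g) and raw readings already obtained over I2C. The 25° threshold, the `Gesture` names, and the sign conventions are hypothetical and depend on how the board is mounted in the enclosure.

```cpp
#include <cassert>
#include <cmath>
#include <cstdint>

// At its default +/-2 g full-scale setting the MPU6050 reports 16384 LSB per g.
constexpr float kLsbPerG = 16384.0f;
constexpr float kRadToDeg = 57.2957795f;

struct Tilt {
    float pitchDeg;
    float rollDeg;
};

// Estimates tilt from the gravity vector alone. Valid while the controller is
// held fairly still; fast motion would need gyro fusion (e.g. a complementary filter).
Tilt tiltFromAccel(int16_t rawX, int16_t rawY, int16_t rawZ) {
    const float ax = rawX / kLsbPerG;
    const float ay = rawY / kLsbPerG;
    const float az = rawZ / kLsbPerG;
    const float roll = std::atan2(ay, az) * kRadToDeg;
    const float pitch = std::atan2(-ax, std::sqrt(ay * ay + az * az)) * kRadToDeg;
    return {pitch, roll};
}

enum class Gesture { None, TiltForward, TiltBack, TiltLeft, TiltRight };

// Hypothetical mapping: the axis with the larger tilt wins if it passes the
// threshold. Which sign means "forward" depends on board orientation.
Gesture classifyTilt(const Tilt& t, float thresholdDeg = 25.0f) {
    const float p = std::fabs(t.pitchDeg);
    const float r = std::fabs(t.rollDeg);
    if (p >= r && p > thresholdDeg) return t.pitchDeg > 0 ? Gesture::TiltForward : Gesture::TiltBack;
    if (r > thresholdDeg) return t.rollDeg > 0 ? Gesture::TiltRight : Gesture::TiltLeft;
    return Gesture::None;
}
```

Quantizing tilt into a few gestures keeps the wireless message small and makes the behavior easy for learners to predict. Continuous pitch and roll values can still be sent when finer control is wanted.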

Robotic Car – XIAO ESP32-C3

The robotic platform requires a compact and efficient microcontroller capable of handling motor control, sensor acquisition, and wireless communication. For this purpose, the XIAO ESP32-C3 was selected.

This board offers a small form factor, integrated WiFi/BLE connectivity, and sufficient GPIO capabilities, making it ideal for embedded mobile applications where space and power efficiency are critical.

The system integrates the following components:

- XIAO ESP32-C3: main processing unit

- Motor driver (H-Bridge): controls motor direction and speed

- DC motors: provide movement and actuation

- DHT22 / Gas sensors: enable environmental data acquisition

This configuration allows the robotic platform to act as a responsive, self-contained system, capable of executing commands and generating real-world feedback through sensors.
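To show how the H-bridge "controls motor direction and speed", the sketch below converts a signed speed into PWM duty cycles for one motor channel. The specific driver has not been chosen yet, so it assumes the common two-input truth table used by drivers such as the DRV8833 (IN1 PWM with IN2 low = forward, the reverse for backward, both low = coast, both high = brake) and 8-bit PWM resolution. Drivers with a separate enable pin, such as the L298N, would need a different mapping.

```cpp
#include <algorithm>
#include <cassert>
#include <cstdint>
#include <cstdlib>

// 8-bit PWM duty cycles (0..255) for the two inputs of one H-bridge channel.
struct BridgeInputs {
    uint8_t in1;
    uint8_t in2;
};

// Maps a signed speed (-100..100 %) to a two-input H-bridge, assuming the
// common truth table: IN1 PWM / IN2 low = forward, IN1 low / IN2 PWM = reverse,
// both low = coast, both high = brake.
BridgeInputs speedToBridge(int speedPct, bool brakeAtZero = false) {
    speedPct = std::clamp(speedPct, -100, 100);
    if (speedPct == 0) {
        return brakeAtZero ? BridgeInputs{255, 255} : BridgeInputs{0, 0};
    }
    const auto duty = static_cast<uint8_t>(std::abs(speedPct) * 255 / 100);
    return speedPct > 0 ? BridgeInputs{duty, 0} : BridgeInputs{0, duty};
}
```

Each wheel would get its own call, and the resulting duty values would be written to the two input pins through the ESP32's PWM peripheral. Choosing coast or brake at zero speed is a design decision: braking stops the car faster, while coasting is gentler on the gearbox.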

Project Planning

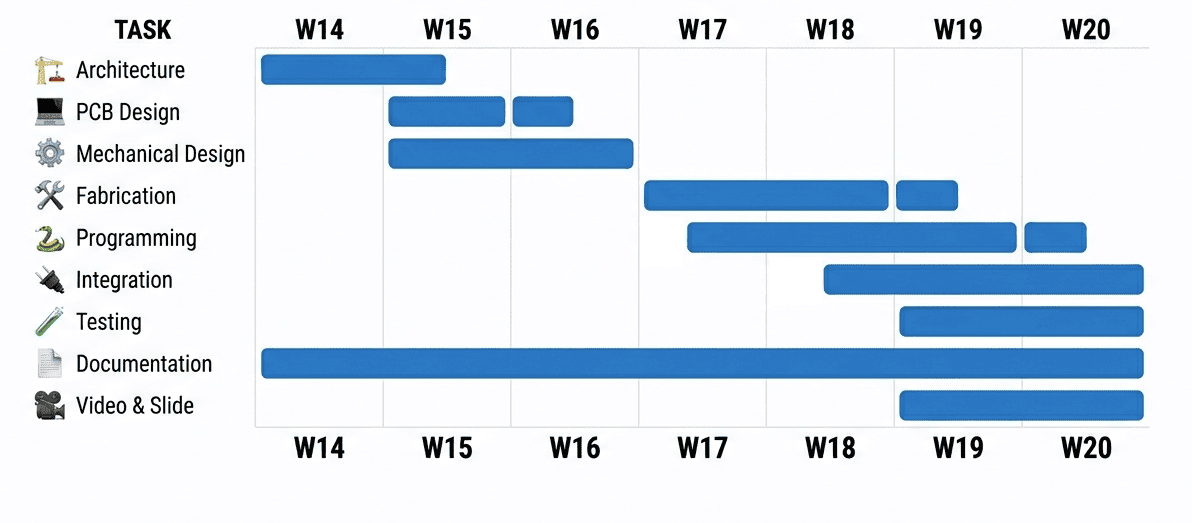

The development of the final project is structured over the remaining weeks of the Fab Academy, focusing on a progressive workflow that integrates design, fabrication, programming, and system integration.

The planning strategy prioritizes early definition of the system architecture, followed by fabrication and iterative testing, ensuring that sufficient time is allocated for debugging and refinement before final delivery.

Development Phases

- Week 14: Final system architecture and detailed design

- Week 15: PCB design and mechanical design

- Week 16: Fabrication (PCB production and 3D printing)

- Week 17: Programming (controller and robotic system)

- Week 18: System integration and testing

- Week 19: Debugging, optimization, and final adjustments

- Final Week: Documentation, video, and presentation

Project timeline showing development phases from design to final delivery.

Development Strategy

The project follows an incremental development approach, starting from a Minimum Viable Product (MVP) and progressively adding functionality. This ensures that the system remains operational throughout the process while allowing iterative improvements.

A dedicated debugging phase is included to address integration issues, ensuring system stability and reliability before final submission.

Reflection

Errors & Fixes

Downloads

The following files are related to the development of this page, including the template used for the website and the reference guide provided for its implementation.

📦 Fab Academy Template

Compressed file containing the base structure used to develop the website. This template simplifies the documentation process and provides reusable components.

📄 Template Guide

Quick guide explaining how to use the template, organize files, and structure weekly documentation effectively.