Project

Final Project

Final project presentation

slide (presentation.png)

video (presentation.mp4)

PoTone - Live demo version (3 min 22 seconds)

Final project documentation

Table of contents

- Concept

- Architecture

- Design

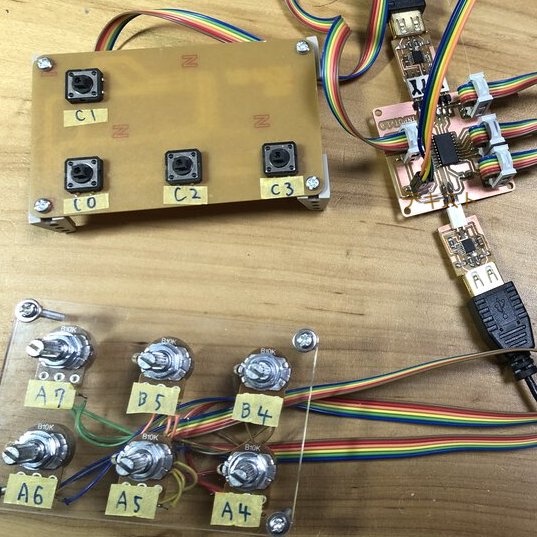

- Input Devices

- Networking / Output device

- Application Programming

- Assembling

- Bill of Materials

- Project Development

- Evaluation

- Archives

- Files

- Additional projects

Concept

What will it do?

PoTone is an adaptive, interactive, real-time MIDI musical instrument.

Players can make sounds and play rhythm sequences just by putting things on top of the instrument. As with cooking and eating “Nabe” (鍋, a hotpot dish in Asian countries), multiple players can play one device at the same time.

Anyone can:

- play easily - simple interface

- play with anyone - multiple players

- play anywhere - portable

Who's done what beforehand?

- James Fletcher, FA2014, made an ATtiny44-based modular sound device.

- Fiore Basile, FA2014, made a tiny MIDI device as an artifact of "input device" week.

- Xiaofei Liu, FA2019, made a disc player that can translate graphics into music!

- Deepti Dut, FA2017, who made the Soft MIDI Controller, explains the coordination via Device - loop MIDI - SerialMIDI - DAW (Ableton).

- Reactable (YouTube video) also makes sounds from objects placed on it, in a more highly graphical and complex way.

- This YouTube video explains how to turn an Arduino into a USB MIDI device by updating the firmware using the "Arcore" library.

- A YouTube video of a DIY Arduino MIDI controller project explains how it works and how to make it.

I want to add my ideas of "making sounds just by putting objects down" and "multiple players playing one device at the same time" to the excellent existing projects above.

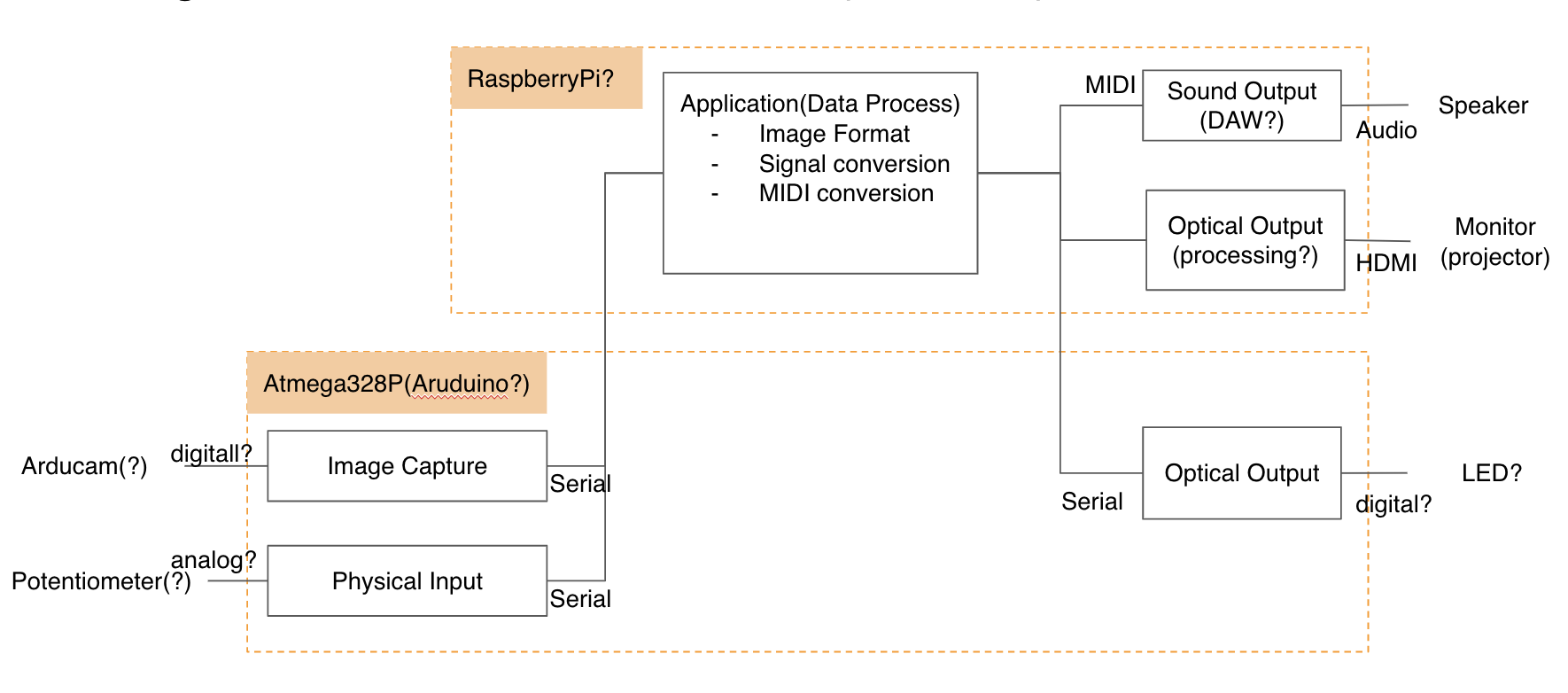

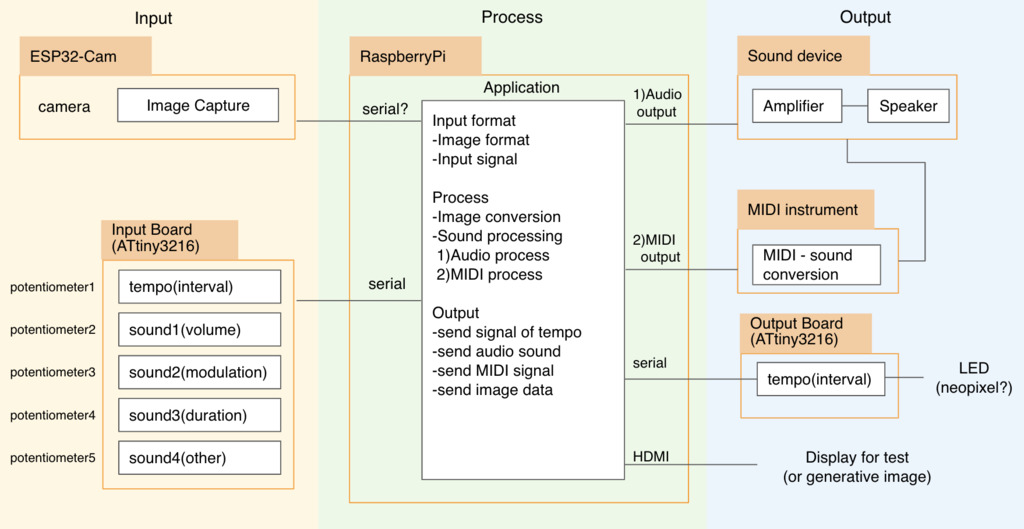

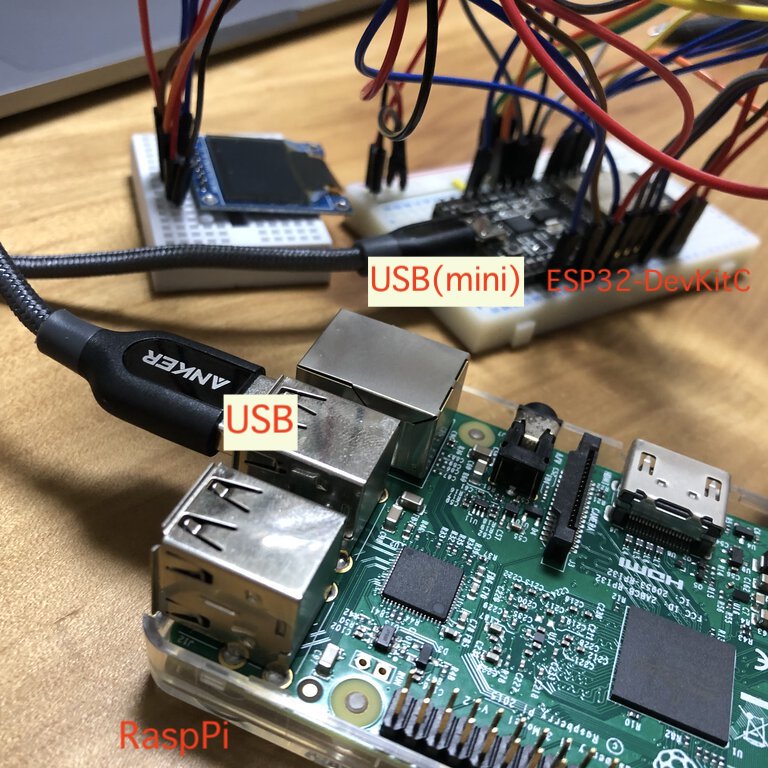

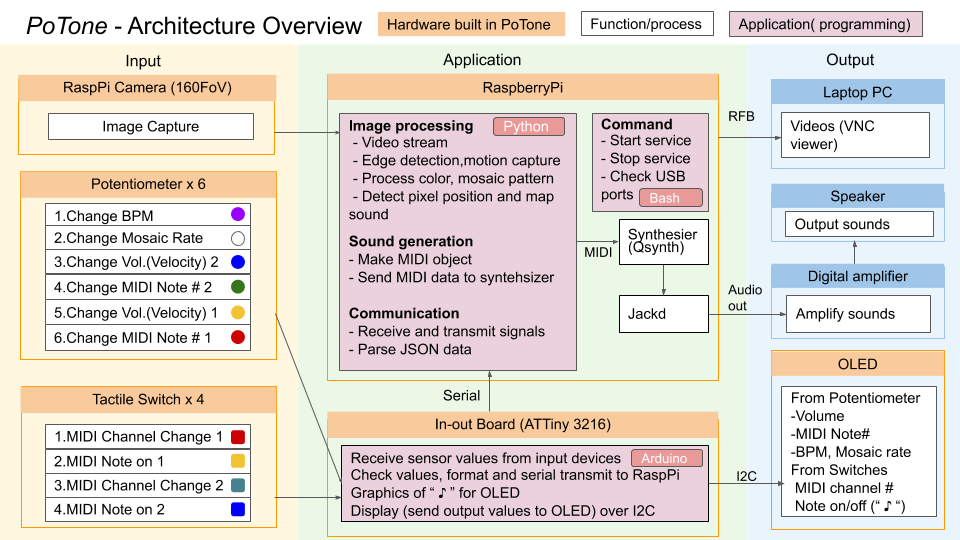

Architecture

Architectural decision

The reasons why I decided to make, drop, use or not use each part are as follows.

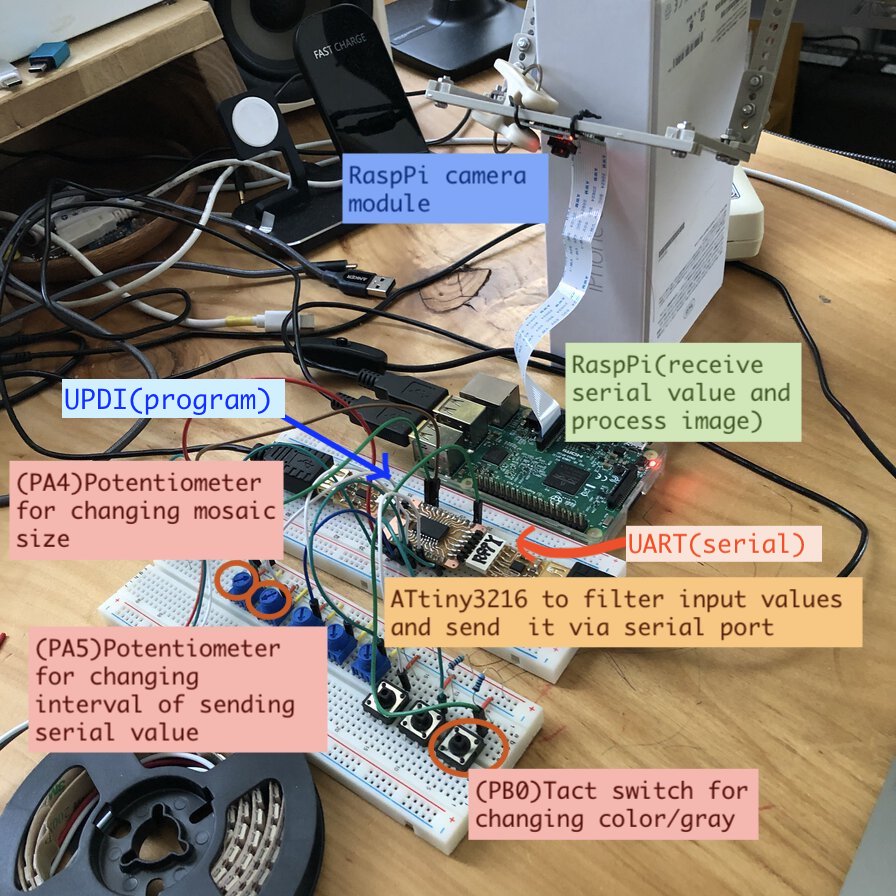

Use a camera to capture the shape of objects on top

- To check the shape of objects in real time, I selected a camera because it is easy to implement in combination with software. Open source libraries (e.g. OpenCV) allow developers to implement image recognition instead of using the sensor or remote IC based solutions listed as alternatives below.

- Alternatives

| Input device | What and how to capture the object shape | Evaluation |
| --- | --- | --- |
| RFID | Proximity to a tag on the object side | RFID requires a tag on the object side. It does not fit the goal of "putting anything and capturing the shape". |
| Capacitive response | Step response to the amount of current when touched | Capacitive response requires something that can carry current, like a finger touch or water. It does not fit the goal of "putting anything and capturing the shape". |
| Light sensor / photocell | Brightness captured as a resistor value that responds to the amount of light, or color using a resonating LED color | It is difficult to capture many finely segmented positions. Imagine that 50 beans are thrown on the table and you want to capture the position of each bean and turn them into MIDI signals. It could be done with 50 photocell sensors on the table, but I do not think that is an intelligent solution. |

Use RaspPi Camera

- As the central application runs on a Raspberry Pi, the easiest way to connect a camera to the application and capture frames in real time is the Raspberry Pi camera module, even though it is relatively more expensive than other modules like the ESP32-CAM.

- Alternatives:

- Arduino camera modules are relatively cheap, and there are some examples of using them on Arduino-based systems. However, their performance is not good enough for capturing images in real time, and transmitting images to the main application would be slow.

- The ESP32-CAM can work as a web camera: a web server is launched on the ESP32, and capturing the video stream from that server is one option. However, this project does not need a web camera (the camera will be embedded in the box). Also, the ESP32-CAM does not have a "certification of conformance to technical standards" (技適: 技術基準適合証明) in Japan.

- Another option is to wire a camera module (like the OV2640) to an ESP32 module using the ESP32 camera library. However, that requires an architecture for transmitting images to the main application, which would add performance overhead and complexity.
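As a rough illustration of why a camera suits "putting anything and capturing the shape", the sketch below reduces a grayscale frame to a coarse grid and reads each column as one step of a rhythm sequence. It is only an assumption about how such processing could work, not the code in image.py: `frame_to_grid`, `grid_to_steps`, the threshold, the base note and the row-to-note layout are all hypothetical. In the real application the frame would come from the camera through a library such as OpenCV; here a plain nested list stands in for it.

```python
def frame_to_grid(gray, rows, cols, threshold=128):
    """Downsample a grayscale frame (list of rows of 0-255 ints) to a
    rows x cols grid of booleans.

    A cell is True when its mean brightness is below `threshold`,
    i.e. when a dark object is assumed to cover that cell.
    """
    height, width = len(gray), len(gray[0])
    cell_h, cell_w = height // rows, width // cols
    grid = []
    for r in range(rows):
        grid_row = []
        for c in range(cols):
            total = 0
            for y in range(r * cell_h, (r + 1) * cell_h):
                total += sum(gray[y][c * cell_w:(c + 1) * cell_w])
            mean = total / (cell_h * cell_w)
            grid_row.append(mean < threshold)
        grid.append(grid_row)
    return grid


def grid_to_steps(grid, base_note=60):
    """Read each grid column as one sequencer step.

    Each covered row in a column becomes one MIDI note number
    (assumed layout: row 0 -> base_note, row 1 -> base_note + 1, ...).
    """
    steps = []
    for c in range(len(grid[0])):
        notes = [base_note + r for r in range(len(grid)) if grid[r][c]]
        steps.append(notes)
    return steps


if __name__ == "__main__":
    # White 8x8 frame with one dark object in the top-left corner.
    frame = [[255] * 8 for _ in range(8)]
    for y in range(2):
        for x in range(2):
            frame[y][x] = 0
    print(grid_to_steps(frame_to_grid(frame, rows=4, cols=4)))
```

Changing `rows` and `cols` changes how finely the surface is segmented, which is the kind of value a "mosaic ratio" knob could control.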

The role of potentiometers and tactile switches

- Use potentiometers for "linear" input values like BPM (tempo), sound velocity (volume), scale (MIDI note number) or mosaic ratio (resolution).

- Use switches for "discrete" input values like MIDI channels (instruments) or triggers to make sound (MIDI note on/off).

- Alternatives: Slide potentiometers or capacitive response sensors take up space, though they could provide a great experience.
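The split between "linear" and "discrete" inputs can be sketched as a simple scaling step. This is a minimal example under stated assumptions, not the project's code: it assumes the potentiometer arrives as a 10-bit ADC reading (0 to 1023), and the BPM range of 60 to 180 and the four steps per beat are made-up values for illustration.

```python
ADC_MAX = 1023  # assumption: 10-bit ADC reading from the potentiometer


def scale(raw, out_min, out_max, in_max=ADC_MAX):
    """Linearly map a raw reading in 0..in_max to out_min..out_max (integers)."""
    raw = max(0, min(in_max, raw))  # clamp noisy or out-of-range readings
    return out_min + (out_max - out_min) * raw // in_max


def to_bpm(raw):
    """Tempo knob: hypothetical range of 60 to 180 BPM."""
    return scale(raw, 60, 180)


def to_velocity(raw):
    """Volume knob: MIDI velocity is always 0 to 127."""
    return scale(raw, 0, 127)


def step_seconds(bpm, steps_per_beat=4):
    """Duration of one sequencer step at the given tempo."""
    return 60.0 / (bpm * steps_per_beat)


if __name__ == "__main__":
    for raw in (0, 512, 1023):
        bpm = to_bpm(raw)
        print(raw, bpm, to_velocity(raw), step_seconds(bpm))
```

A switch, by contrast, needs no scaling: its 0 or 1 state can be used directly to select a channel or trigger a note.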

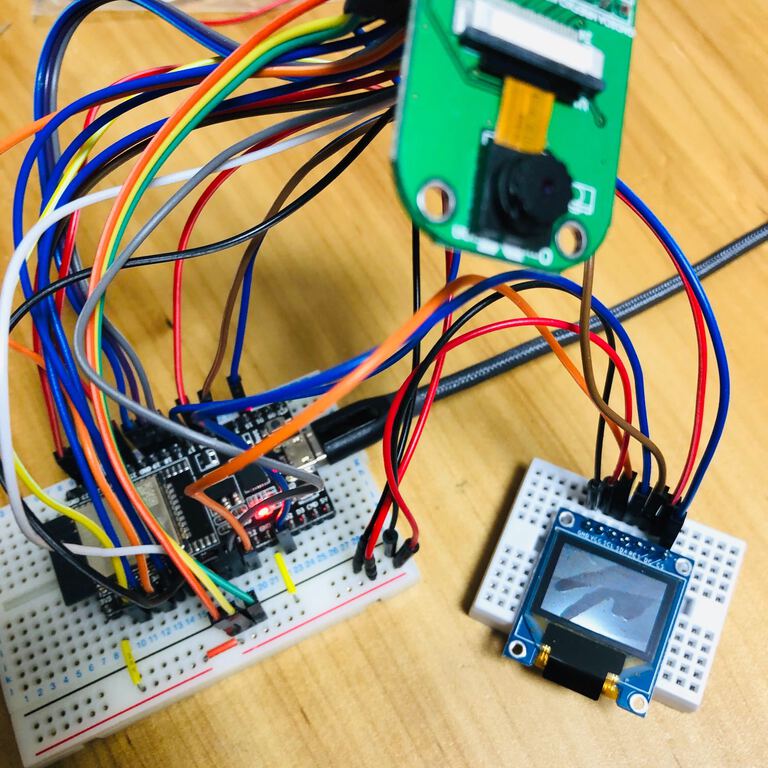

Use a microcontroller (ATtiny3216) for handling input/output values

- The AVR 1-series is a higher-performance microcontroller family with more memory than the earlier ATtiny series of AVR microcontrollers.

- It supports an internal clock and programming over the UPDI interface (1 pin), so assembling the board is also easier.

- The 20 pins of the ATtiny3216 can be assigned to 10 input devices (6 potentiometers and 4 tactile switches), the I2C interface to the OLED (SCL, SDA), and the UPDI and FTDI serial interfaces, all on one in-out board. Having many pins allows a rich configuration for changing sounds and images.

- Alternatives: I could not try ARM microcontrollers because there are still not many examples on the internet, though ARM would offer higher performance with energy efficiency.
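On the Raspberry Pi side, the values sent by the in-out board over FTDI serial have to be decoded before they can drive sound and image settings. The sketch below assumes a message format that is not stated on this page: one JSON object per line with a `pot` list of 6 readings and a `sw` list of 4 switch states. The function name `parse_line` and both keys are hypothetical.

```python
import json

NUM_POTS = 6      # from the board description: 6 potentiometers
NUM_SWITCHES = 4  # and 4 tactile switches


def parse_line(line):
    """Parse one serial line (bytes) into (pots, switches).

    Returns None for malformed lines, so that a corrupted message
    is skipped instead of stopping the performance.
    """
    try:
        msg = json.loads(line.decode("utf-8").strip())
        pots = [int(v) for v in msg["pot"]]
        switches = [bool(v) for v in msg["sw"]]
    except (UnicodeDecodeError, ValueError, KeyError, TypeError):
        return None
    if len(pots) != NUM_POTS or len(switches) != NUM_SWITCHES:
        return None
    return pots, switches


if __name__ == "__main__":
    # Stand-in for a line read from the serial port.
    sample = b'{"pot": [0, 256, 512, 768, 1000, 1023], "sw": [0, 1, 0, 0]}\n'
    print(parse_line(sample))
    print(parse_line(b"noise on the line\n"))
```

Returning `None` rather than raising keeps the receiving loop simple: it can read the next line and carry on.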

Use Raspberry Pi for the central application

- Because this project needs to handle image processing and sound generation simultaneously, I need to implement a parallel processing system. The main application also needs to receive signals from the input devices at the same time. Raspbian OS on the Raspberry Pi supports multi-process and multi-thread programs.

- Alternatives:

- AVR microcontrollers and ESP32/ESP8266 modules do not support multi-process or multi-thread applications. Some libraries like "Protothread" for Arduino appear to support multi-threading, but they are limited. For example, "Protothread" is not sufficient for dividing a heavy process into multiple threads because of how it behaves.

- Making multiple boards and assigning each function to a separate board might solve parallel processing. However, it would add complexity to the entire system.

Use Python and its libraries for the application to receive signals, process images and generate sound

- I need software libraries to implement image recognition and MIDI processing. These are available as Python packages such as OpenCV, rtmidi and Mido. Python also has multi-threading libraries like concurrent.futures (even though the parallelism they provide is only virtual).

- Alternatives: C++ supports multi-threading, image recognition and MIDI processing through its libraries. As I have used Python in my job, although my experience is quite limited, I decided to use Python. C++ would perform faster than Python so it would be next
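The idea of receiving input and generating sound at the same time can be sketched with `concurrent.futures` and a queue. This is an illustrative outline only, not the structure of potone.py: the worker names, the sentinel convention and the event format are assumptions, and a list stands in for the serial port and the MIDI output.

```python
import queue
from concurrent.futures import ThreadPoolExecutor

STOP = None  # sentinel that tells the consumer there is nothing more to play


def receive_inputs(events, out_q):
    """Producer: stands in for the worker that reads the serial port."""
    for event in events:
        out_q.put(event)
    out_q.put(STOP)


def play_sound(in_q, played):
    """Consumer: stands in for the worker that sends MIDI messages."""
    while True:
        event = in_q.get()
        if event is STOP:
            break
        played.append(("note_on", event))


def run_pipeline(events):
    """Run both workers concurrently and return what was 'played'."""
    q = queue.Queue()
    played = []
    with ThreadPoolExecutor(max_workers=2) as pool:
        producer = pool.submit(receive_inputs, events, q)
        consumer = pool.submit(play_sound, q, played)
        producer.result()  # re-raise any exception from the worker
        consumer.result()
    return played


if __name__ == "__main__":
    print(run_pipeline([60, 62, 64]))
```

In CPython, threads share the global interpreter lock, so this pattern mainly helps with waiting on I/O such as serial reads and MIDI output; heavy image processing would still compete for the same interpreter.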

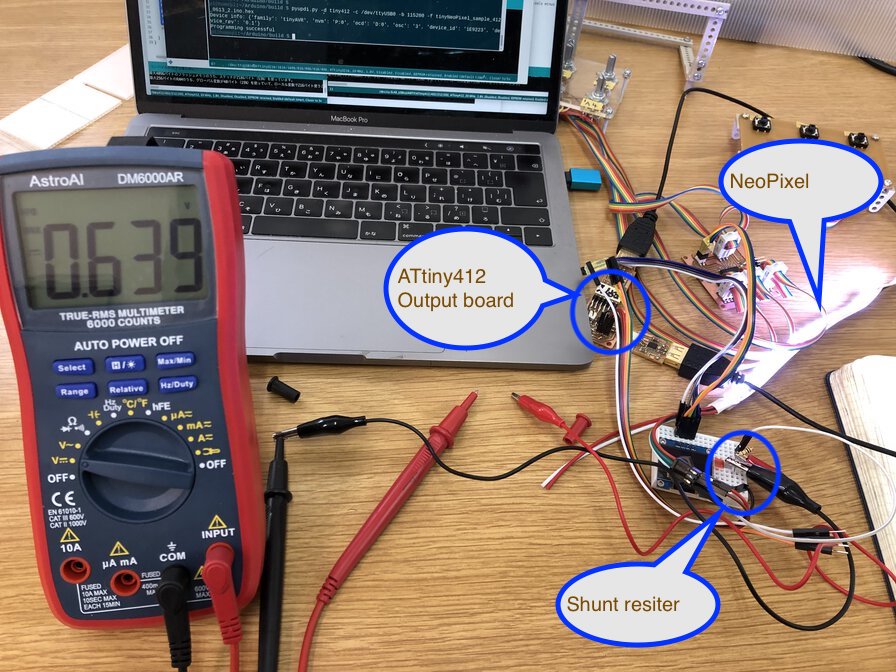

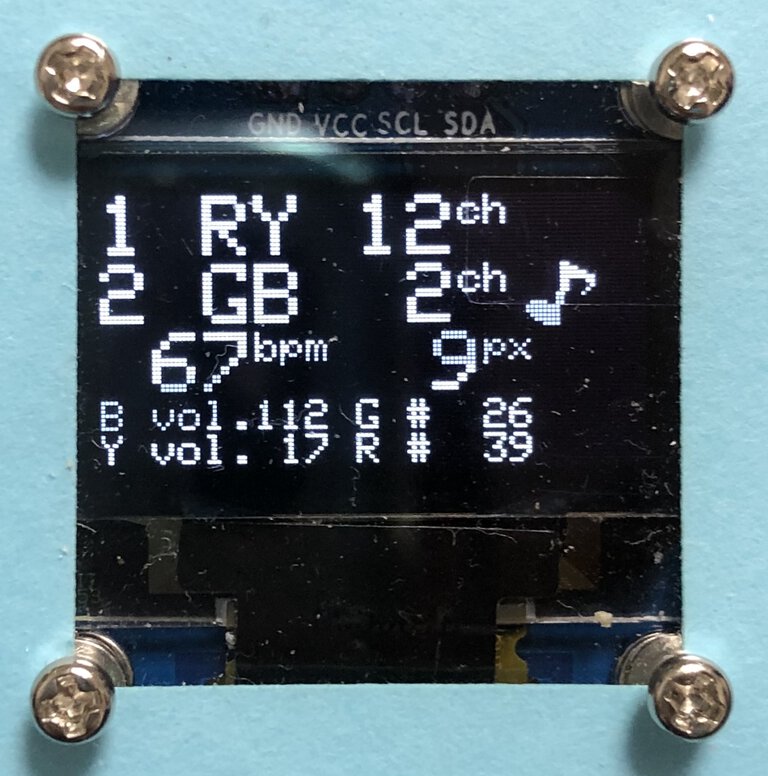

Use OLED to display values from input devices

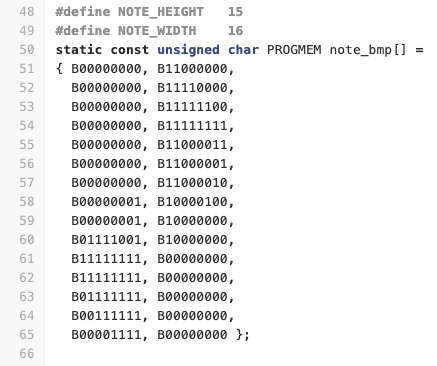

- To display the values from the potentiometers and switches and track them during a music performance, I decided to use an OLED (SSD1306), as that device can show tiny characters and graphics on 128x64 pixels.

- Alternatives: At first I tried to use NeoPixel as an output device for showing the tempo of the music (lighting the LED array sequentially to express the rhythm), on a separate output board from the in-out board. NeoPixel control is a synchronous process, and the controller needs to wait until every LED in the array has been updated. That would make it difficult for NeoPixel to express musical rhythm precisely. Also, for an unknown reason I could not control NeoPixel as I intended from the ATtiny412 board.

Use Qsynth as software synthesizer

- I use Qsynth (a GUI application for FluidSynth) because it includes a soundfont (sound source) and supports the full chain from the Python application to audio output: MIDI sequencer (Python application) > Synthesizer (FluidSynth) > Sound server (jackd) > Linux kernel (ALSA) > Hardware (sound card) > audio output.

- Alternatives: TiMidity++ plays MIDI files in real time on Raspberry Pi, but it is not actively supported by the community (maintenance only).
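At the start of the chain above, the sequencer hands the synthesizer plain MIDI channel messages. The sketch below builds the raw 3-byte note-on and note-off messages by hand to show what travels down that chain. The helper names are hypothetical, and in the actual application a library such as Mido or rtmidi would normally construct and send these messages to a port connected to Qsynth.

```python
NOTE_ON = 0x90   # MIDI status byte for note on, channel in the low 4 bits
NOTE_OFF = 0x80  # MIDI status byte for note off


def _check(channel, note, velocity):
    """Validate the ranges defined by the MIDI protocol."""
    if not 0 <= channel <= 15:
        raise ValueError("channel must be 0-15")
    if not 0 <= note <= 127:
        raise ValueError("note must be 0-127")
    if not 0 <= velocity <= 127:
        raise ValueError("velocity must be 0-127")


def note_on(channel, note, velocity):
    """Build a raw note-on message (status, note number, velocity)."""
    _check(channel, note, velocity)
    return bytes([NOTE_ON | channel, note, velocity])


def note_off(channel, note, velocity=0):
    """Build a raw note-off message for the same channel and note."""
    _check(channel, note, velocity)
    return bytes([NOTE_OFF | channel, note, velocity])


if __name__ == "__main__":
    # Middle C (note 60) on the first channel, then release it.
    print(note_on(0, 60, 100).hex())
    print(note_off(0, 60).hex())
```

The channel in the status byte is what lets one device address different instruments in the soundfont, matching the use of switches for MIDI channels described earlier.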

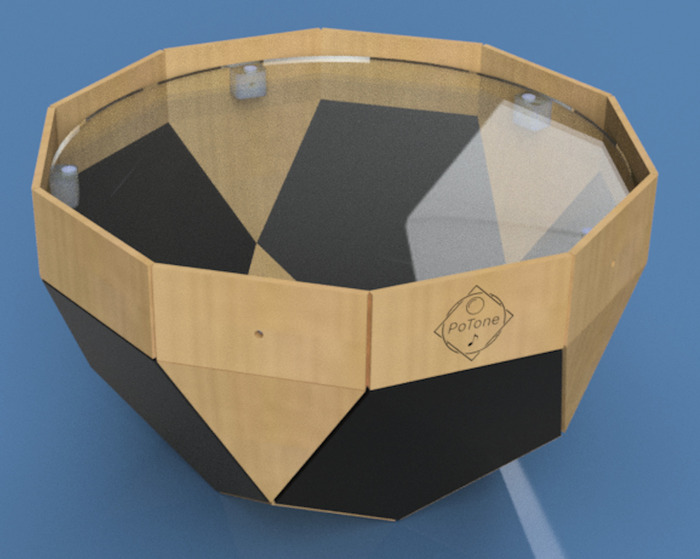

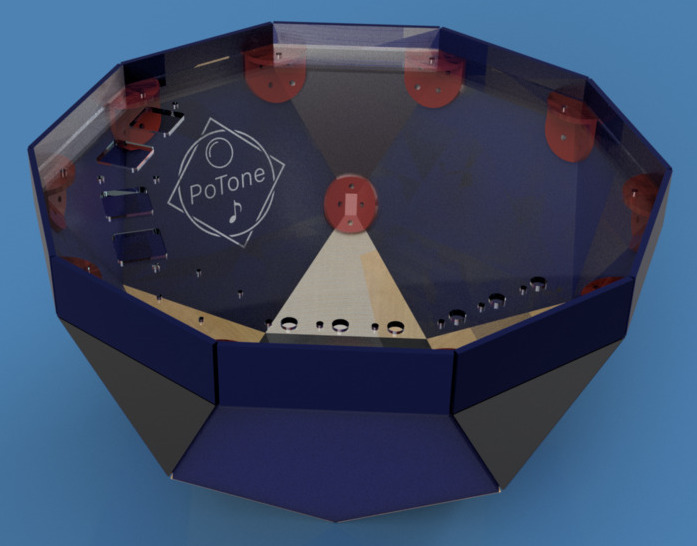

Design

Input devices

Camera module

RaspPi Camera (160° FoV)

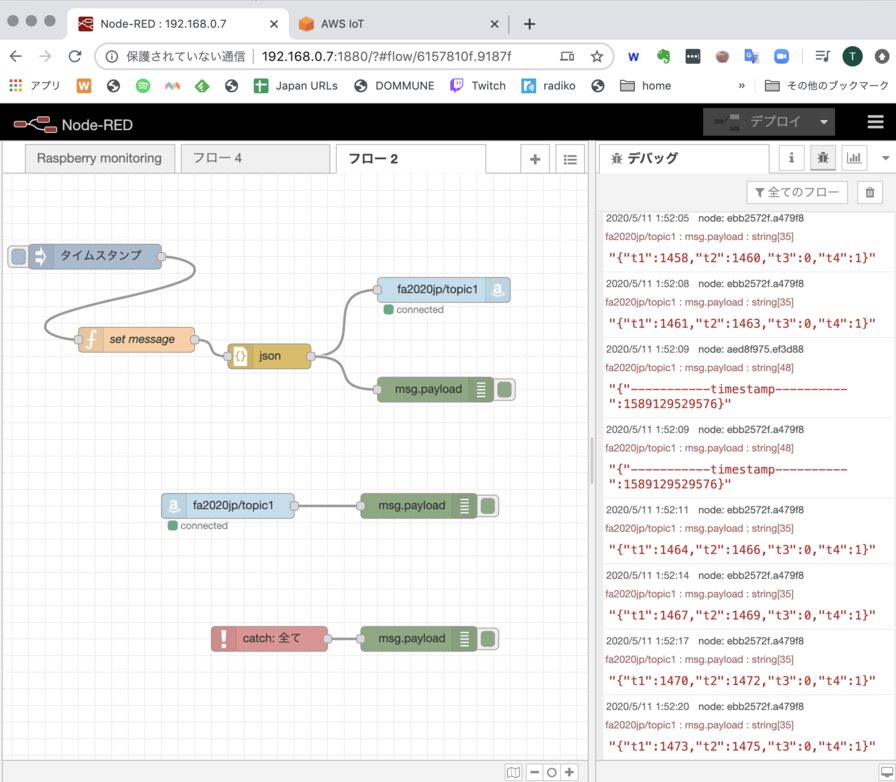

Networking / Output device

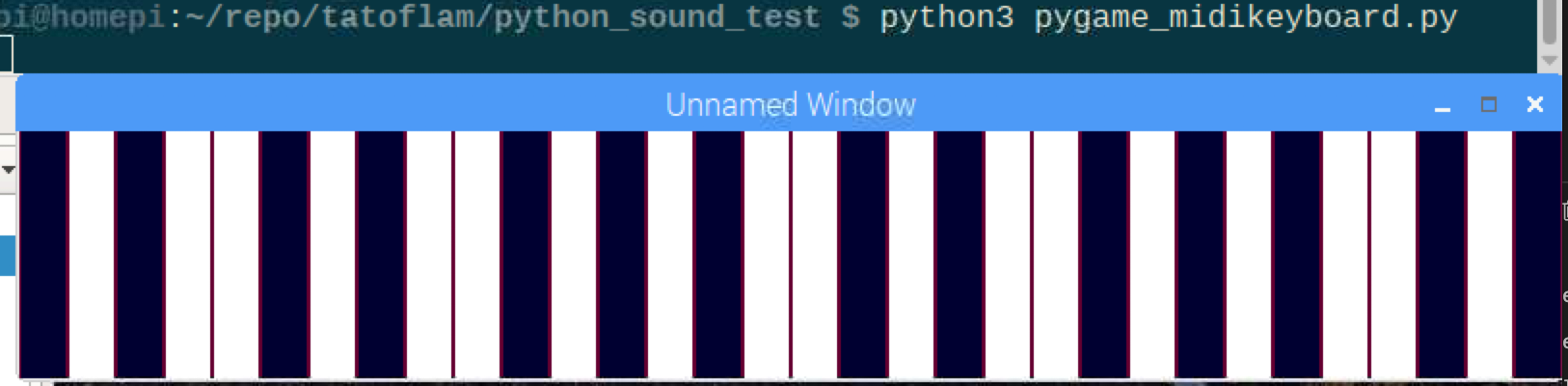

Application programming

Assembling

PoTone Making Process in 1 minute

Bill of Materials

Link to BoM

Project Development

what tasks have been completed, and what tasks remain?

As of May 2020, I reviewed the tasks I had completed and re-planned the project as spiral development, as I wrote in the project planning part of my midterm proposal. Because I did not have access to the lab until the end of May 2020 due to the COVID-19 situation, the physical work at the lab was pushed back, but I managed to continue the software work as planned.

what has worked? what hasn't?

NeoPixel did not work with the ATtiny412 output board, possibly because of an electronics problem or the size of the IC's memory. As the light-up function is not essential to the PoTone project, and the lights gave a busy impression in the experiment, I left NeoPixel out of my final project. Apart from NeoPixel, every component worked as I intended.

what questions need to be resolved?

Refer to the Q&A in the midterm proposal. The final decisions on architecture are in the "Architectural decision" part of this page.

what will happen when?

The final presentation is planned for 15 July 2020. As this project is a musical instrument played by children, I need to make not only the functional and process video and presentation, but also record a nice music performance that shows a child-friendly user experience.

what have you learned?

Through FabAcademy, I have learned the mindset of "Make do with what's around us. If I don't have it, make it quickly" and the skill set around that mindset. I would like to expand this further by making anything in the future.

Evaluation

- What does it do? -> Concept

- Who’s done what beforehand? -> Concept

- What did you design? -> Architecture, Design

- What materials and components were used? Where did they come from? How much did they cost? -> Bill of Materials

- What parts and systems were made? -> Architecture, Architectural decision, Design, Input Devices, Networking/Output Devices, Application Programming

- What processes were used? -> Design, Input Devices, Networking/Output Devices, Application Programming, Assembling

- What questions were answered? -> Architecture, Architectural Decision

- What worked? What didn’t? -> Project Development

- How was it evaluated?

Can a player make a rhythm sequence (or more sound patterns) just by putting things on the surface of the instrument? -> YES

Can it be played easily with a simple interface of potentiometers and putting things on it? -> YES

Can it be played by multiple players? -> YES

Is this a portable device? -> Partly, YES

PoTone is handy sized musical instrument. It does not require external computer to play as Attiny3216 in-out board and RaspPi are embedded in Pot. However, it requires external AC Adapter for powring it and audio speaker. In the future, I want to embed battery and audio amplification system with speaker in the Pot for the perfect portability.- What are the implications?

- Portability: Embedding powering system, audio amplification and speaker in the Pot.

- Cost: Minimize system cost - Downsize Raspberry Pi or find the other boards to make multi process system.

- Input interface: More input channels increasing Potentiometer (current system shares the same potentiometer as volume controller for different channel(switch and video). For more various expression, it's better to use separated knobs for diffrent chanels.

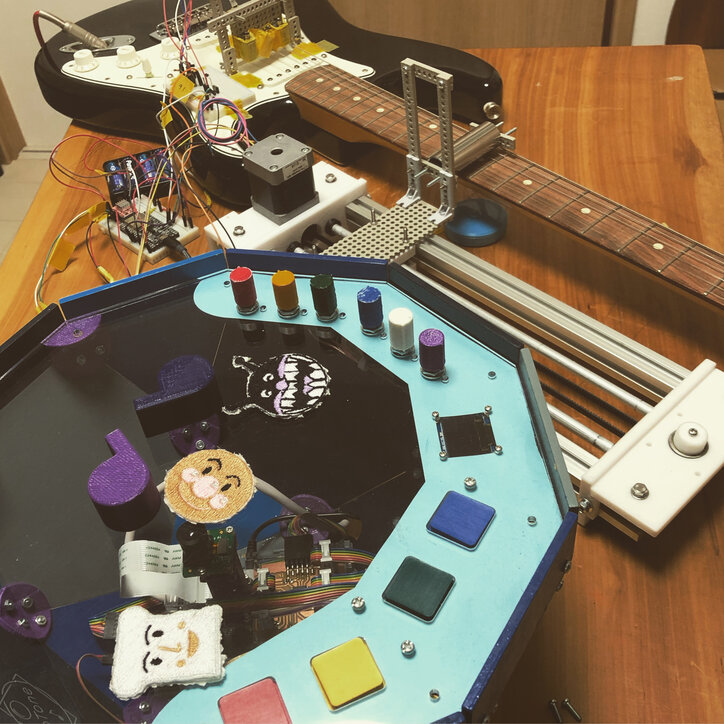

- Output interface: It's possible to connect music instrument machine to PoTone. One candidate solution is machine over MQTT system that I made at mechanical design and machine building project so that PoTone application can publish message to drive slide guitar machine instead of NodRed.

For the future project, I want to seek several perspectives to improve:

Archives

Files

Design files

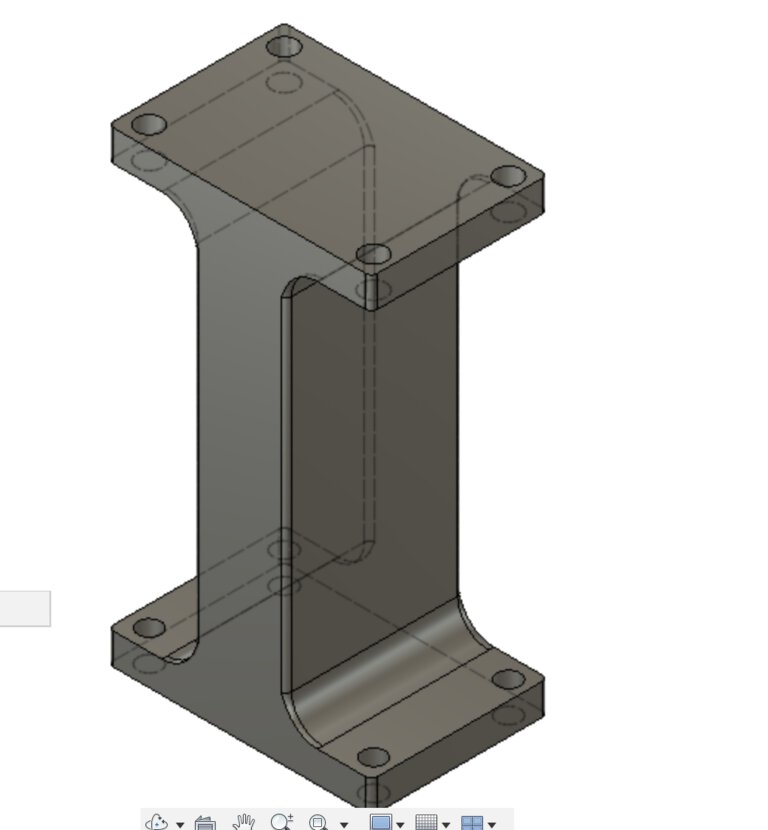

- PoTone Design

- 2D/3D design of PoTone : link to Autodesk A360, f3z

- Outline

- Joint

Fusion 360 models of the joints are included in the f3z file of the 2D/3D design of PoTone.

- Covers and parts

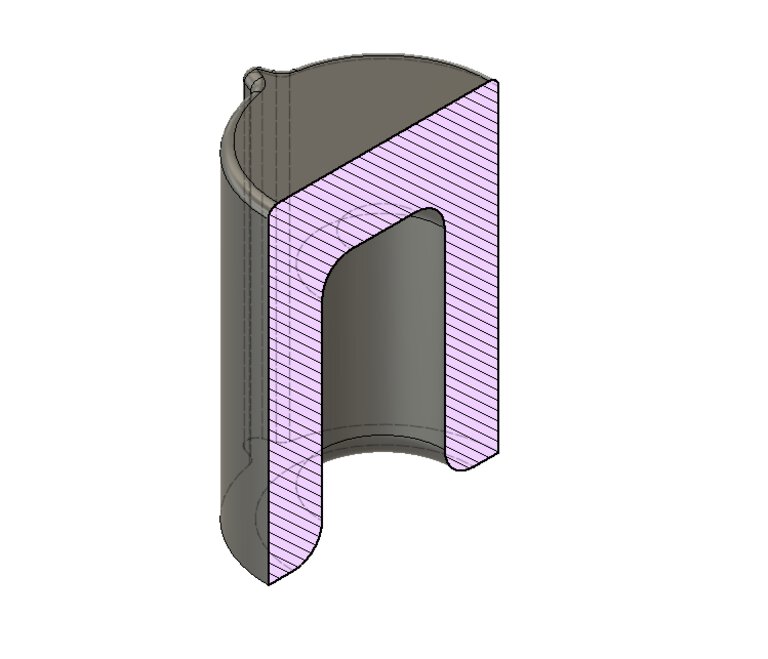

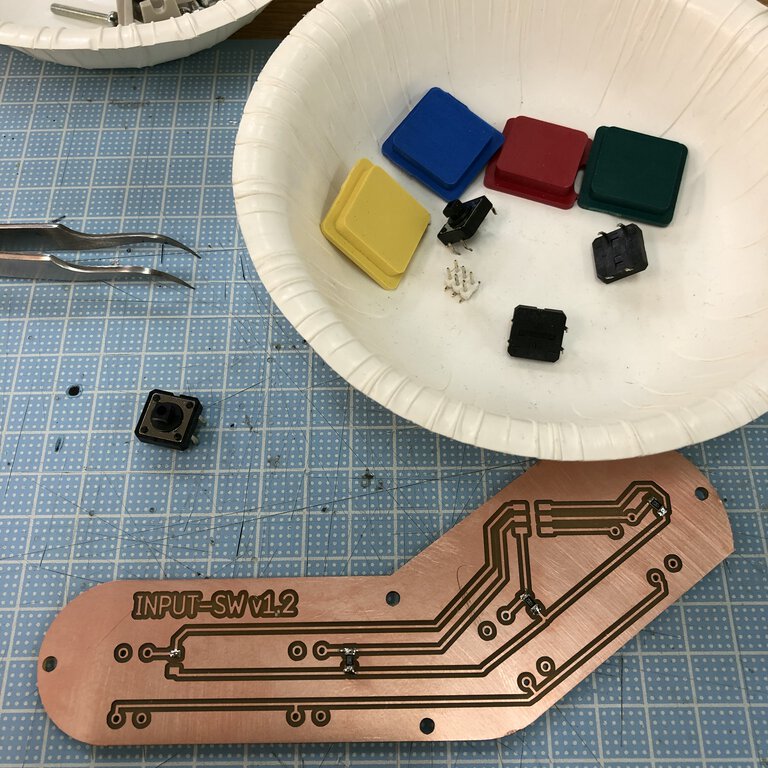

- Covers for tactile switches (molding and casting)

- 3D models for pad, cast and mold : link to Autodesk A360, f3d

- STL file for wax : wax.stl

- Tool path - an exported file from MODELA player : wax.mdt

- RaspPi case

- Speaker

- 2D/3D design : link to Autodesk A360, f3d

- SVG files : w07_speaker_svg.zip

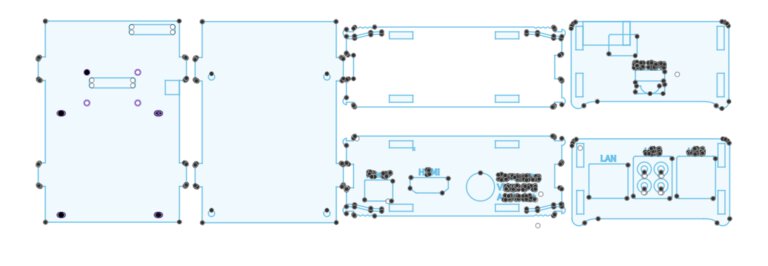

Board Design

- In-out board

- Input-switch board (the other board for switches)

Source code

- Arduino (ATTiny3216)

- Python

- potone.py - main thread

- image.py - stream video and process images

- communication.py - receive serial on concurrent worker thread

- sound.py - send MIDI on concurrent worker thread

- utility.py - generic utility code like JSON encoder/decoder

- constants.py - definition of constants

- bash (operational tool)
