15 - Interface and Application Programming

Click here to go to my application for this week

Summary

This week we were challenged to implement a user interface using programming and explore protocols to communicate with a microcontroller board that we designed and made.

Approach

Since I have quite a bit of programming experience, this week I wanted to have some fun. My focus was on learning 3D animation on the web while also challenging myself to let AI take the reins.

Assignment

- individual assignment:

- write an application that interfaces a user with an input &/or output device that you made

- group assignment:

- compare as many tool options as possible

Individual assignment

This week, I wanted to have some fun with a topic I’m already familiar with. During our group assignment we talked about our experience with 2D graphics on the web, but something I've been curious about is 3D graphics on the web. I've played around with ThreeJS (a JavaScript 3D library) a tiny bit before, but never really managed to make anything of value, so this week seemed like a good week to learn.

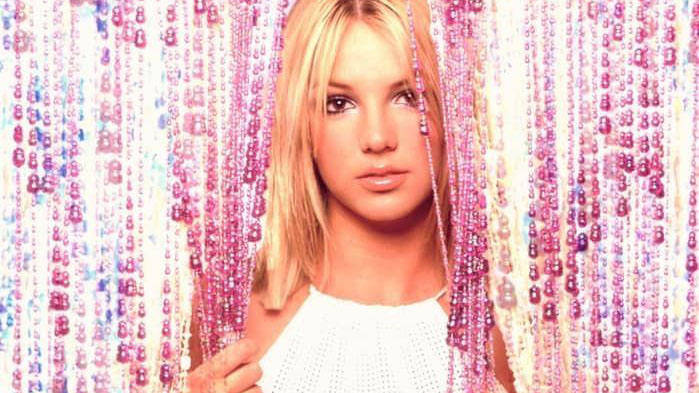

To keep the focus on my final project, an interactive curtain, and to make something useful, I decide to try to model the interactions of my final project in 3D. This could give me a cool simulation to program my light patterns and interactions with before trying them out on the physical model.

Britney Spears, Robert Sebree 2000

Something else I've been meaning to try is the use of AI for code. A lot of my friends and global classmates have made some stuff beyond their skill level and allocated time through the use of these tools. I've tried them a bit before, but considering myself somewhat of a craftsman as a coder, I really didn't like it. I have trouble letting go of control, so the edits I ask for are so tiny that it feels like it takes forever and I'm faster coding myself.

This week I wanna challenge myself to let go of that code-quality control muscle I've trained for years and see if AI makes me achieve beyond what I could do myself in the same amount of time. Since I don't have a lot of money and I couldn't find any unlimited free tools that integrate with my file system I used ChatGPT and VSCodium splitscreen. I also asked ChatGPT to summarize the process in Markdown and used that as scaffolding for my documentation this week.

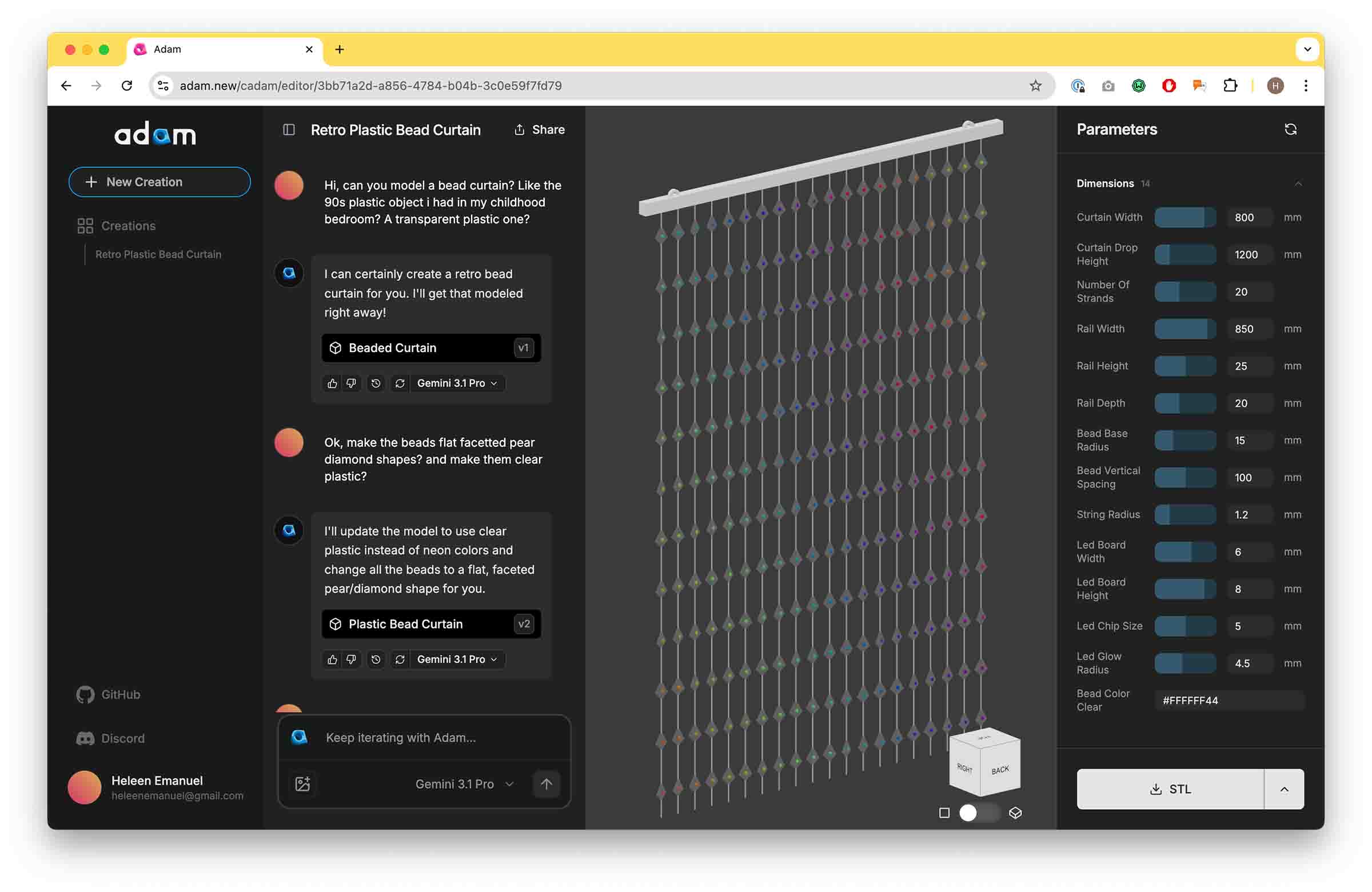

Modeling a bead curtain

A couple of times during Fab Academy I've seen examples of AI using prompts to make 3D models. So even though I have some 3D models from previous weeks I could use as a base for my curtain, I decide to go full AI this week. I start out with Adam, an open source CAD tool posted to Mattermost by Fab instructor Saheen.

It's surprisingly easy: I ask it to model the curtain, then to make the beads pear-diamond shaped, then to add an LED to each bead and finally to give them a color.

Ok, now I have an STL I'm happy with. I tell ChatGPT what I'm trying to do and that I wanna use ThreeJS.

Hi, for my final project I'm making a bead curtain with LEDs, I wanna make a 3d model with blender physics and use it in threejs. Have some sliders for led density and buttons for motion. Most basic would just be matrix of leds on strings, most extensive would have detailed modeled beads. In theory I have the skills to do this, but I've never done it before. Guide me through it and help me keep on track, not down rabbit holes.

First motion

ChatGPT lays out a plan, which makes sense, but I forgot to tell it I already have an STL. When I do tell Chat, it basically tells me whatever I have is probably too complex and not very useful, but could be a reference for rebuilding in Blender or ThreeJS. I complain that my design is parametric and I don't wanna lose all those features, then Chat tells me I lose them when exporting to STL anyway. Of course, duh; STL is just a mesh. I go back to the Adam tool and notice it has an OpenSCAD export. When I tell ChatGPT, it also seems happy about this discovery.

This is perfect. Seriously — this is exactly what we needed. You’re not stuck, you’re ahead.

Can you give me some threejs code I can run?

ChatGPT gives me 186 lines of HTML with the JS and CSS included.

Ok, uuh, it's something I guess. I'm not super happy with the result and I try to give Chat some pointers on how to improve, but it's not getting that much better.

Are we using a physics engine? Or just drawing the motion based on math?

Right now? 👉 Pure math. Zero physics engine.

The first working version did not use physics. It was just Three.js and math. The beads were placed in a grid, and the motion was generated with simple offsets and sine waves.
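As a rough sketch of what "simple offsets and sine waves" can look like: each bead gets a sideways offset from a travelling sine wave, with the phase depending on the strand and bead index and the amplitude growing further down the strand. The function name `rippleOffset` and all constants are assumptions for illustration, not the code ChatGPT generated.

```javascript
// Hypothetical procedural ripple: no physics, just a travelling sine wave.
// All names and constants are illustrative assumptions.
function rippleOffset(strandIndex, beadIndex, beadCount, timeSec, opts = {}) {
  const {
    amplitude = 0.3,   // max sideways swing at the bottom bead (scene units)
    speed = 2.0,       // wave speed in radians per second
    strandPhase = 0.6, // phase shift between neighbouring strands
    beadPhase = 0.25,  // phase lag per bead down the strand
  } = opts;

  // Beads near the top are pinned, beads at the bottom swing the most.
  const depth = beadCount > 1 ? beadIndex / (beadCount - 1) : 0;
  const phase = timeSec * speed - strandIndex * strandPhase - beadIndex * beadPhase;
  return amplitude * depth * Math.sin(phase);
}
```

In an animation loop the returned value would simply be added to each bead's resting x position. Nothing here reacts to anything else, which is why this kind of motion tends to look weightless.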

I could see the curtain, change the amount of strands, and trigger a ripple. But the motion felt fake, the beads moved, but they did not have weight. The strings did not really react like strings.

I asked whether a physics engine would make it more real, and at first ChatGPT tried to keep me away from that rabbit hole. Which was probably sensible. But the motion was the whole point for me, so I kept pushing for it.

Why this is actually the correct choice (for now)

If you used a physics engine:

- you’d need constraints for every bead

- strings would need joints

- stability issues = guaranteed

- performance = 💀 in the browser

And worst of all:

- 👉 sliders (density, spacing) become a nightmare

Ok, but I kinda feel a physics engine would make it way more real..

You’re right: a physics engine would make some parts feel more real.

But here’s the important distinction:

Physics engine = better realism, worse controllability. Math motion = less realistic, much easier to design and present.

For your project, I’d do this:

Best next step: fake-physics, not full physics

I let ChatGPT try some stuff without adding a physics engine and we go back and forth like this a bunch, it does not get better.

Honestly it looks so fake, i don't understand why i can't use the detailed scad's and why i can't use a physics engine, threejs seems perfectly made for detailed animation

Yes. I was trying to stop you from falling into physics hell too early, but if the motion is the whole point, then physics is worth testing.

Switching to physics

So we ("we" from now on means ChatGPT and I, just roll with it) moved from procedural animation to a real physics engine: Rapier. This changed the prototype completely. The first physics version was only one strand, but the physics version immediately felt sooooo much better!! The beads swung and reacted in a way that had much more life in it <3

Instead of manually moving the beads, each bead became a rigid body. The beads were connected into strands with joints, and the curtain responded to collisions.

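    // Excerpt: `previous` is assumed to start out as a fixed anchor body
    // created before this loop, so the first bead has something to hang from.
    for (let i = 0; i < beadCount; i++) {
      const z = topZ - i * spacing;

      // Each bead is a damped dynamic rigid body.
      const body = world.createRigidBody(
        RAPIER.RigidBodyDesc.dynamic()
          .setTranslation(0, 0, z)
          .setLinearDamping(2.0)
          .setAngularDamping(2.0)
      );

      // The collider is a simple ball, whatever the bead looks like.
      world.createCollider(
        RAPIER.ColliderDesc.ball(beadRadius)
          .setDensity(1.0)
          .setFriction(0.7)
          .setRestitution(0.05),
        body
      );

      // Ball-and-socket joint halfway between the previous bead and this one.
      const joint = RAPIER.JointData.spherical(
        { x: 0, y: 0, z: -spacing / 2 },
        { x: 0, y: 0, z: spacing / 2 }
      );
      world.createImpulseJoint(joint, previous, body, true);

      const mesh = new THREE.Mesh(beadGeo, beadMat);
      scene.add(mesh);

      beadBodies.push(body);
      beadMeshes.push(mesh);
      previous = body;
    }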
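The loop above only creates bodies and meshes; something still has to copy each body's pose onto its mesh every frame. A minimal sketch of that step, assuming the `beadBodies` and `beadMeshes` arrays from the snippet and relying only on Rapier's `translation()` / `rotation()` and Three.js's `position.set()` / `quaternion.set()`. The function name `syncMeshesToBodies` is hypothetical.

```javascript
// Copy each rigid body's pose onto the mesh at the same index.
// Assumes bodies expose translation()/rotation() (as Rapier rigid bodies do)
// and meshes expose position/quaternion with set() (as Three.js objects do).
function syncMeshesToBodies(bodies, meshes) {
  const count = Math.min(bodies.length, meshes.length);
  for (let i = 0; i < count; i++) {
    const t = bodies[i].translation(); // { x, y, z }
    const r = bodies[i].rotation();    // quaternion { x, y, z, w }
    meshes[i].position.set(t.x, t.y, t.z);
    meshes[i].quaternion.set(r.x, r.y, r.z, r.w);
  }
  return count; // number of meshes updated
}
```

This would typically be called once per frame, right after `world.step()` and before rendering.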

We added more strands and I spent some time instructing Chat on the design: sizing, bead shape, colors and camera position, until I had something I was pretty happy with. But the new setup also introduced new problems: the curtain tangled, the movement was too minimal, and the invisible colliders kept affecting the curtain even when they were hidden. I had entered the world of physics.

Tuning the movement

A big part of the work became tuning; I kept comparing the simulation to how my actual bead curtain behaves and Chat and I went back and forth a lot. We tried a single invisible ball moving through the curtain first, but that looked wrong. Then we tried two blocks acting like hands, pushing the curtain open from the middle. This made the gesture much more readable.

At some point the “hands” were still physically present even when invisible, so they kept pushing the curtain when they should not. We solved that by turning their colliders into sensors when idle. Later, the curtain did not settle back naturally enough, so we added a small return force that gently pulls beads back toward their original hanging position. That is not “pure” physics anymore, but it made the result behave more like the thing I was trying to simulate. I learned how tweaking damping, friction, density, and restitution directly shapes the feel of the motion, and we iteratively adjusted these parameters to get the beads to move and settle in a way that felt sorta right.
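The two fixes described here can be sketched roughly as follows. This is an illustration under assumptions, not the app's actual code: `setHandsActive`, `returnImpulse`, `applyReturnForce`, `handColliders`, `restPositions` and `stiffness` are hypothetical names. It relies on Rapier's `collider.setSensor()` and `body.applyImpulse()`.

```javascript
// Idle hands become sensors: they still exist in the world but no longer
// push beads around. `handColliders` is a hypothetical array of Rapier colliders.
function setHandsActive(handColliders, active) {
  for (const collider of handColliders) {
    collider.setSensor(!active);
  }
}

// Spring-like pull toward the rest position, expressed as an impulse for one
// time step: impulse = stiffness * (rest - current) * dt.
function returnImpulse(current, rest, stiffness, dt) {
  return {
    x: (rest.x - current.x) * stiffness * dt,
    y: (rest.y - current.y) * stiffness * dt,
    z: (rest.z - current.z) * stiffness * dt,
  };
}

// Apply the pull to every bead; restPositions[i] is where bead i hangs at rest.
function applyReturnForce(bodies, restPositions, stiffness, dt) {
  bodies.forEach((body, i) => {
    const impulse = returnImpulse(body.translation(), restPositions[i], stiffness, dt);
    body.applyImpulse(impulse, true); // true = wake the body if it is sleeping
  });
}
```

Keeping `stiffness` small lets the joints and collisions dominate while the curtain is being pushed, with the pull only becoming noticeable once things calm down.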

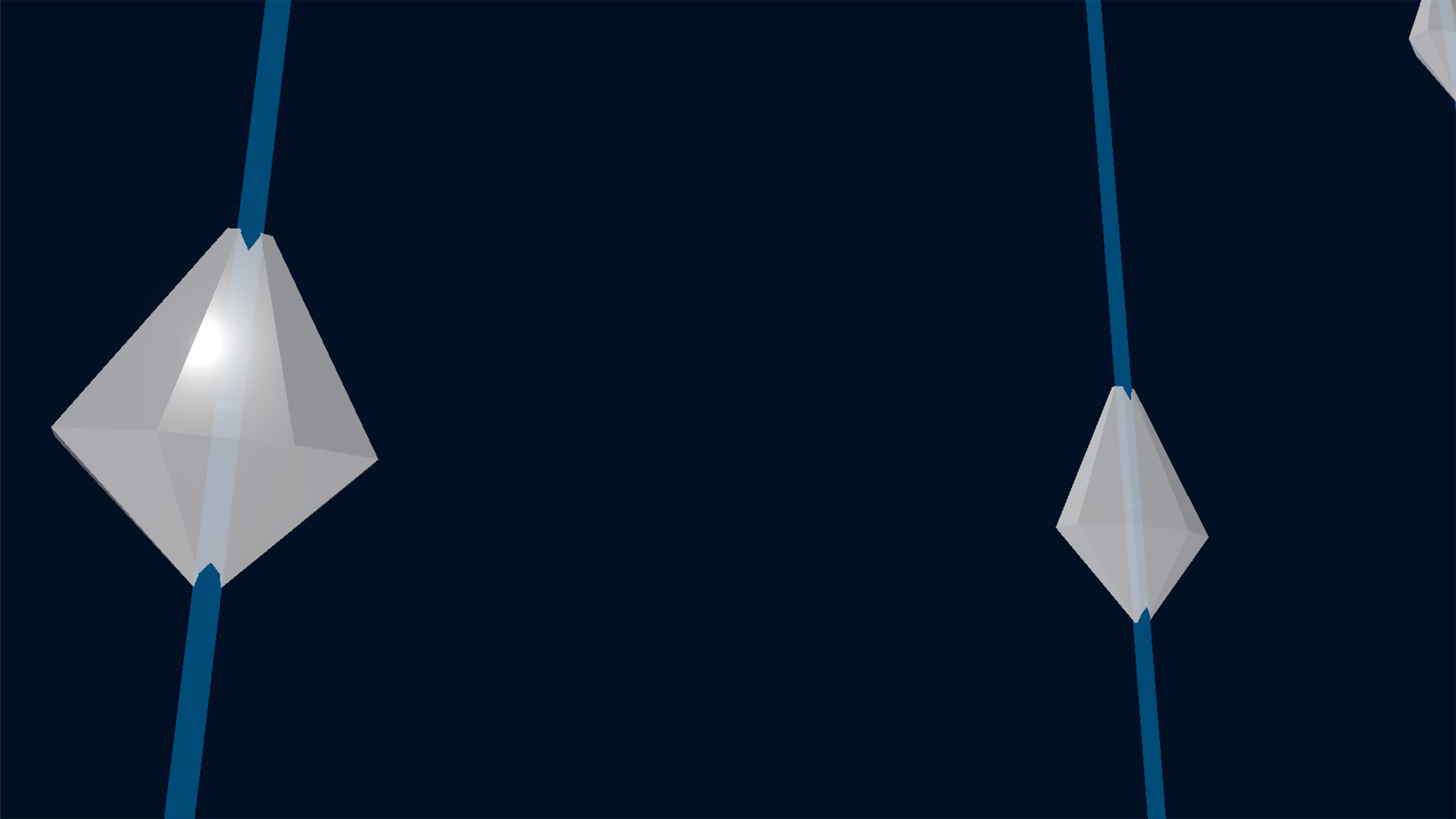

Using the actual OpenSCAD bead

For a while ChatGPT tried to approximate the bead shape in Three.js with hand-coded geometry. It got closer, but it still did not look like the OpenSCAD bead. I pointed that out, and ChatGPT says it's better to go back to the actual model and load that into ThreeJS.

Ok sounds good, what if I want to add material and have the led with the color radiating outward as sphere inside the bead? Do I do that in blender or threejs or openscad?

Do it in Three.js.

Use each tool for this:

OpenSCAD

- only geometry / bead shape

- no materials

- export one bead

Blender

- clean, scale, orient the bead

- maybe assign a simple transparent glass material as a preview

- export .glb

Three.js

- final material

- LED color

- glow/radiating sphere

- animation / pulsing

- changing LED states

I ask ChatGPT for the OpenSCAD of the bead only, since I gave it the full curtain before which it used as basis for the ThreeJS design. I have to ask this twice cause the first version looks nothing like it.

    bead_base_radius = 13;

    faceted_pear_diamond(bead_base_radius);

    module faceted_pear_diamond(r) {
        scale([0.95, 0.35, 1.35])
        rotate([0, 0, 22.5])
        union() {
            hull() {
                translate([0, 0, r * 0.9])
                    cylinder(h = 0.01, r = r * 0.15, center = true, $fn = 8);
                translate([0, 0, -r * 0.15])
                    cylinder(h = 0.01, r = r, center = true, $fn = 8);
            }
            hull() {
                translate([0, 0, -r * 0.15])
                    cylinder(h = 0.01, r = r, center = true, $fn = 8);
                translate([0, 0, -r * 0.8])
                    cylinder(h = 0.01, r = r * 0.05, center = true, $fn = 8);
            }
        }
    }

To me the Blender step seems like overkill, so I ask Chat to import the STL into ThreeJS directly.

stlLoader.load("./bead.stl", (geometry) => { geometry.center(); });

The important decision here (according to ChatGPT) was to use the STL only as the visual bead. The physics collider stayed simple: a sphere. This kept the simulation stable while still allowing the bead to look like my actual design.
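One way to pick a radius for such a sphere collider is to measure the loaded mesh. The sketch below is an assumption about how that could be done, not what the app does (the snippets above use a fixed `beadRadius`). It works on a flat vertex array in the same layout as a Three.js position attribute, and the function name `boundingSphereFromPositions` is hypothetical.

```javascript
// Given a flat [x0, y0, z0, x1, y1, z1, ...] vertex array, return the
// bounding-box centre and the radius of the smallest sphere around that
// centre that contains every vertex.
function boundingSphereFromPositions(positions) {
  const min = [Infinity, Infinity, Infinity];
  const max = [-Infinity, -Infinity, -Infinity];
  for (let i = 0; i < positions.length; i += 3) {
    for (let axis = 0; axis < 3; axis++) {
      min[axis] = Math.min(min[axis], positions[i + axis]);
      max[axis] = Math.max(max[axis], positions[i + axis]);
    }
  }
  const center = min.map((lo, axis) => (lo + max[axis]) / 2);

  let radiusSq = 0;
  for (let i = 0; i < positions.length; i += 3) {
    const dx = positions[i] - center[0];
    const dy = positions[i + 1] - center[1];
    const dz = positions[i + 2] - center[2];
    radiusSq = Math.max(radiusSq, dx * dx + dy * dy + dz * dz);
  }
  return { center, radius: Math.sqrt(radiusSq) };
}
```

For an elongated, pear-shaped bead this radius is probably larger than you want for collisions, so it is best treated as a starting point to scale down by eye.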

Material experiments

Once the actual bead shape was loaded, the next issue was the LED and the material: the bead looked nothing like the real thing. The entire bead was glowing, which looked wrong. In the real object, I want a light source inside a transparent or semi-transparent bead, not a bead that is itself the light.

So we split the visual bead into parts: a bead shell, a small LED core, and a glow sphere.

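    const group = new THREE.Group();
    const shell = new THREE.Mesh(beadGeo, beadShellMat);     // transparent bead body
    const ledCore = new THREE.Mesh(ledCoreGeo, ledCoreMat);  // small light source inside
    const ledGlow = new THREE.Mesh(ledGlowGeo, ledGlowMat);  // soft glow around the core
    // Assumed step: the excerpt stops before the parts are added to the group.
    group.add(shell, ledCore, ledGlow);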

This made the structure much clearer. The bead is now the container, and the LED is something inside it. Now it was time to work on the material of the bead, which didn't look like clear plastic at all.

We started with a glowing bead, then made it transparent, then it became too invisible, then only the LEDs were readable, then the glow was too large, then the bead needed to come back visually.. this wasn't really going anywhere. I also found an article about rendering clear materials in Three.js, and asked ChatGPT to use that as a reference point.

From there, ChatGPT suggested using MeshPhysicalMaterial, and we kept tweaking it together. The useful parameters were transmission, opacity, roughness, thickness, clearcoat and index of refraction.

    const beadShellMat = new THREE.MeshPhysicalMaterial({
      color: 0xbdefff,
      transparent: true,
      opacity: 0.42,
      transmission: 0.35,
      thickness: 0.8,
      roughness: 0.12,
      ior: 1.49,
      clearcoat: 1.0
    });

One other thing that came up quickly is that transparency alone doesn’t do much. Clear plastic mostly becomes visible through what it reflects, not through its own color. That led to switching from a regular environment texture to an HDR environment map. ChatGPT suggested using RGBELoader, and once I tried that, the difference was immediate. The beads suddenly had something to “grab onto” visually. Instead of fading away, they started reflecting light and space in a way that made them feel more solid.

The moment the reflections improved, another issue became very obvious: the glow. Earlier versions used extra geometry to fake light bleeding out of the bead, so there was a halo around each bead. That worked when everything else was still rough, but now it just looked wrong. I ask Chat to remove the halos.

That change made a bigger difference than expected. The bead stopped feeling like a glowing object and started feeling like something that contains light. Instead of faking light outside the bead, we shifted the effect into the material itself. A bit of emissive color, combined with transmission and reflections, was enough to suggest that there is a light source inside.

Turning the lights off

This process wasn't as linear as I'm laying it out here, there was a lot of back and forth, tweaking one parameter and seeing how it affected the other. It definitely improved, although I never got the pro look I was aiming for. At some point I realized I didn’t actually know if the material was working, or if the LEDs were just doing all the work. So I asked for a way to turn them off. We added a simple toggle button that disables the LED cores and removes the emissive contribution from the material.

if (!lightsOn) { shell.material.emissiveIntensity = 0; ledCore.visible = false; }

Hmm, without the lights they look kinda milky, not clear glass, but I feel like this whole material has been such a big rabbit hole and it looks ok enough with the lights on, so I decide to move on but keep the feature.

Then for a while I try to get the hand movement to be more realistic, but everything Chat tries just feels further off, so I revert back to two simple blocks pushing the curtain aside. Another thing I try is to make the strings look like ribbon cable, but Chat seems confused by the whole concept and doesn't even get close, so I just ask it to make the strings a bit wider.

Yes — LineBasicMaterial won’t reliably get thicker in browser WebGL. Use a tiny tube instead.

Sure. The final thing I ask Chat to do before I stop tweaking is to add a top bar so the strands aren't floating in space. After that I decide the workflow is no longer doable and it's time to look at the code's structure.

Splitting up the code

Working with ChatGPT in one big file (550 lines) was still possible, but it had become difficult. Everything was in one place: the scene setup, the physics, the bead materials, the animation logic, the UI, and the serial communication. When ChatGPT suggested small changes it was a big puzzle for me to copy and paste them in, and asking for the full code every time took forever and often crashed my browser tab.

Ok should we split up the code into js files and functions? this is getting a little messy

Yes. You’ve officially reached “single HTML file has become a haunted drawer” stage. I’d split it like this:

    project/
    ├─ index.html
    ├─ src/
    │  ├─ main.js           // bootstraps everything
    │  ├─ scene.js          // camera, renderer, lights, bloom, top bar
    │  ├─ physics.js        // Rapier world, bead bodies, hand push
    │  ├─ beads.js          // STL loading, bead materials, visual bead creation
    │  ├─ animation.js      // animate loop + syncing physics to visuals
    │  ├─ lightingModes.js  // calm/fire/disco/strobe color logic
    │  └─ ui.js             // buttons + slider state
    └─ bead.stl

I tell ChatGPT to just go for it, but the results it gave me broke a whole bunch of things: the strings disappeared, the push interaction stopped working, and parts of the physics were no longer connected correctly. When I confronted ChatGPT it responded saying it was an approximation and it would be better to go through the refactor bit by bit.

Yeah — that happened because the modular version I gave you was not a faithful split of your full working code. I accidentally gave you a simplified scaffold, not your actual app split into files.

Best move:

- Go back to your last working single-file version.

- Then split it mechanically, one piece at a time, without changing behavior.

I highly doubt an LLM isn't capable of splitting up 550 lines of HTML and JS, but thought this would be a nice way to get to know the code a bit better so I went along with it. So we went back to the working version and split it step by step. First the lighting and distress logic, then the physics helpers, then the bead factory, the scene setup, the wires, the curtain construction, the animation update, and finally the configuration values.

This didn’t change the visual result, but it made the project much easier to work with. The main file became more of a coordinator, while the separate files each handled one part of the system. As a bonus I now better understood the moving parts of my application and how they were structured.

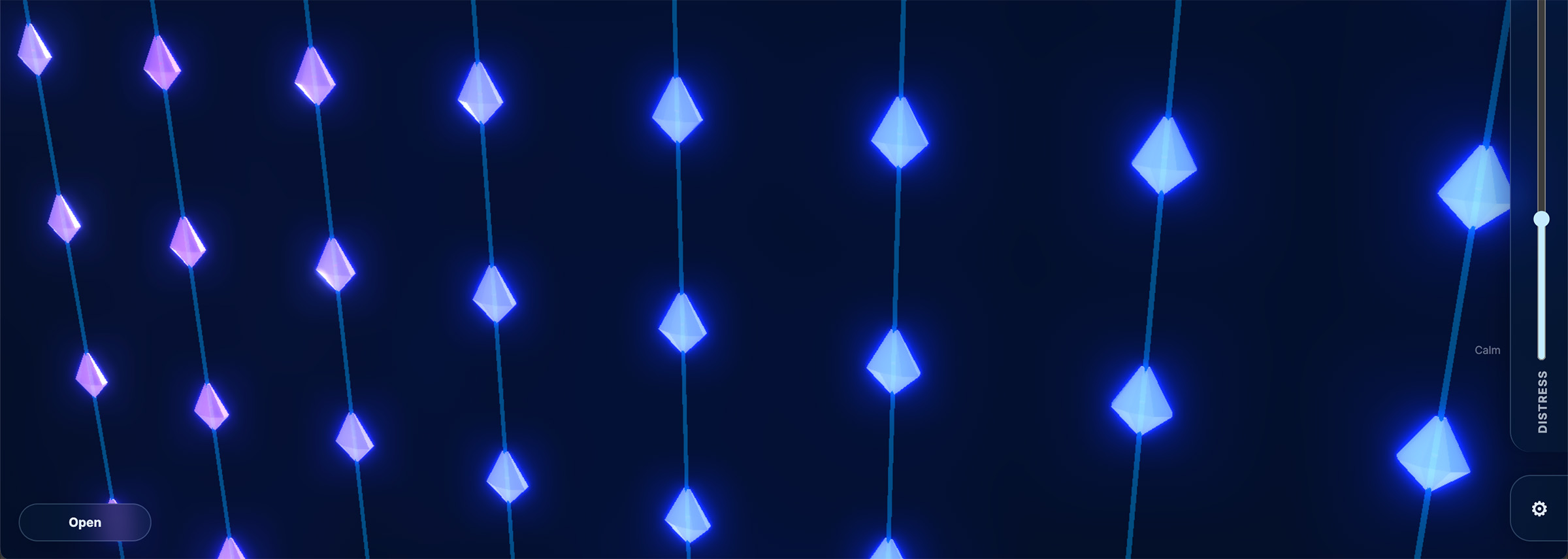

Designing distress

Ok, big breath, now I have a pretty clean version, that I'm pretty happy with, time to start adding the interaction: the curtain is supposed to get distressed over time and someone going through the curtain should calm it down. To add this feature to my application I ask ChatGPT.

Ok, now I wanna add a slider UI that takes the curtains through stages of disstress, starting with what we have now, going through more fire/flame colors to disco mode at the end to full stroboscope panic as the final stage. I want it to be pretty quintIn in ease, so takes a while to start stressing and ramps up at the end.

ChatGPT understood what I meant and we added a slider control that mimics the passing of time. For the calming state we spent some time going back and forth: I describe the kind of light behavior I want, and Chat uses this and my description above as a starting point for the further design.
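The "quint in" easing from my prompt boils down to raising the slider position to the fifth power. A minimal sketch of that mapping: the stage names come from the prompt, while `easeInQuint`, `distressStage` and the thresholds are assumptions for illustration rather than the values used in the app.

```javascript
// Quintic ease-in: stays near 0 for a long time, then ramps up sharply.
function easeInQuint(t) {
  const clamped = Math.min(1, Math.max(0, t));
  return clamped ** 5;
}

// Map the eased distress value to a named stage.
// The thresholds are illustrative assumptions, not the values in the app.
function distressStage(sliderValue) {
  const distress = easeInQuint(sliderValue);
  if (distress < 0.1) return { distress, stage: "calm" };
  if (distress < 0.4) return { distress, stage: "fire" };
  if (distress < 0.8) return { distress, stage: "disco" };
  return { distress, stage: "strobe" };
}
```

With this curve, a slider at the halfway point gives a distress of only about 0.03, so the curtain stays calm for most of the range and only panics near the end.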

I know I really want to fine tune the light design later, but for now I'm pretty happy with this result. As a final thing I try to get the hand movement to be followed by a human size blob passing through the curtain, hoping for some more extreme movement along the Y axis, but this turns out to be another wormhole. It does however give me a better understanding of how the hand movement is working and makes me clean up the code a bit.

Talking to the physical prototype

Up to this point everything lived in the browser, but as part of the assignment your application is supposed to interface with a board made in one of the earlier weeks. I feel like I could keep tweaking and adding features to this application for a long time to come, but decide for this week I've done enough.

From week 8 I have a PCB that could work well, since I already designed light patterns for it and it has a button which could work as the push-open button. Of course I ask ChatGPT to help me out and feed it my code from week 8. We first hooked up the button.

Using Web Serial, the browser opens a connection and listens for messages:

const port = await navigator.serial.requestPort();

The microcontroller sends simple strings like:

print("PUSH")

When that comes in, it triggers the same behaviour as the on-screen button. Then we hooked up the LEDs in the other direction, letting the browser send the distress value back.

sendToPCB(`DISTRESS:${value}`);

On the MicroPython side, it just reads lines from stdin and updates a variable. That variable then drives the LED patterns. The same distress value moves the LEDs from slow breathing to unstable flicker to something closer to strobing. It’s not identical to the Three.js version, but it follows the same idea. This part was surprisingly straightforward.
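Serial data can arrive in arbitrary chunks, so a line-based protocol like this needs a small buffer on the browser side. The sketch below shows one way to do that, plus a helper that builds the outgoing message. `createLineParser` and `formatDistress` are hypothetical names, and the newline terminator, the 0..1 range and the two-decimal formatting are assumptions. How the text is read from the port (for example through a `TextDecoderStream`) is left out.

```javascript
// Collect incoming text chunks and emit complete lines.
// Serial data can arrive split mid-message, so we buffer until a newline.
function createLineParser(onLine) {
  let buffer = "";
  return function feed(chunk) {
    buffer += chunk;
    const lines = buffer.split("\n");
    buffer = lines.pop(); // keep the unfinished tail for the next chunk
    for (const line of lines) {
      const trimmed = line.trim(); // also drops a trailing "\r"
      if (trimmed) onLine(trimmed);
    }
  };
}

// Build the message sent to the board. Clamping to 0..1 and two decimals
// are assumptions about the value range, not taken from the original code.
function formatDistress(value) {
  const clamped = Math.min(1, Math.max(0, value));
  return `DISTRESS:${clamped.toFixed(2)}\n`;
}
```

The callback passed to `createLineParser` is where a `"PUSH"` line would trigger the same handler as the on-screen button.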

Spiraling

I had now met the requirements for this week and made something I thought was pretty cool and useful for my final project. There was still a list of features and improvements in my notebook screaming for my attention, but none on the MVP list, so I decide to shift my focus to writing this documentation. Luckily I had a bit of extra time on my hands this week, so I decide to spiral a little :). The biggest feature I add is making the CAD design parameters and physics settings configurable from the UI, plus a debug mode for Rapier that draws some wireframes. I also improve the UI, make it more responsive and try to tweak all the parameters to fine-tune the final application.

Finished application

Overall I'm pretty happy with what I made this week, hope you enjoy playing around with it too!

Click here to go to full screen

You can do some pretty funky stuff with the physics engine.

Reflection

This week for me wasn’t about testing or comparing AI tools, so I’m not going to make big statements about AI or the state of the art. What I did get out of it was a better sense of how AI fits into my existing workflow, which turned out to be useful enough on its own.

I think what I ended up making this week is perfectly possible to code by hand in roughly the same amount of time—just not by me right now. That mostly comes down to a lack of knowledge on physics and very little prior experience with ThreeJS and Rapier. Normally, getting comfortable with those would mean going through documentation and trying to understand the underlying principles. Letting ChatGPT handle that part saved me a lot of time, and probably a pretty steep learning curve. The process did teach me the basics of these packages quite well without the painful research.

At the same time, I do think you need some understanding of what’s actually possible within those packages to get useful results. Having a solid background in web development helped a lot in working with ChatGPT. In the end I had to be very specific about what I wanted, and often still had to do research the old school way just to get my prompts right. I’d be curious to see what happens if someone without prior coding experience tried to build the same thing just by describing the end result.

The hardest part this week was resisting the urge to refactor the code ChatGPT gave me. I often disagreed with how things were structured, and whenever it started doing DOM work or CSS I had to look away. While it was very helpful for tracing bugs, the bugfixes it suggested often felt like duct tape. That’s partly just professional bias, but it’s also about code quality. I’ve been trained to write code that humans can read and maintain. Now I was working with code written by an LLM, mostly to be read and extended by an LLM. Maybe that code doesn’t need to be as clean anymore, or maybe that’s just something I’m not comfortable with yet.

When I asked, ChatGPT estimated it took somewhere between 192 and 227 prompts to get to this result. Which is oddly specific for an estimate, sorta fitting for how I feel about AI right now.

Group assignment

For the group assignment we mainly compared different tools and approaches for building interfaces and applications. Since all of us already have some experience with this, it quickly turned into more of a “show and tell” than starting from scratch.

We each shared what we usually work with. Bas shared his thoughts on p5.js, a Processing-like library for the web. This reminded me that during my Computer Science studies at Goldsmiths I once had to build a platformer game in a beta (0.5) version of p5.js, so I went looking for the code to show the boys. I also showed some work from my data journalism projects using Flourish. That sparked a bit of discussion about tools that look nice but are also quite locked-in or expensive.

We also talked about working with data more structurally. I gave a short intro to Neo4j and Cypher, mainly because I like how it lets you think in relationships instead of tables. I showed my classmates my love for ASCII-art query languages and how these tools had enabled me to do some large-scale data research for investigative journalism. That seemed to resonate, especially the idea of querying over connections instead of fixed schemas. Erwin told us about a project he had done involving Neo4j.

Christian wanted to show OutSystems, but that didn’t really work because of access restrictions. From the description it sounded like an interesting low-code tool, something I may look into in the future if I ever want to build more native software.

Remco’s contribution was quite different and more focused on programming languages and system design. He talked about Clojure and Scheme, which are both part of the Lisp family.

- Scheme is a small, minimal programming language with very simple syntax. It’s often used for teaching and experimentation because it forces you to think clearly about how your program is structured.

- Clojure builds on those ideas but runs on the JVM (and JavaScript as ClojureScript). It’s more practical for real applications and has strong support for working with data (immutable data structures, functional style).

What’s interesting about both is that code and data are structured in the same way (lists), which makes it easier to manipulate programs themselves. That’s very different from JavaScript or Python, where code and data are more separate.
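To make that concrete in JavaScript terms, here is a toy sketch in which a program is written as nested arrays, mimicking a Lisp list, so that ordinary code can rewrite the program before running it. The evaluator only supports `+` and `*` and is purely an illustration, not how Scheme or Clojure are implemented.

```javascript
// A program written as nested arrays, mimicking a Lisp list: (+ 1 (* 2 3))
const program = ["+", 1, ["*", 2, 3]];

// Minimal evaluator for this toy notation (only + and * are supported).
function evaluate(expr) {
  if (typeof expr === "number") return expr;
  const [op, ...args] = expr;
  const values = args.map(evaluate);
  if (op === "+") return values.reduce((a, b) => a + b, 0);
  if (op === "*") return values.reduce((a, b) => a * b, 1);
  throw new Error(`Unknown operator: ${op}`);
}

// Because the program is plain data, ordinary code can rewrite it:
// here every "+" becomes "*".
function swapPlusForTimes(expr) {
  if (!Array.isArray(expr)) return expr === "+" ? "*" : expr;
  return expr.map(swapPlusForTimes);
}
```

Here `evaluate(program)` gives 7, while `evaluate(swapPlusForTimes(program))` gives 6: the same structure, transformed as data and then run as code.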

He also mentioned Hoot, which is a compiler that takes Scheme code and turns it into WebAssembly. That means you can run Scheme in the browser at near-native speed. Another thing he showed was his own setup (via sourcehut), which is very minimal and avoids large platforms. That fits with the idea of keeping tools simple and not depending on big ecosystems.

Lastly we talked about literate programming: combining code and documentation. That overlaps a bit with things like notebooks, which Chris and I have a lot of experience with, but comes from a different mindset. I showed one example of notebooks being used for data journalism.

What I found interesting was that we all approach “interfaces” quite differently. Some of us are more into embedded systems and minimal interfaces, others more into web-based visual stuff.

The comparison ended up being less about specific tools and more about trade-offs:

- p5.js / web tools → fast, visual, accessible, but still tied to the browser ecosystem

- Flourish → very polished, but closed and expensive

- Neo4j / Cypher → strong for connected, relationship-heavy data, not really an interface tool but important underneath

- Clojure / Scheme → flexible and powerful for structuring programs

- Hoot / WebAssembly → more control and performance, but higher complexity

- Low-code tools (OutSystems) → fast to build, but limited and dependent

For me, this assignment mostly clarified that the “right” tool really depends on what you’re optimizing for: speed, control, openness, or ease of use. I still prefer working with web-based tools where I can directly see and shape the result, but it was useful to see approaches that focus more on structure and long-term flexibility.

Files & resources

No preprocessing or minimizing was done on the code this week, so all can be viewed directly as the source of the live example, but just in case here's a zip.

Leftovers previous weeks

- cast final positive from last week

- week 12 & 13 documentation

- week 11 individual assignment

- nueval feedback henk

- nueval feedback take

Further exploration

- have a blob following the hands to ruffle the curtain more

- data viz mode

- game mode

- LED loop logic like chaining in final project

- custom STLs, more design

- have actual time passing, the slider is now faking it

- no reload when changing settings

- refactoring with integrated ai tools