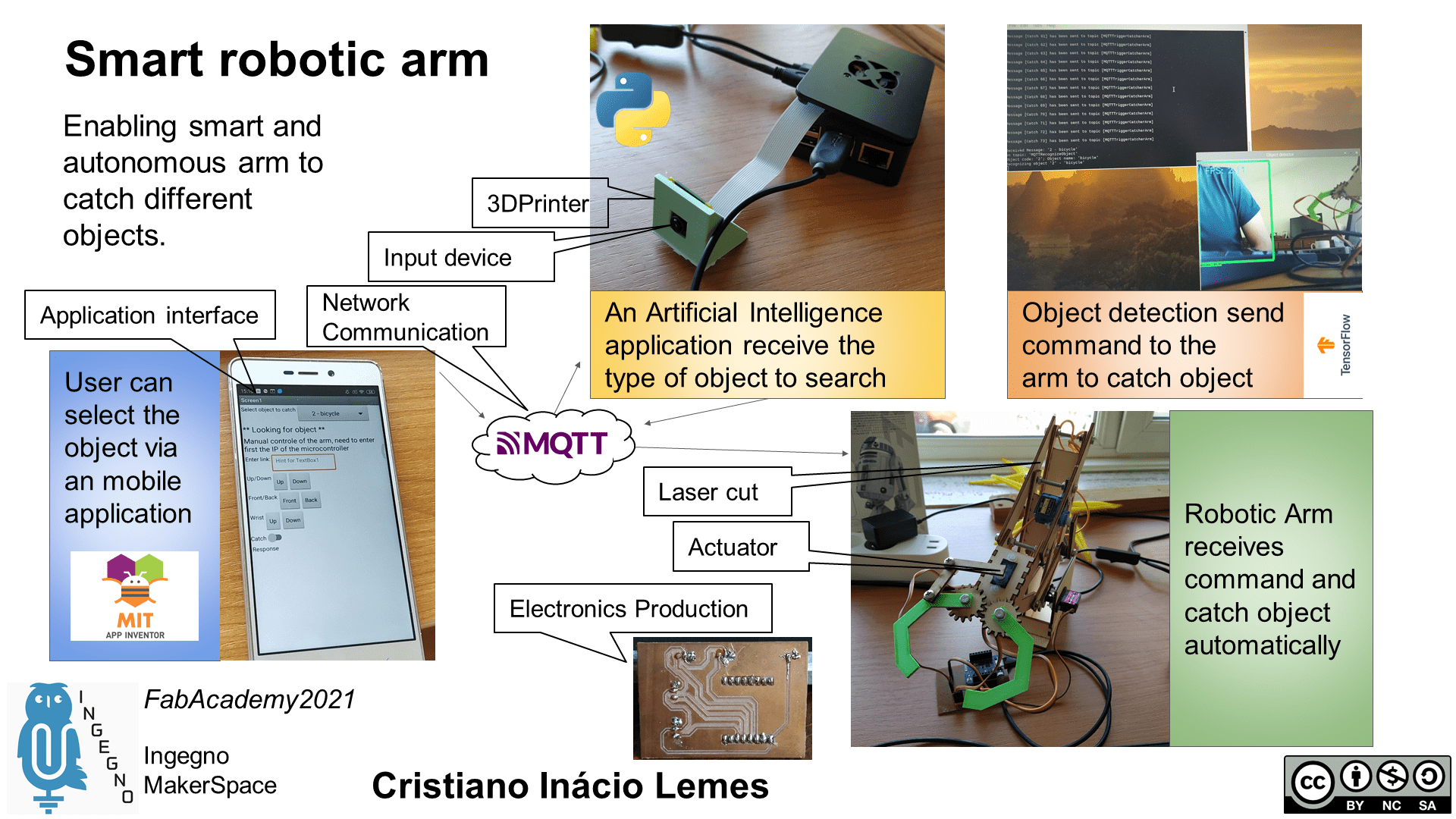

Autonomous and Intelligent Robotic Arm¶

The idea of the final project for the FabZero course is to design a robotic arm that can recognize an object and pick it up automatically. The arm will drop the object off at a place to be defined later (it can be a fixed place or a variable one set by the user). This project differs from existing ones because it will integrate a camera into the robotic arm and use artificial intelligence to recognize the object to pick up. Attaching the arm to an autonomous vehicle will allow it to move around the environment to catch the desired object and drop it off at the desired location. Such an arm could be used in health care, for example to locate and bring a medicine to a patient without the patient having to move and look for it.

This project is split into five main parts. Some of them have subtasks that make the project easier to design.

- Design the robotic arm.
  - Start with an arm with two points of movement.
  - Add as many points of movement as necessary.
- Design a mobile app.
- Design the image recognition.
- Design the mobile vehicle.
- Integrate all parts together.

Steps for the final project¶

Design the robotic arm¶

This step intends to design a prototype of the robotic arm that will be used in the next steps. It is an essential step, since the next steps rely on the quality of the movement of the arm. The circuits to move the arm should be designed in this step as well.

Design a mobile app¶

Although this is a separate step, it is closely connected to the previous one. The mobile application will send the commands that move the arm, which lets us validate the robotic arm prototype. The mobile application will not be part of the final solution, since the project aims at an automatic robotic arm.

Design the image recognition¶

Image recognition plays an important role in this project. The camera must be attached to the arm and used to capture images of the environment. A state-of-the-art object detection algorithm will process the images and identify the desired object.

Design the mobile vehicle¶

The autonomous vehicle will move the arm around the environment while it captures pictures and identifies the objects to catch. The mobile application might be used here as well, to verify that the vehicle works as expected.

Integrate all parts together¶

Once all the steps are done, it is time to assemble the robotic arm onto the vehicle and start testing in a real scenario. It seems a lot to do in one semester, but the idea is to develop as much as possible while applying the concepts learned in the course.

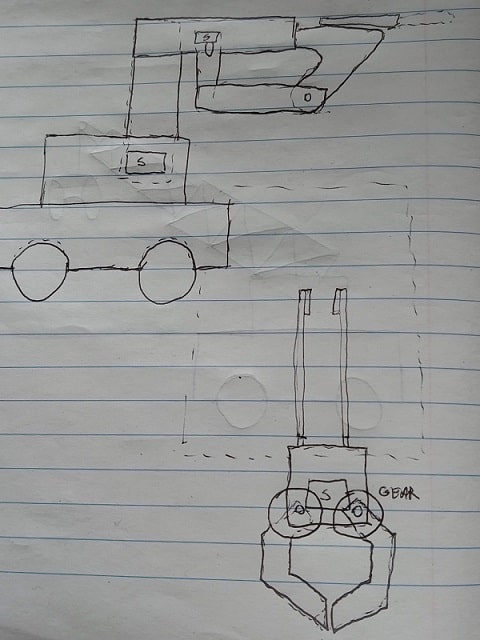

Drawing arm¶

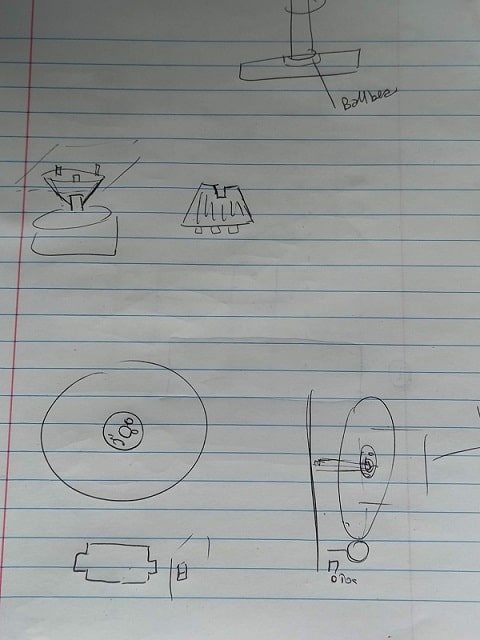

As a first step, I made some drawings of how I imagined the robotic arm would look. From these drawings, I designed the first prototype of the arm.

First prototype¶

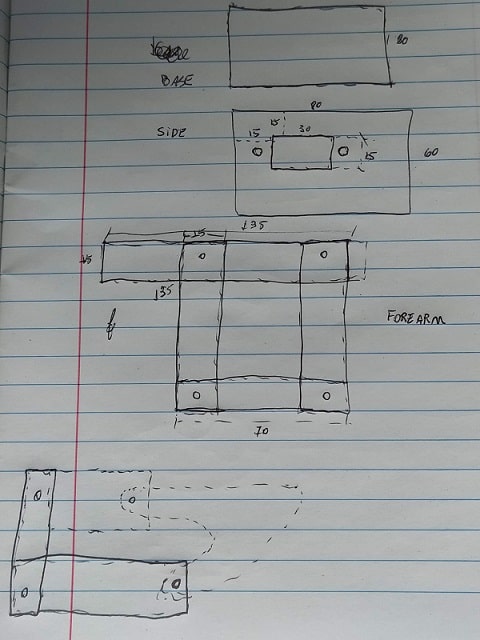

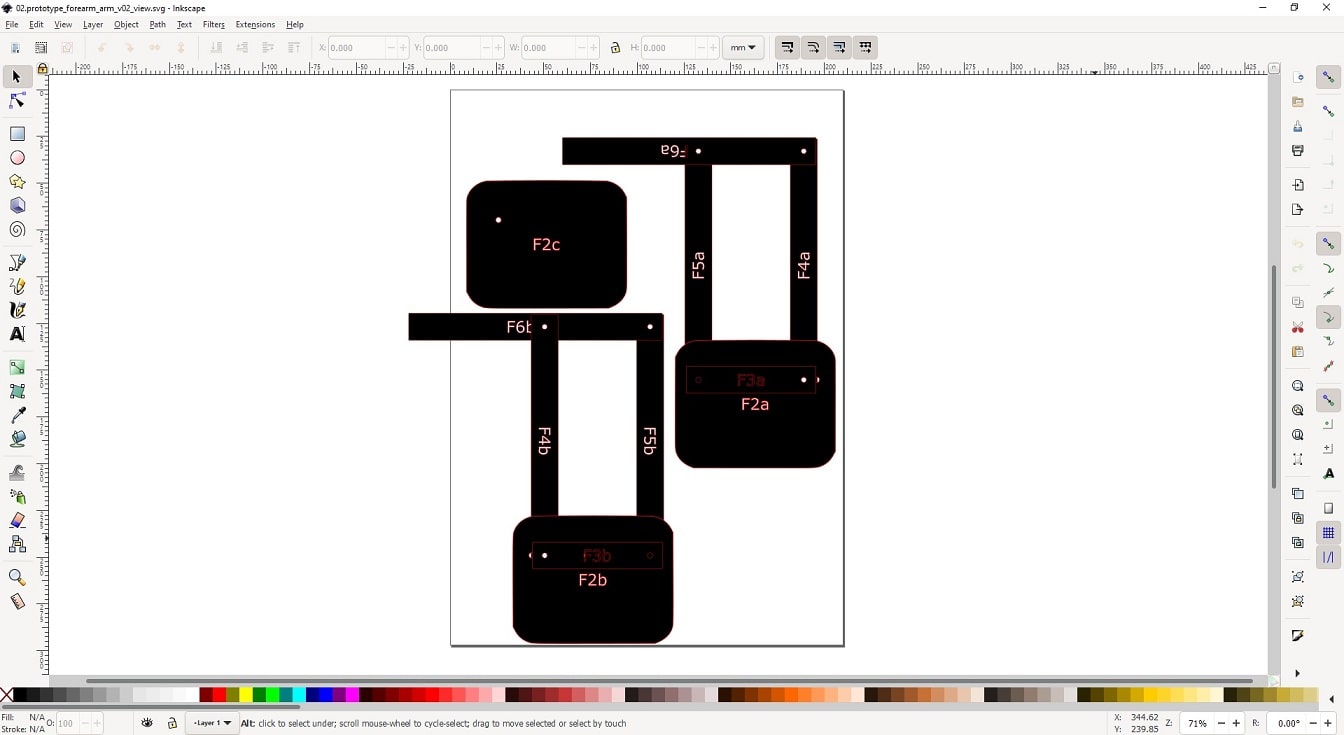

2D Design¶

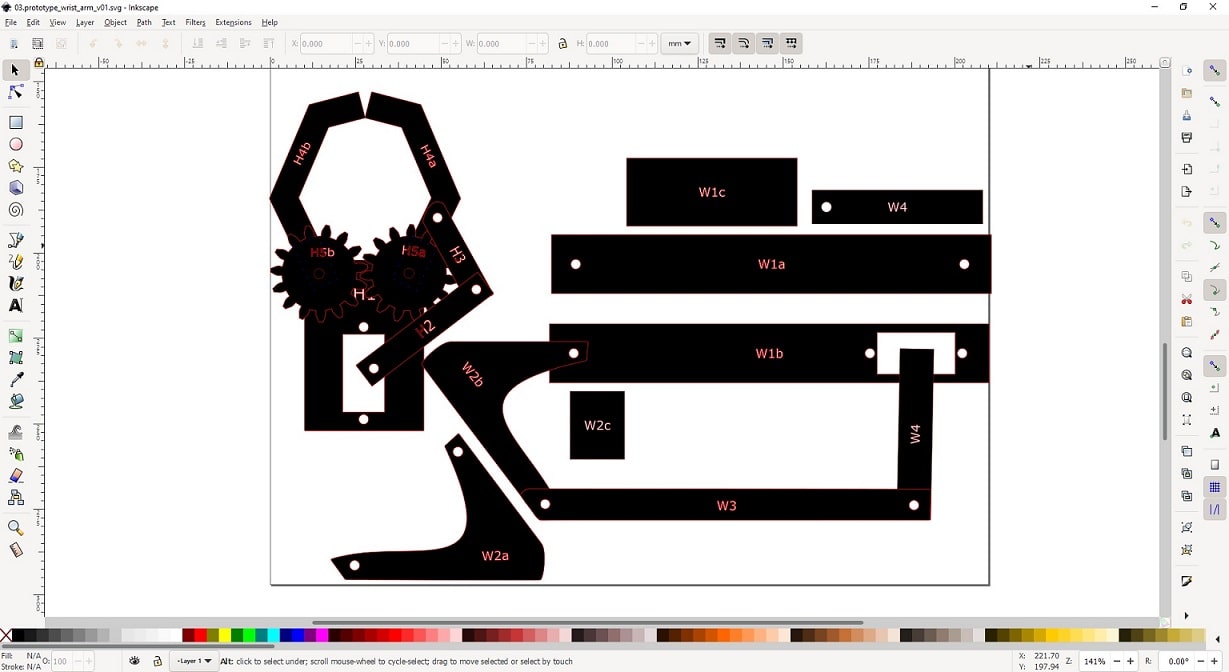

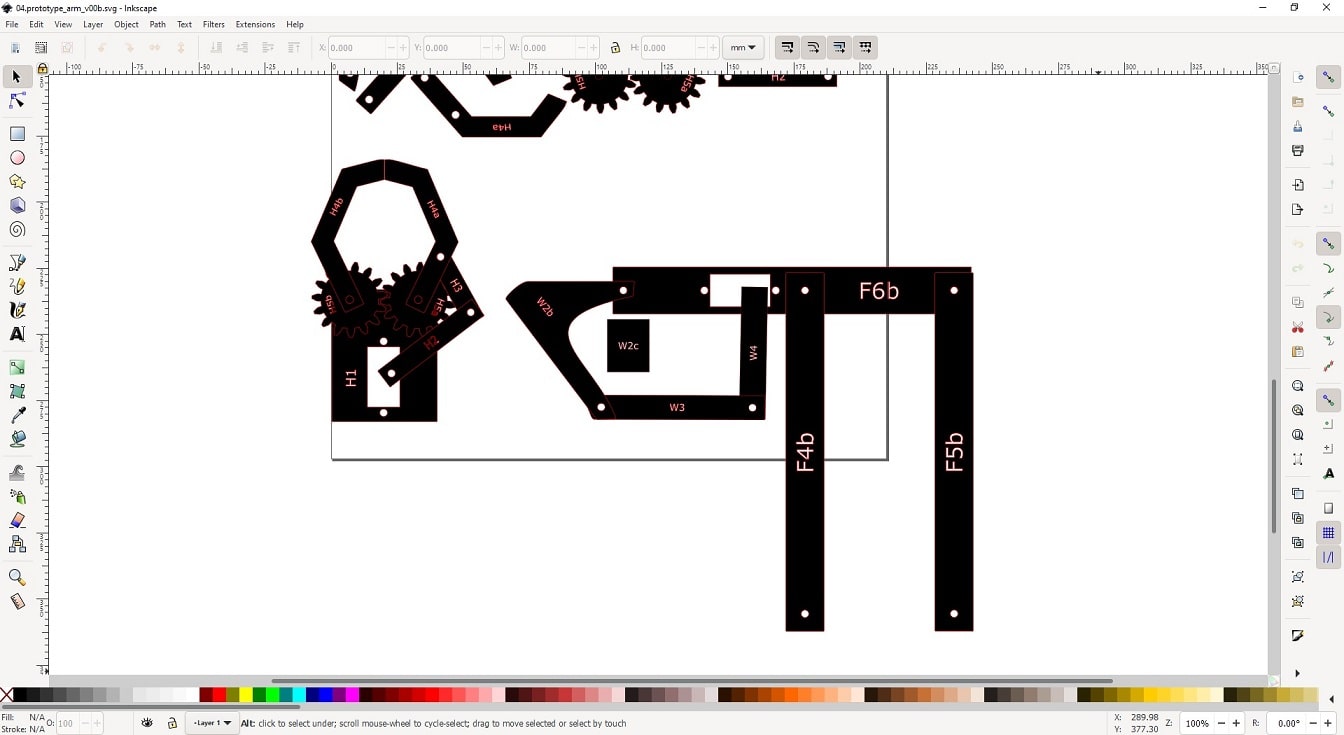

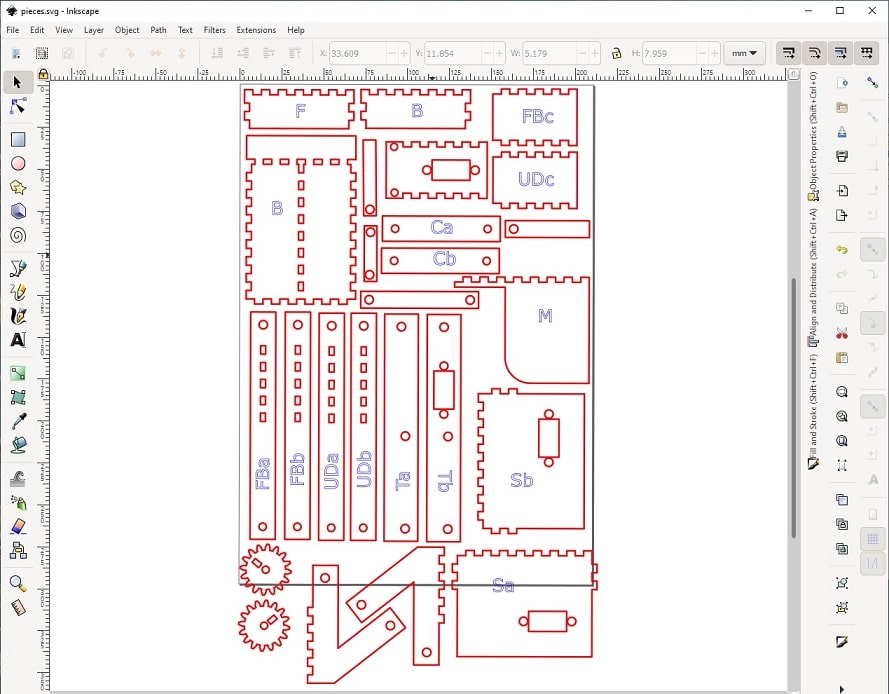

For the first design I had no experience with 3D modelling software, so I designed it entirely in Inkscape. I based this first prototype on the drawings and adjusted the width of the pieces over time. I did not use any technique to duplicate the common pieces, so it was not the best prototype. However, it allowed me to check whether the arm would work as drawn. Reaching the result shown in the images took some time, since I had to redesign some pieces whose size or shape was not quite right.

Download the ZIP file containing the files generated from this design.

Laser cut¶

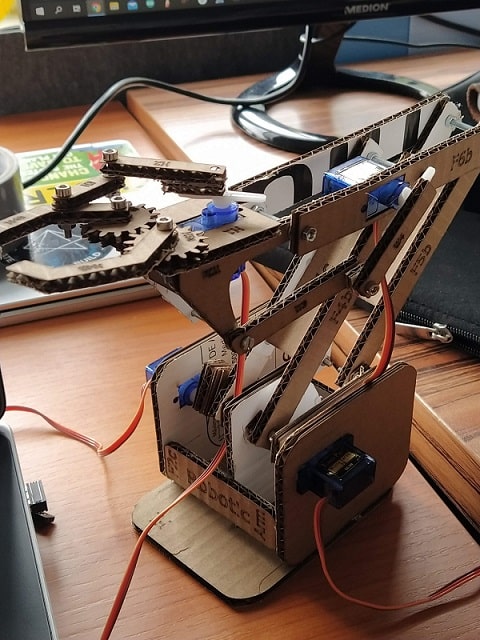

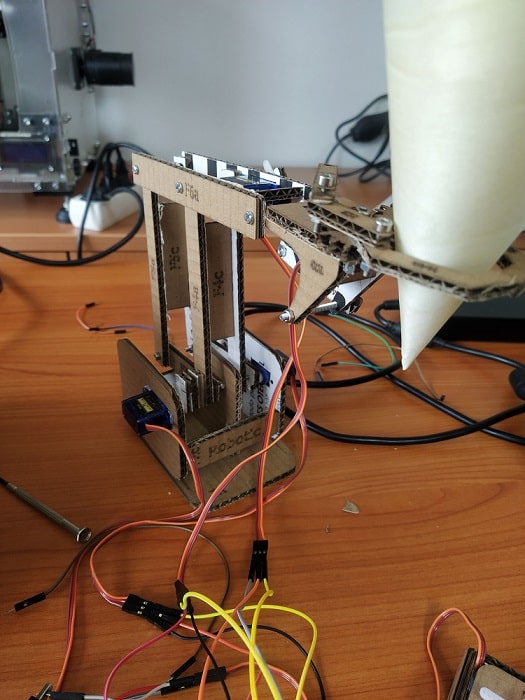

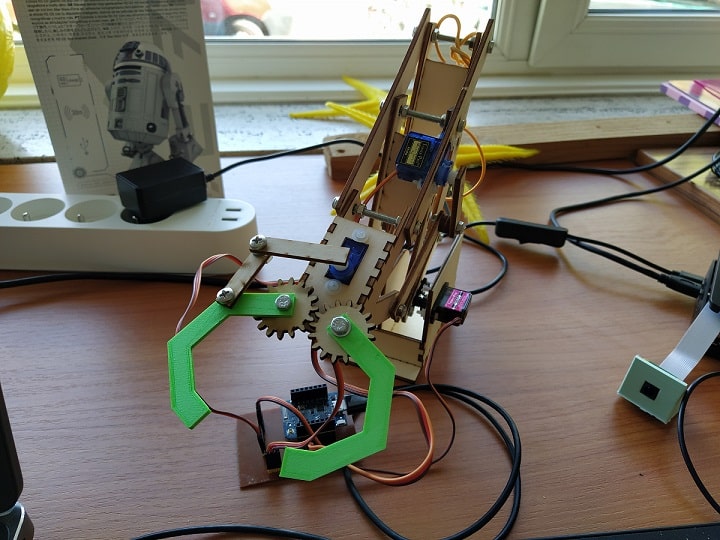

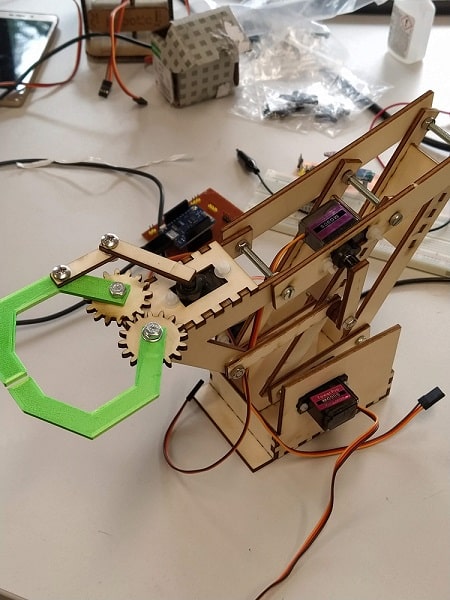

From the previous design I generated the cutting lines for the laser cutter to make the first prototype of the arm. I used cardboard for this prototype to make sure the arm works before using a better, more expensive material. I went through a couple of iterations of laser cutting and redesigning the arm, which is normal when prototyping. The resulting prototype is shown in the images. I had to make a couple of manual adjustments, because some dimensions were wrong and not easy to update in the design. I decided not to spend more time on this part, because I only needed to see whether the arm moves well enough to catch an object. The idea is to redesign the arm later using 3D modelling software.

Assembling¶

I used the following components to build this prototype:

- four SG90 servos;
- one microcontroller with an ESP8266 (WiFi) board. I used an Arduino Uno with the WiFi board.
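Each SG90 is driven by a 50 Hz PWM signal whose pulse width sets the angle. As a rough illustration of what happens when the firmware calls the Arduino Servo library's write(angle), the sketch below maps 0 to 180 degrees linearly onto a pulse range. The 544 and 2400 µs limits are an assumption based on the defaults of the AVR Arduino Servo library; other cores and individual servos may differ, so they are parameters.

```python
def angle_to_pulse_us(angle, min_us=544, max_us=2400):
    """Map a servo angle in degrees to a pulse width in microseconds.

    The 544/2400 defaults are assumed from the AVR Arduino Servo library;
    check the limits of your own servos and board core.
    """
    angle = max(0, min(180, angle))  # clamp out-of-range angles to 0..180
    # Integer arithmetic, like Arduino's map() function.
    return min_us + (max_us - min_us) * angle // 180


def pulse_to_duty(pulse_us, period_us=20_000):
    """Fraction of a 50 Hz (20 ms) period during which the signal is high."""
    return pulse_us / period_us


for a in (0, 90, 180):
    p = angle_to_pulse_us(a)
    print(a, p, round(pulse_to_duty(p), 4))
```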

Design Mobile app to move prototype¶

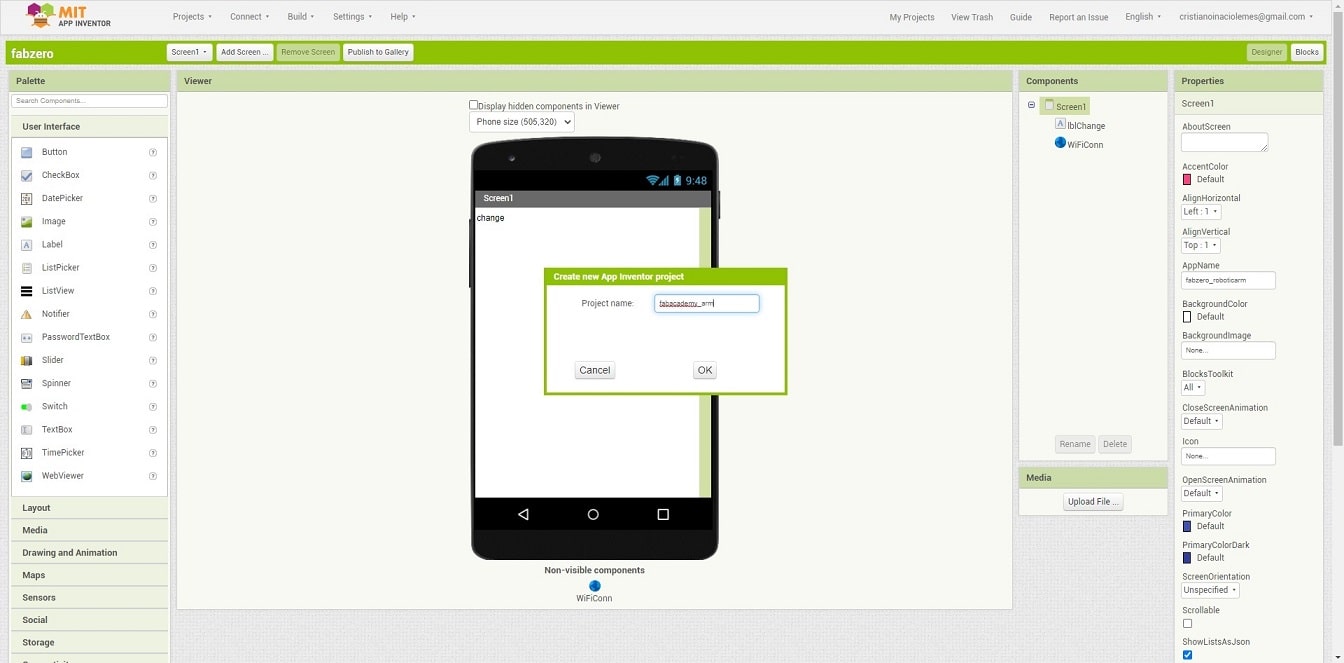

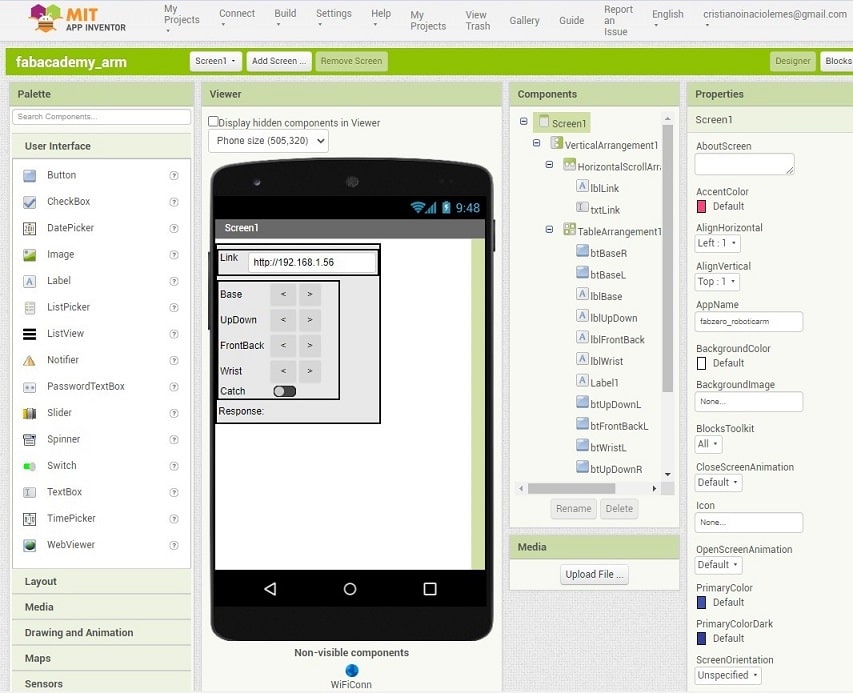

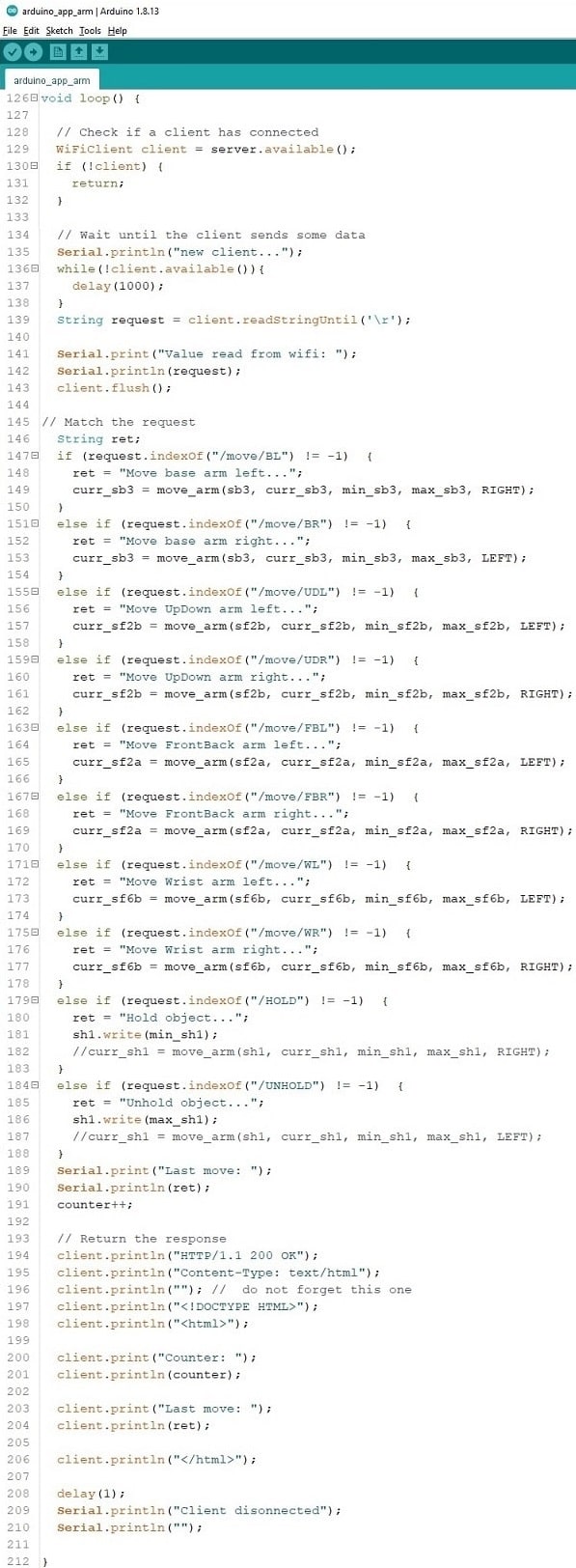

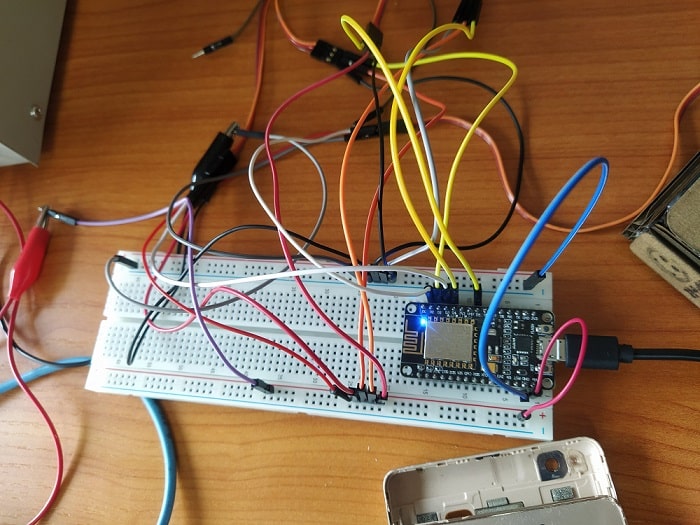

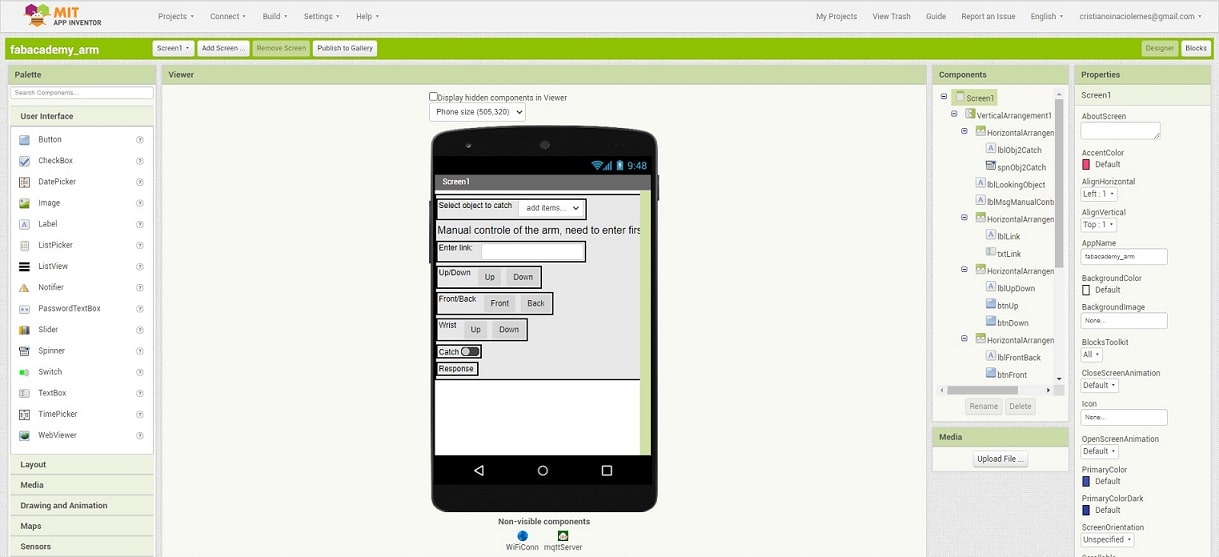

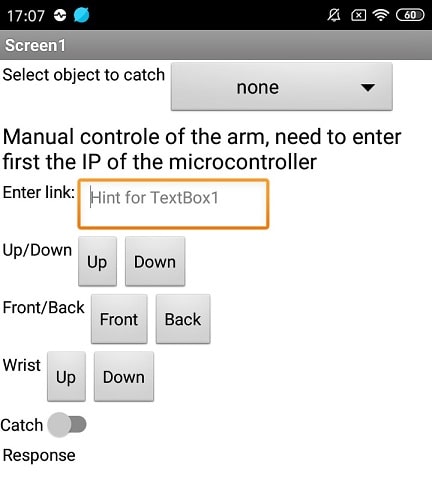

To check whether the arm moves as expected, I designed a simple mobile app that performs all the possible movements of the arm. I used MIT App Inventor to build it, as shown in the Interface and Applications assignment. I used the Wemos as the microcontroller, with its ESP8266 providing the WiFi communication between the app and the microcontroller.

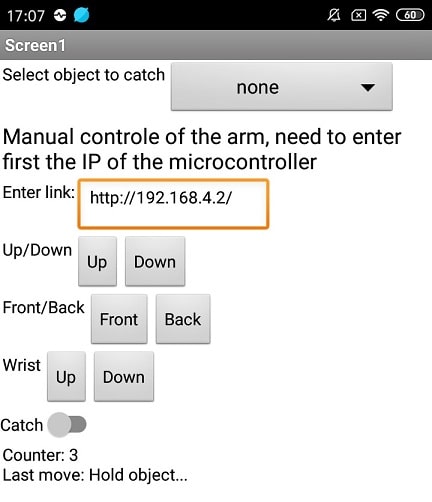

Mobile app v1¶

I created a new project in MIT App Inventor.

The app contains the following components:

- A field where the user types the IP address (and link) of the microcontroller. This link is used to send requests to the microcontroller via REST (e.g. http://192.168.1.56/move/ARM).
- Two buttons to move the base of the arm right and left.
- Two buttons to move the arm up and down.
- Two buttons to move the arm front and back.
- Two buttons to move the wrist of the arm up and down.
- A switch to open and close the catcher of the arm.
- A label that shows the response to each request.
- The non-visible WiFi component, which enables the mobile application to connect to the network and send HTTP requests to a server (configured on the microcontroller).

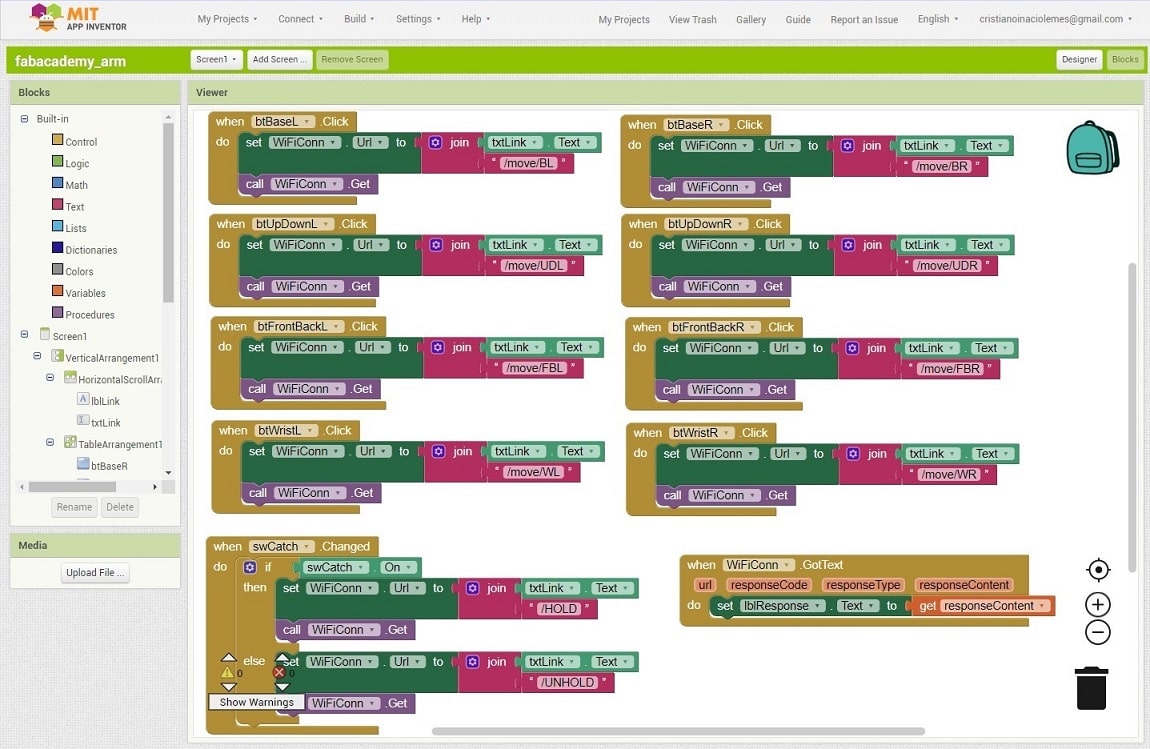

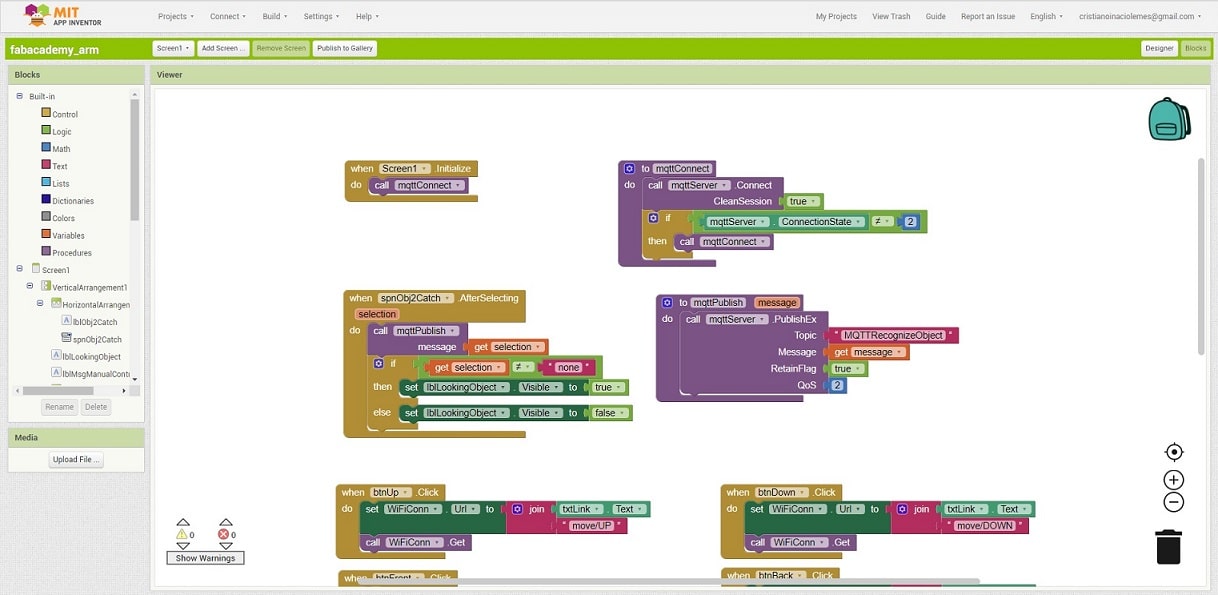

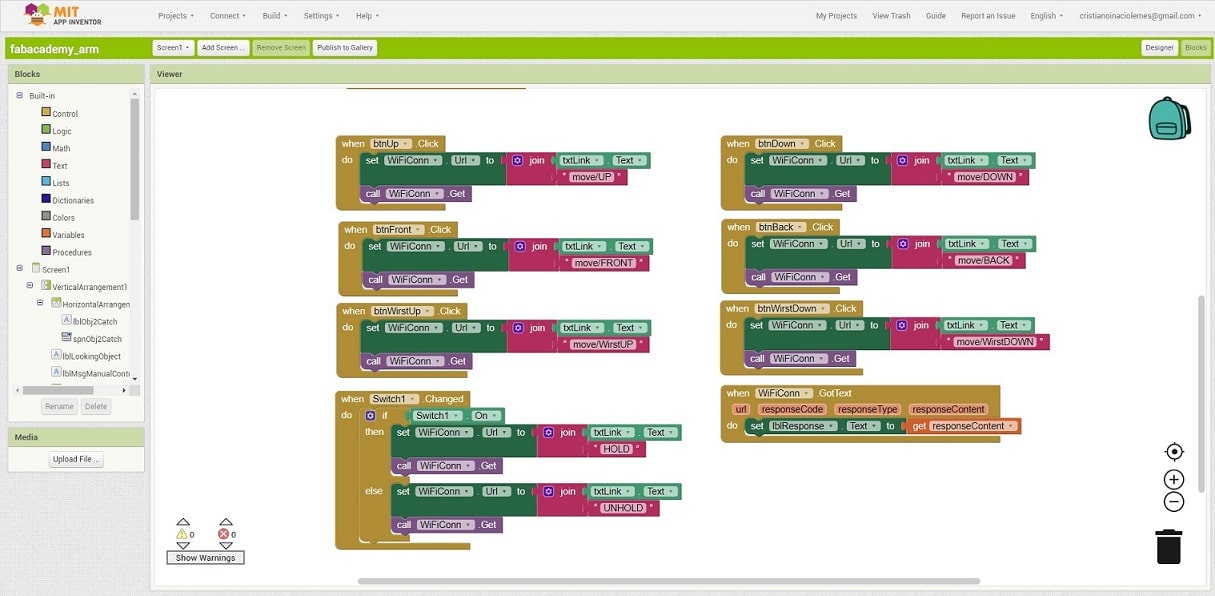

The blocks below implement the actions of the buttons in the mobile application. Each button builds a link containing a code that the microcontroller interprets to move the arm, and sends it to the server as an HTTP GET request. The link starts with the content of the Link input field, followed by the code that indicates which movement the arm should make. When the server sends the HTTP response, its content is assigned to the response label.

- to move the base left and right: /move/BL and /move/BR
- to move up and down: /move/UDL and /move/UDR
- to move front and back: /move/FBL and /move/FBR
- to move the wrist up and down: /move/WL and /move/WR
- to catch and release: /HOLD and /UNHOLD
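Because the command scheme is plain HTTP GET, it can be exercised without the phone, for example from a laptop on the same network. The sketch below mirrors the link-building blocks in Python. It assumes the Link field holds only the base address (e.g. http://192.168.1.56) and that the codes are appended exactly as listed; the function names are hypothetical.

```python
from urllib.request import urlopen

MOVE_CODES = {"BL", "BR", "UDL", "UDR", "FBL", "FBR", "WL", "WR"}
CATCH_CODES = {"HOLD", "UNHOLD"}


def build_command_url(base_link, code):
    """Join the Link field with a command code, as the app blocks do."""
    base = base_link.rstrip("/")
    if code in MOVE_CODES:
        return f"{base}/move/{code}"
    if code in CATCH_CODES:
        return f"{base}/{code}"
    raise ValueError(f"unknown command code: {code!r}")


def send_command(base_link, code, timeout=3.0):
    """Send the HTTP GET and return the body (what the app shows in its response label)."""
    with urlopen(build_command_url(base_link, code), timeout=timeout) as resp:
        return resp.read().decode("utf-8", errors="replace")
```

With the microcontroller reachable at that address, send_command("http://192.168.1.56", "BL") would send the same request as the base-left button.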

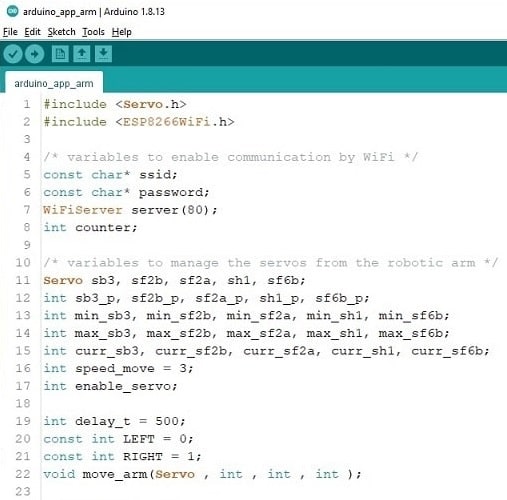

Microcontroller code¶

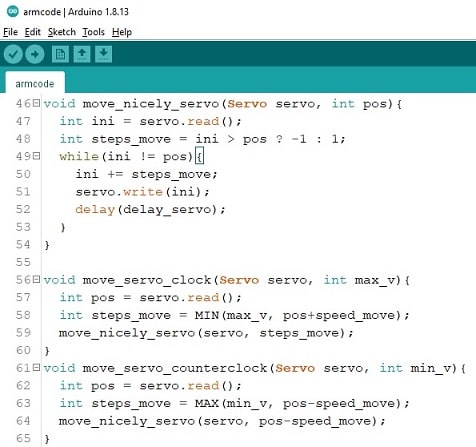

The important parts of the microcontroller code were explained in the assignments. Here, the focus is on the code that integrates both parts: the mobile application and the robotic arm.

Variable and function declarations:

- to connect to the WiFi network;
- to manage and move the servos.

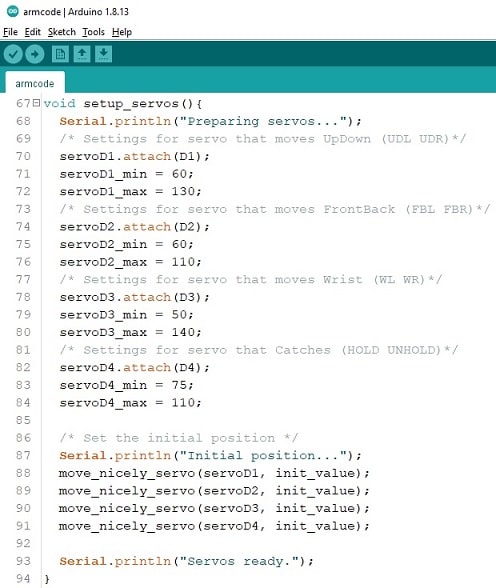

Function to initialize the servos.


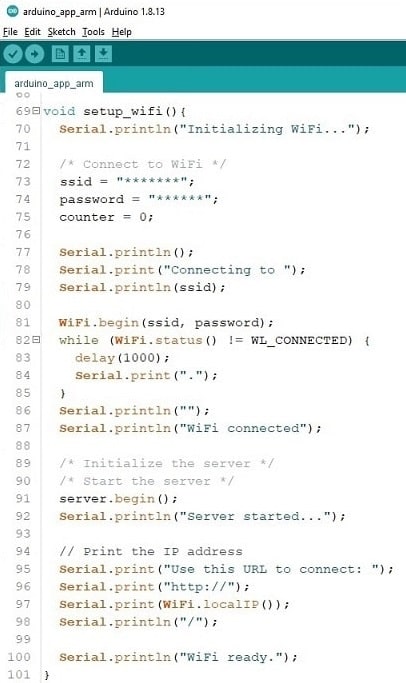

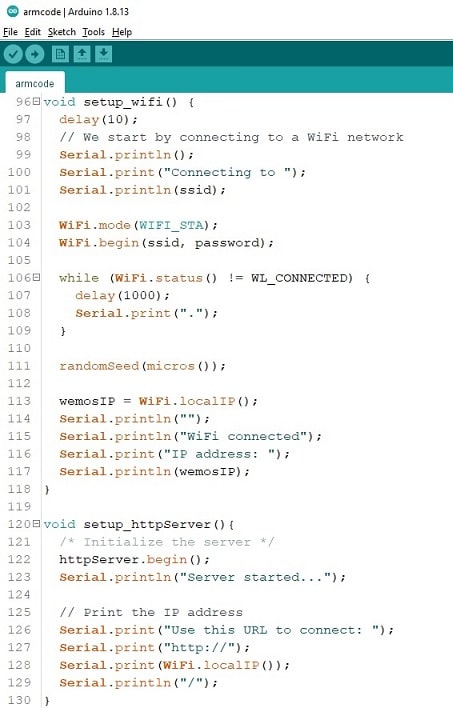

Function to initialize the WiFi connection. Remember to change the SSID and password to those of your WiFi network.

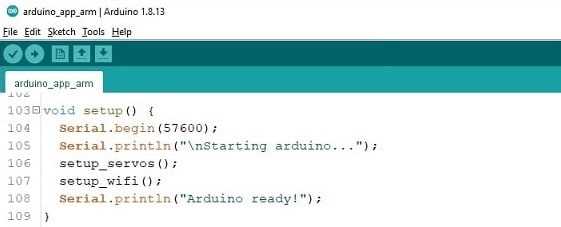

Main function to set up the microcontroller.

Implementation of the function that moves the arm.

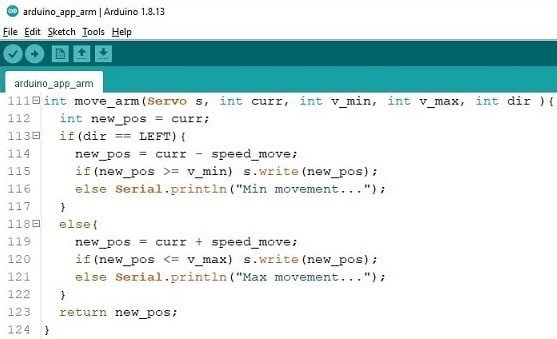

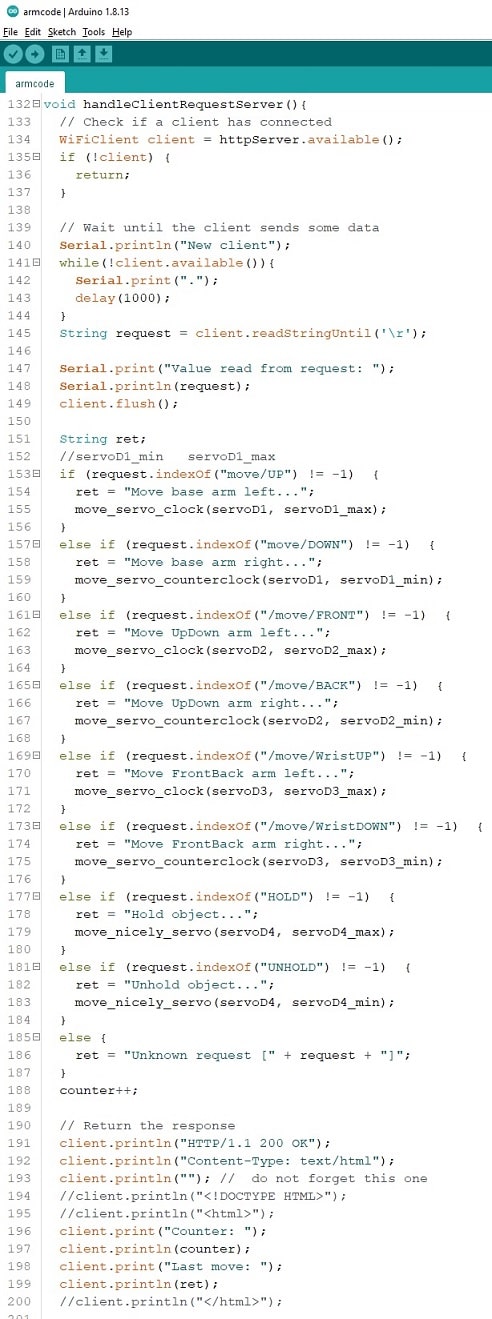

Main loop function, where the requests from the mobile application are handled. For each request received, the code checks which movement code is present in the URL and moves the corresponding servo. For example, if the URL contains /move/BL, the arm moves left (servo sb3). Finally, the microcontroller sends back an HTML response containing a counter of the requests received and the last movement performed.

Download the INO file of the microcontroller code.

Drawing PCB¶

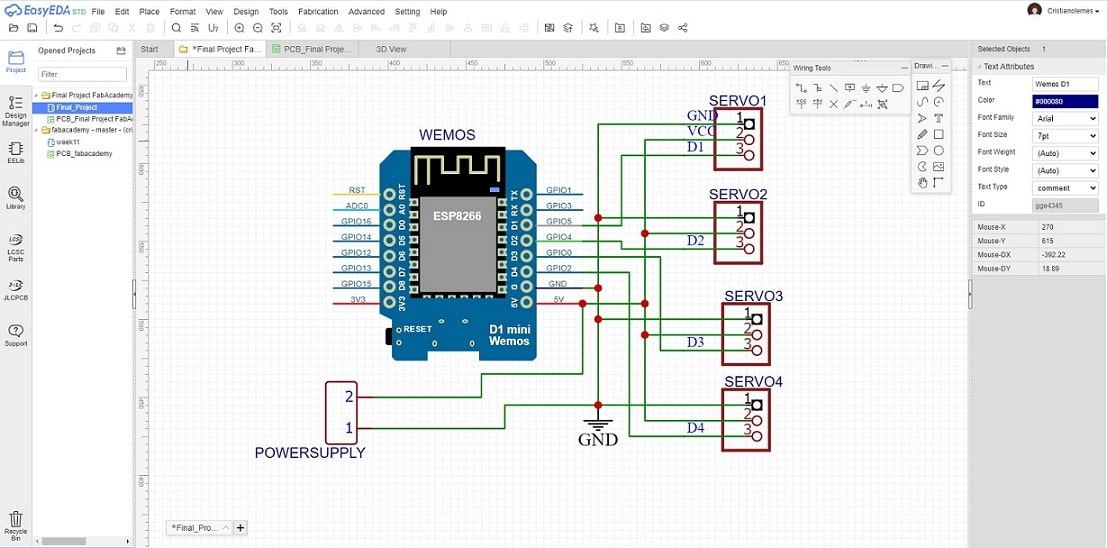

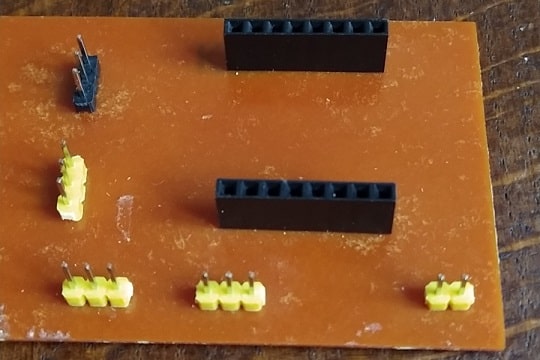

To reduce the number of wires and make the arm easier to set up, I designed a PCB, as shown here. The list of components did not change from the first prototype, except for the microcontroller: I decided to use a Wemos because it is cheaper than an Arduino Uno. The board only provides pin headers to connect the components (Wemos and servos). I also added an extra pin header for an external power supply, in case the Wemos cannot provide enough power to the board.

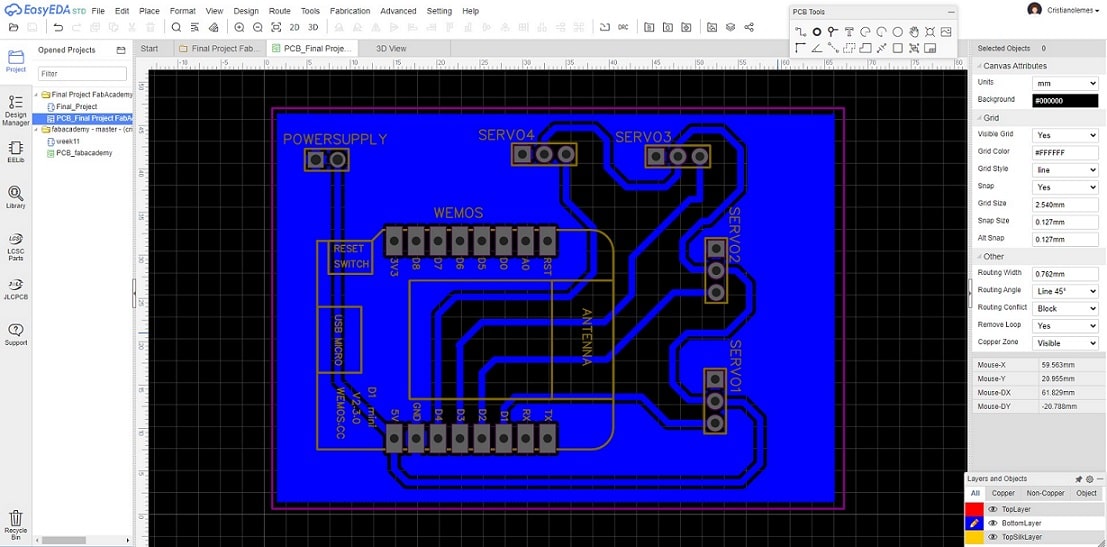

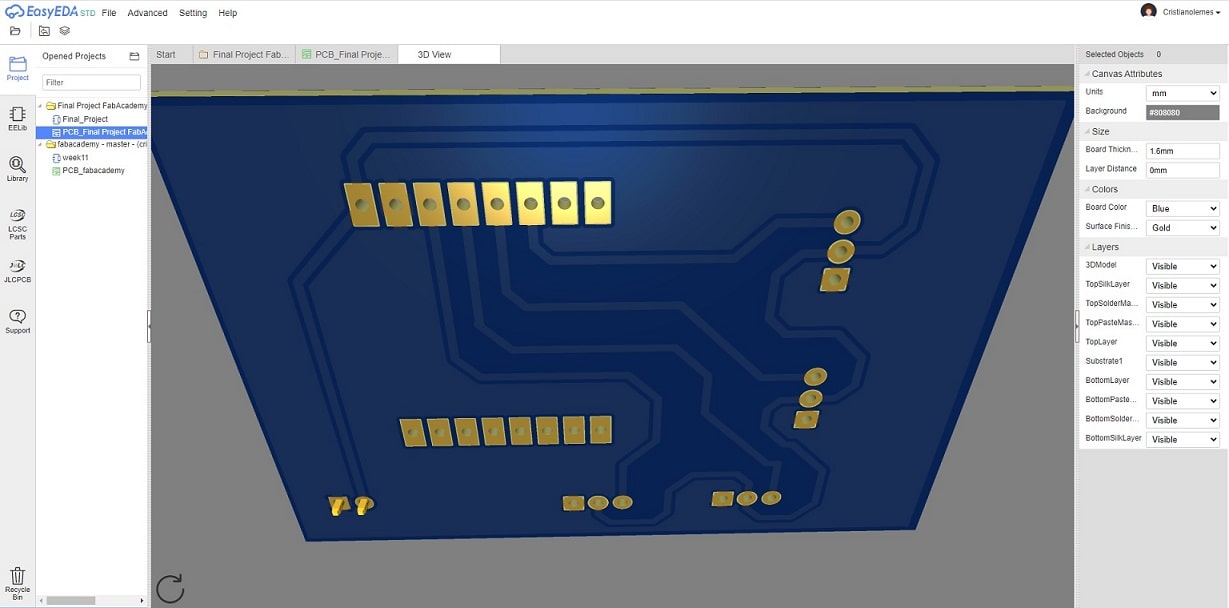

I used the web-based EasyEDA, the software I had used during the course to design PCBs. Our lab had acquired single-sided boards and I decided to use one, which makes this PCB slightly different from the ones designed during the course: the traces must be drawn on the bottom of the board, and the components are placed on the top. So, after finishing the schematic, I selected the bottom layer to draw the traces.

I decided to use a Wemos with an ESP8266 as the microcontroller so that I could advance further in the final project. I added the correct footprint for it and four 1x3 pin headers to connect the servos, plus two pins for an external power supply, because the Wemos might not provide enough power for all the servos of the arm. I also decided to redesign the base without the servo used in the prototype. This will be done later, if I have time left.

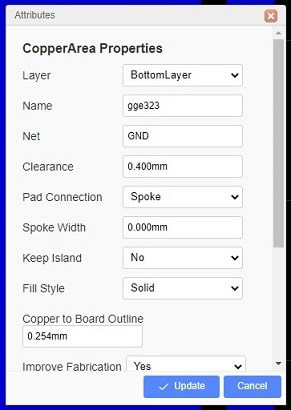

From the previous schematic, I generated the PCB on the bottom layer of the board, as mentioned before. I drew the traces that carry the signals and power, and filled the rest of the board as a GND copper area. The copper area attributes in EasyEDA are shown below.

Copper area attributes.

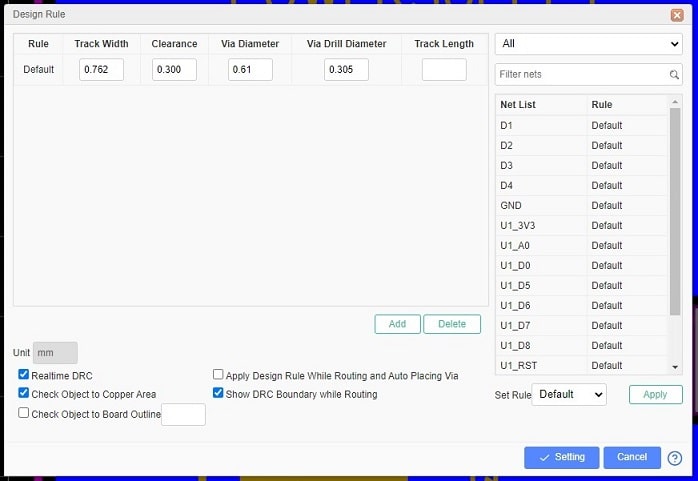

The design rules set the width of the traces; this is the configuration I used to design the PCB.

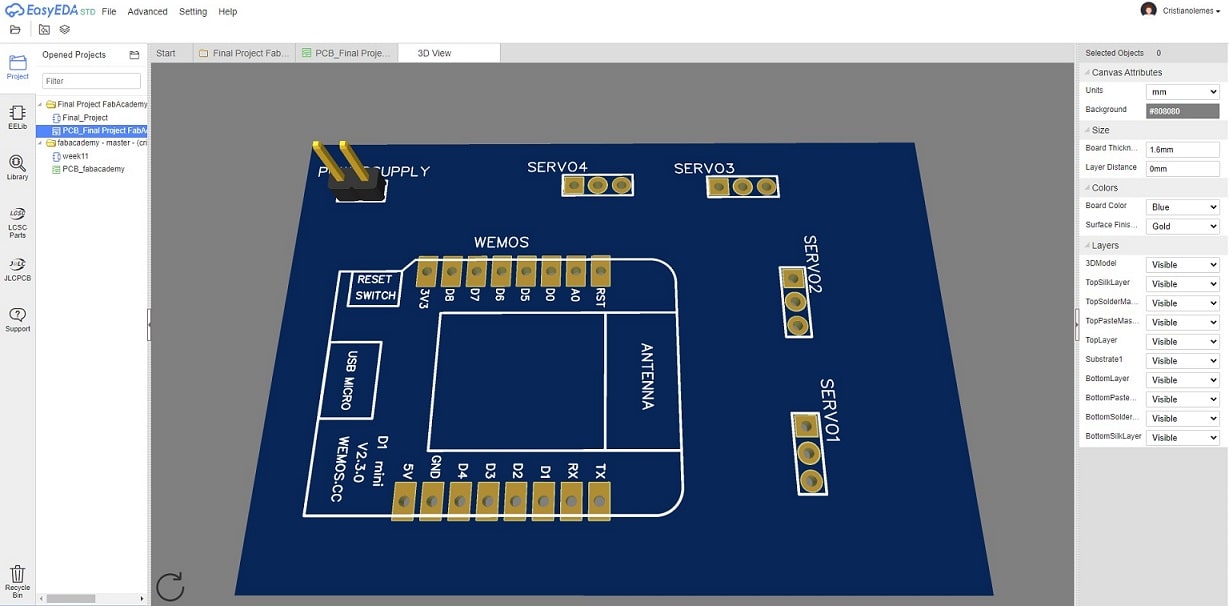

The 3D views of the top and bottom layers of the PCB, respectively.

From this design, I generated the Gerber files for all layers, which are used to mill the board.

Download the ZIP file of the PCB designed in this step.

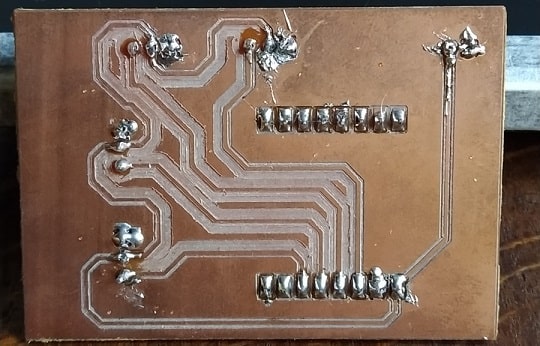

Milling PCB¶

The PCB was milled using two generated files: Gerber_BottomLayer and Gerber_Drill. The procedure for milling a board was explained in detail in previous assignments of this course; here, I describe the steps for milling this particular PCB.

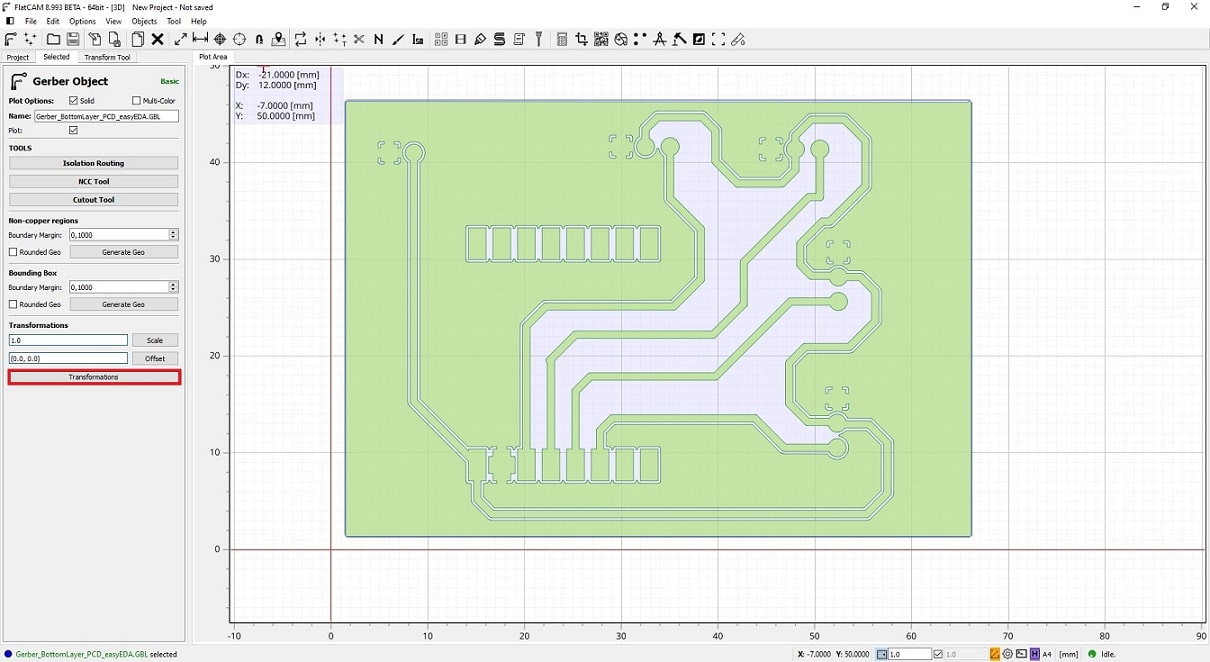

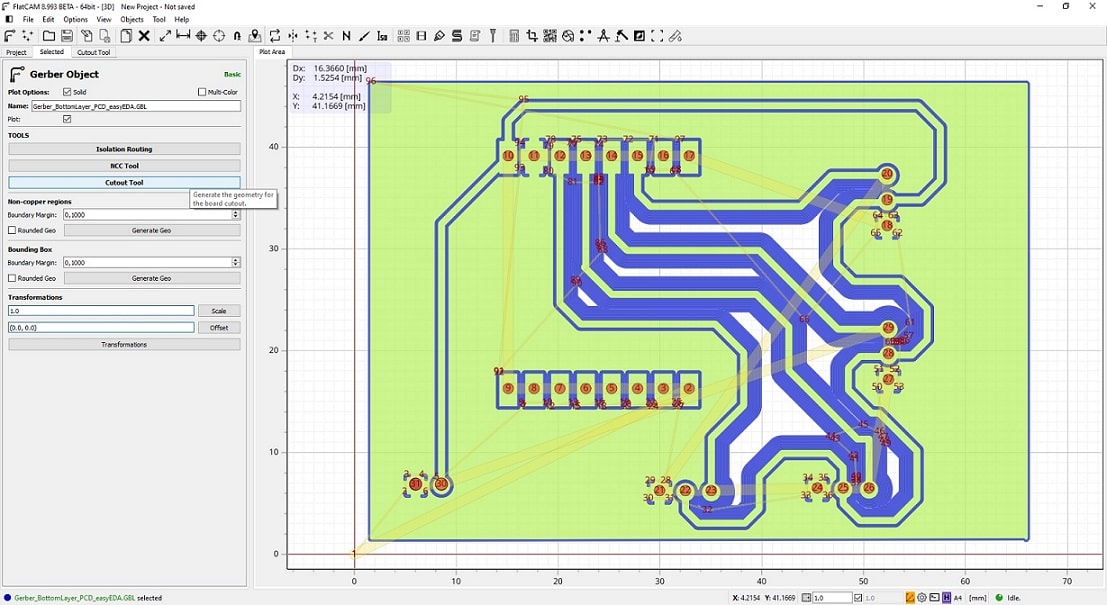

I imported the file Gerber_BottomLayer into FlatCAM and clicked the Transformations menu on the left side.

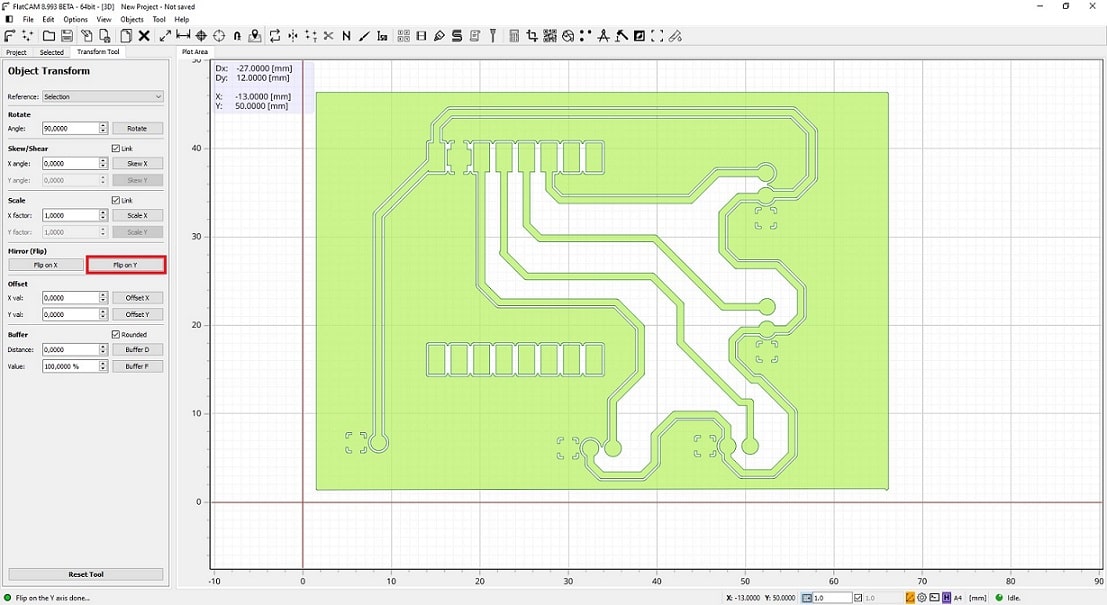

The imported file has the traces as seen from the top layer, where the components are placed. To mill the traces on the bottom of the board, they must be mirrored first; only then do the components end up in the correct position. I used the Flip on Y button in the Mirror (Flip) section on the left side.
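Mirroring a layer reflects every x coordinate across a vertical line (x becomes 2c - x, where c is the position of that line). What matters is that the traces and, later, the drill holes are mirrored about the same line; otherwise the holes will not land on the pads. The sketch below shows this with made-up coordinates. Which reference point FlatCAM's Flip on Y uses (for example the bounding-box centre of the selection or a user-defined point) depends on its settings, so treat that part as an assumption.

```python
def mirror_x(points, axis_x):
    """Mirror (x, y) points left-right across the vertical line x = axis_x."""
    return [(2 * axis_x - x, y) for x, y in points]


def bbox_center_x(points):
    """x coordinate of the centre of the points' bounding box."""
    xs = [x for x, _ in points]
    return (min(xs) + max(xs)) / 2


# Hypothetical pad centres (mm) from the trace layer and from the drill file.
traces = [(0.0, 0.0), (10.0, 5.0), (30.0, 5.0)]
holes = [(10.0, 5.0), (30.0, 5.0)]

axis = bbox_center_x(traces)  # one reference line for every layer
print(mirror_x(traces, axis))
print(mirror_x(holes, axis))  # holes stay on top of the mirrored pads
print(mirror_x(holes, bbox_center_x(holes)))  # own centre: holes drift off the pads
```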

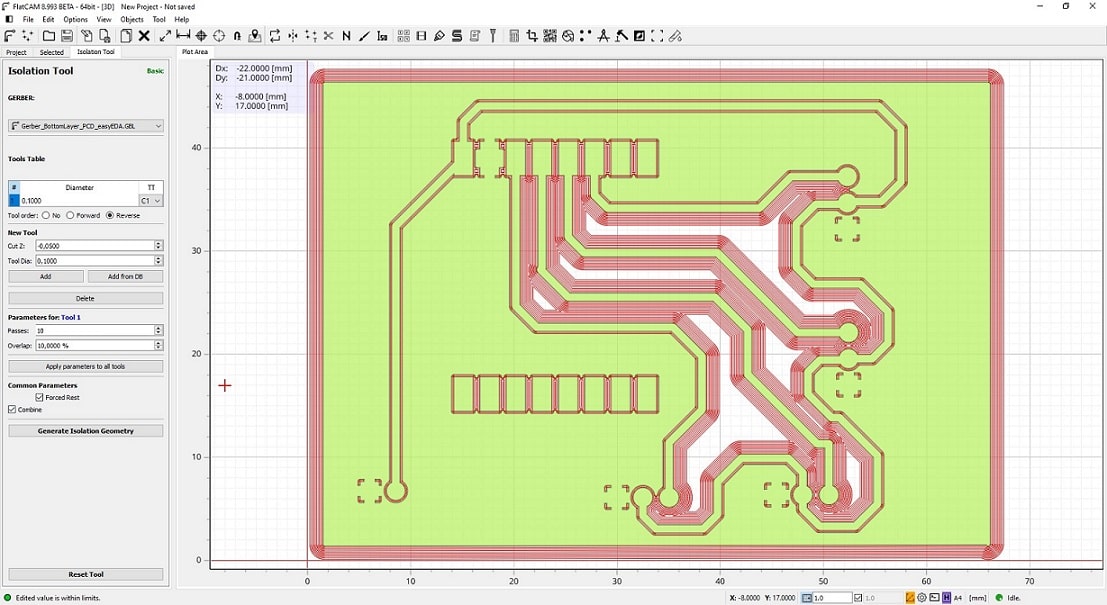

Back in the Selected tab, I used the Isolation Routing button to create the toolpaths for the milling machine. I used 10 passes (which creates ten milling lines around each trace) and checked the Combine option (otherwise FlatCAM generates a separate object for each pass, which would be very complicated to send to the machine).
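The number of passes and the overlap determine how wide a band of copper is cleared around each trace. The sketch below assumes the first pass is offset by half the tool diameter and each further pass steps outward by tool_dia × (1 - overlap); the tool diameter and overlap values are hypothetical, so use the ones configured for your machine.

```python
def isolation_offsets(tool_dia, passes, overlap):
    """Distance from the copper edge to the tool centre for each isolation pass.

    Assumes the first pass is offset by half the tool diameter and each further
    pass steps outward by tool_dia * (1 - overlap).
    """
    step = tool_dia * (1 - overlap)
    return [tool_dia / 2 + i * step for i in range(passes)]


def cleared_width(tool_dia, passes, overlap):
    """Width of the copper band removed around each trace."""
    return isolation_offsets(tool_dia, passes, overlap)[-1] + tool_dia / 2


# Hypothetical values: 0.2 mm cutting width, 10 passes, 15 % overlap.
print(f"{cleared_width(0.2, 10, 0.15):.2f} mm cleared around each trace")
```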

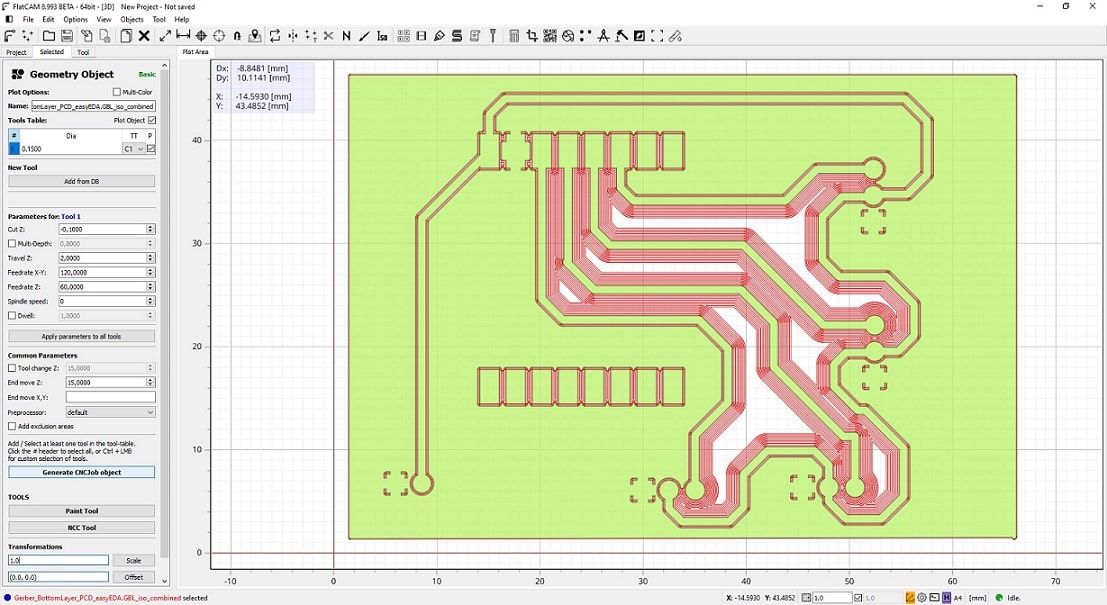

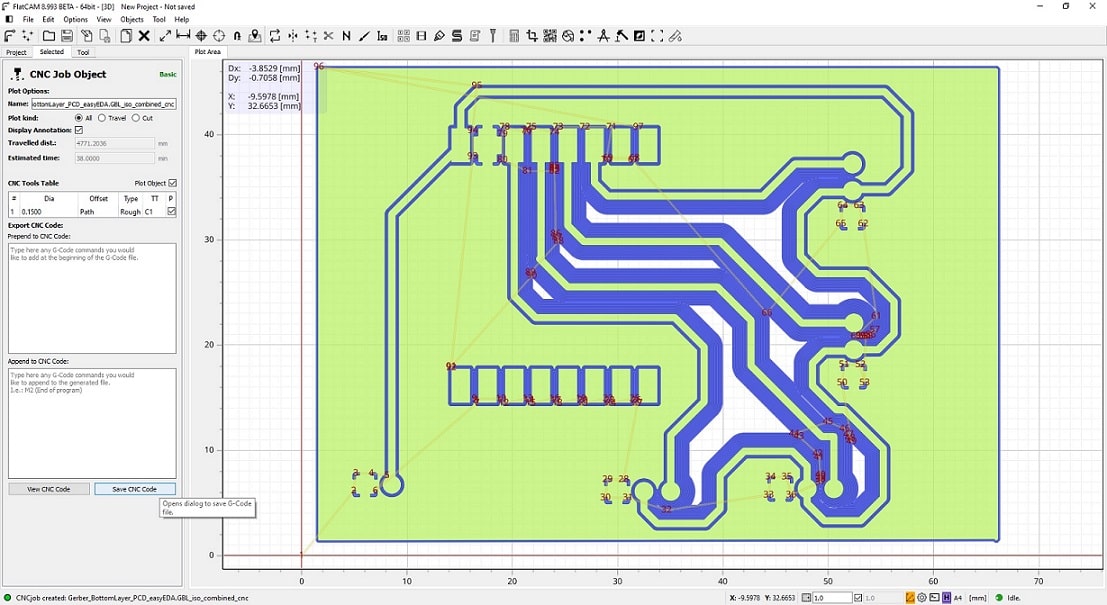

After the isolation geometry is created, FlatCAM switches to the Selected tab with the newly generated object selected. From there, I created the CNCJob object that is used to mill the board on the machine. These properties must be adapted to your machine; this configuration is for the machine in our lab.

I saved the CNC code of the object created in the previous step.

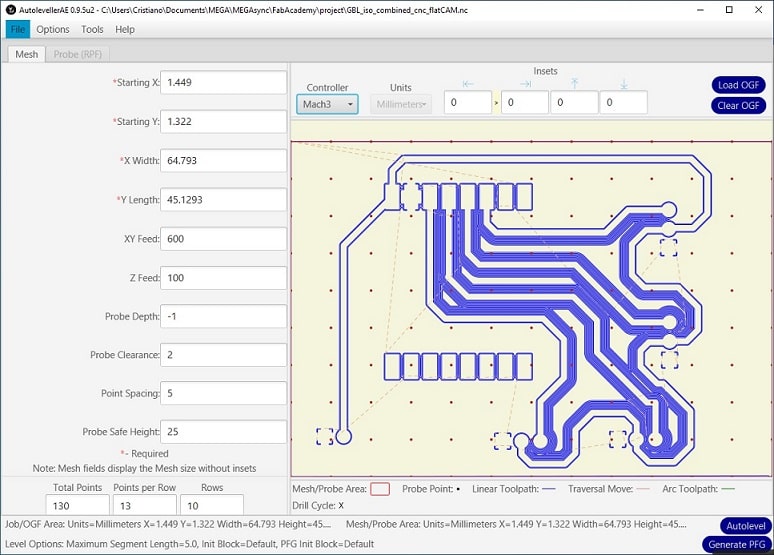

I imported the generated file into Autoleveller, kept the default property values, and clicked Generate PFG. Note the values of the properties Point Spacing, Points per Row, and Rows: they define the probing grid. The resulting CNC code is uploaded to the machine to generate the probing file, which is then used to adjust the CNC code for milling the board.

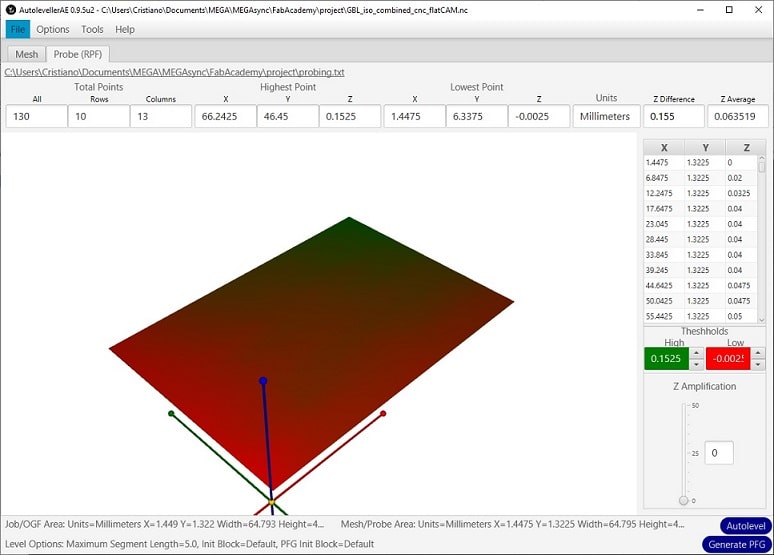

After the machine generated the probing file using the previous CNC code, I imported it into Autoleveller and ran the Autolevel command. Check the Z Difference property: if it is higher than 0.3, you should check the level of the milling machine bed. For more details about how the probing file was generated, see the Input Devices assignment.
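Autolevelling works because the probe grid (Point Spacing, Points per Row, Rows) gives a height map of the board, and each Z move of the milling code can be shifted by the height interpolated at its XY position. The sketch below illustrates that general technique with bilinear interpolation over a hypothetical grid; it is not Autoleveller's source code. It also computes the spread between the highest and lowest probe points, which is presumably what Z Difference reports, and applies the 0.3 threshold from the text (in the units of the probe file).

```python
def z_difference(grid):
    """Spread between the highest and lowest probed heights."""
    values = [z for row in grid for z in row]
    return max(values) - min(values)


def interpolate_z(grid, spacing, x, y):
    """Bilinear interpolation of the probed surface height at (x, y).

    grid[r][c] is the height probed at (c * spacing, r * spacing); the grid
    needs at least 2 rows and 2 points per row.
    """
    rows, cols = len(grid), len(grid[0])
    # Grid cell containing (x, y), clamped to the probed area.
    c = min(max(int(x // spacing), 0), cols - 2)
    r = min(max(int(y // spacing), 0), rows - 2)
    tx = min(max(x / spacing - c, 0.0), 1.0)
    ty = min(max(y / spacing - r, 0.0), 1.0)
    near = grid[r][c] * (1 - tx) + grid[r][c + 1] * tx
    far = grid[r + 1][c] * (1 - tx) + grid[r + 1][c + 1] * tx
    return near * (1 - ty) + far * ty


def leveled_z(grid, spacing, x, y, z):
    """Shift a programmed Z depth by the local surface height."""
    return z + interpolate_z(grid, spacing, x, y)


# Hypothetical 2 x 3 probe grid with 10 mm spacing.
probe = [[0.00, 0.05, 0.10],
         [0.02, 0.07, 0.12]]
if z_difference(probe) > 0.3:
    print("Z difference too high: check the level of the machine bed")
print(f"{leveled_z(probe, 10, 5, 5, -0.1):.3f}")
```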

The bottom of the board was milled with the code generated by the Autolevel command.

Next, I drilled the holes for the components. Important: do not remove the board or change any parameter on the machine, otherwise the holes may end up in the wrong place.

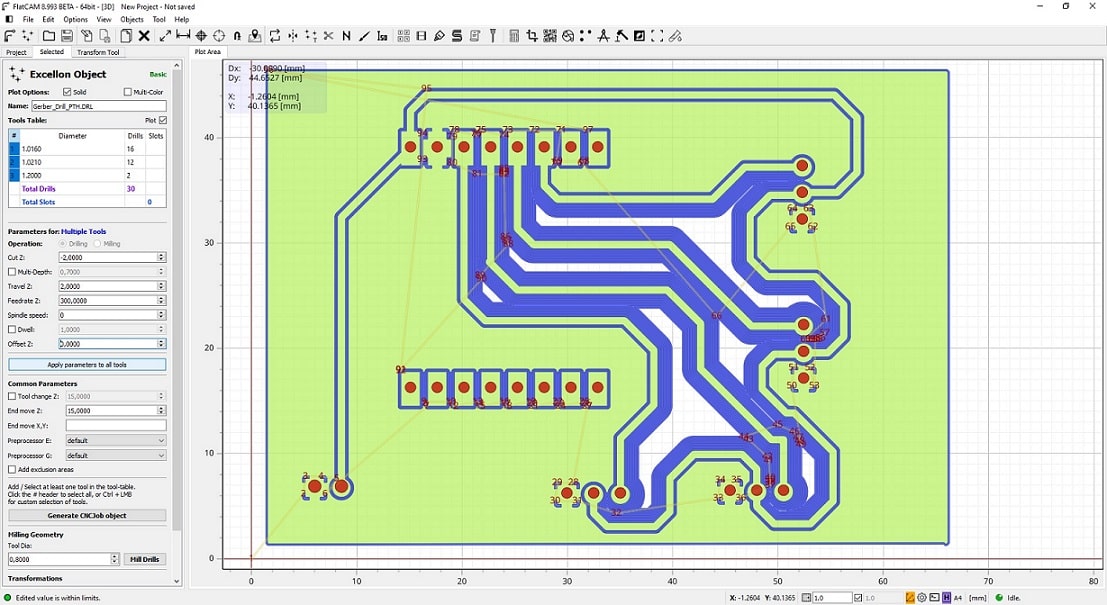

I imported the Gerber_Drill file, also generated by EasyEDA, into FlatCAM. I also had to flip the holes so that they end up in the correct place. Then I generated the CNCJob object that defines the drilling points.

The generated file can be used to drill the holes as it is, with no adjustment. I loaded it into the machine and drilled the holes.

Now the board must be cut out, using the original Gerber_BottomLayer object imported in FlatCAM. Again, remember not to move the board or change any configuration in the machine software.

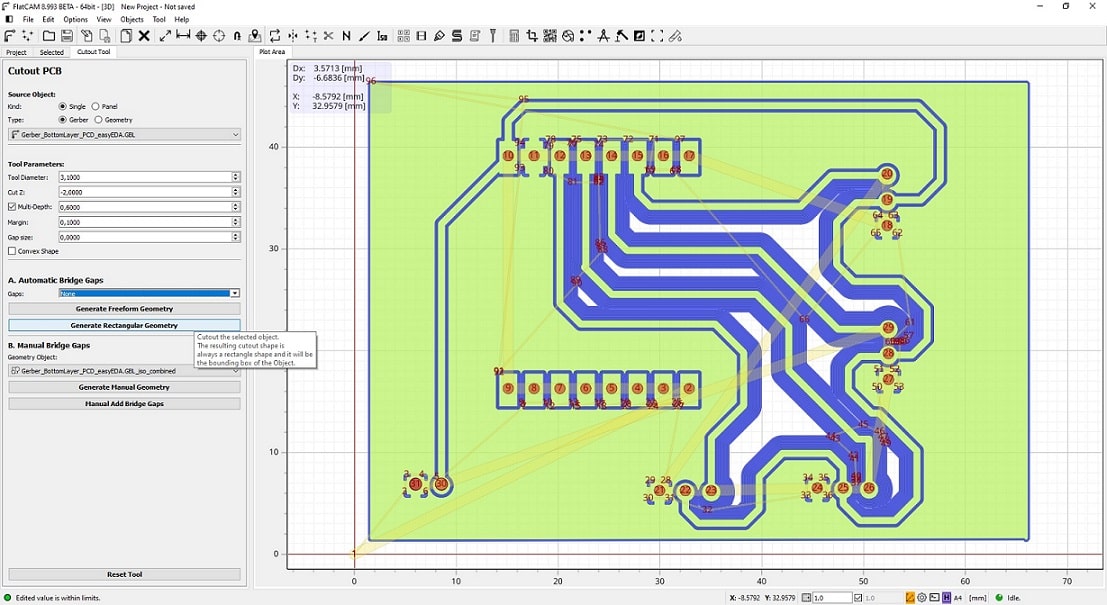

Select the Gerber object, go to the Selected tab, and click Cutout Tool.

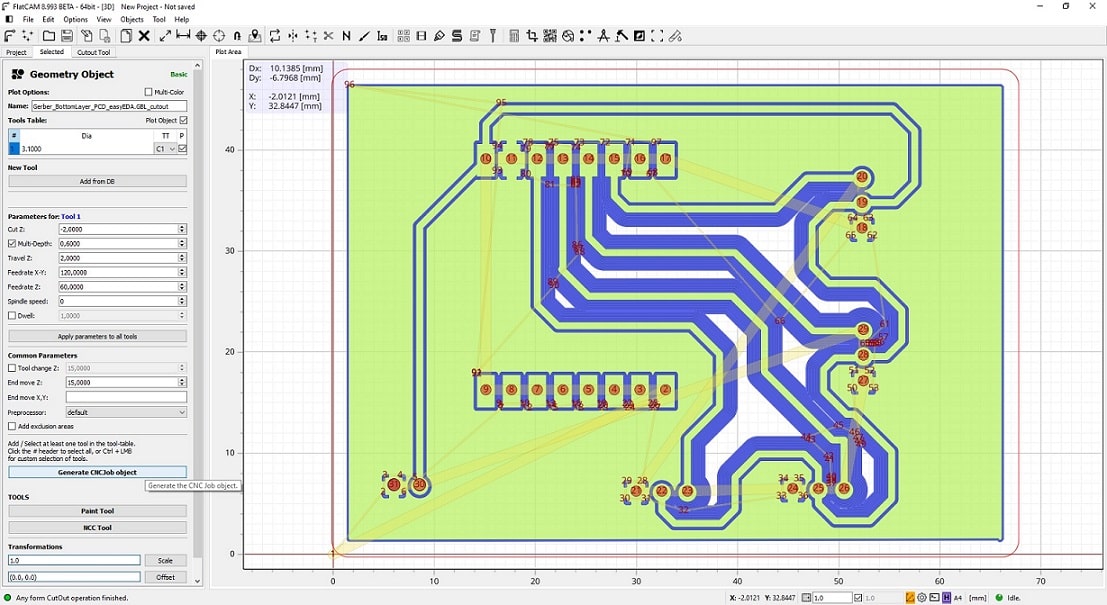

With the object selected, click Generate Rectangular Geometry in the Cutout Tool tab. This generates a line around the board that is used to create the CNC code to cut it out. Note that to cut the board out completely, the Gaps parameter must be set to none.
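With no gaps, the rectangular cutout is a single closed loop around the board's bounding box. The sketch below computes that loop, assuming the finished edge sits margin away from the copper, so the tool centre must run a further half tool diameter outside it. The board size, margin, and tool diameter are hypothetical; FlatCAM's Cutout Tool has its own margin and tool diameter fields, whose exact semantics should be checked in its documentation.

```python
def rectangular_cutout(bbox, margin, tool_dia):
    """Closed tool-centre path for cutting a rectangle around the board.

    bbox is (xmin, ymin, xmax, ymax) of the copper. Assumes the finished edge
    sits `margin` outside the bbox, so the tool centre runs a further half
    tool diameter out. No gaps: the path is one closed loop.
    """
    xmin, ymin, xmax, ymax = bbox
    d = margin + tool_dia / 2
    return [(xmin - d, ymin - d), (xmax + d, ymin - d),
            (xmax + d, ymax + d), (xmin - d, ymax + d),
            (xmin - d, ymin - d)]  # back to the start: the board is cut free


# Hypothetical 40 x 30 mm board, 1 mm margin, 2 mm end mill.
path = rectangular_cutout((0, 0, 40, 30), margin=1.0, tool_dia=2.0)
print(path)
```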

Select the generated geometry object and, in the Selected tab, click Generate CNCJob object. In the next tab (with the CNCJob selected), click Save CNC Code.

The generated file is ready to be loaded into the milling machine to cut out the board; no adjustment is needed.

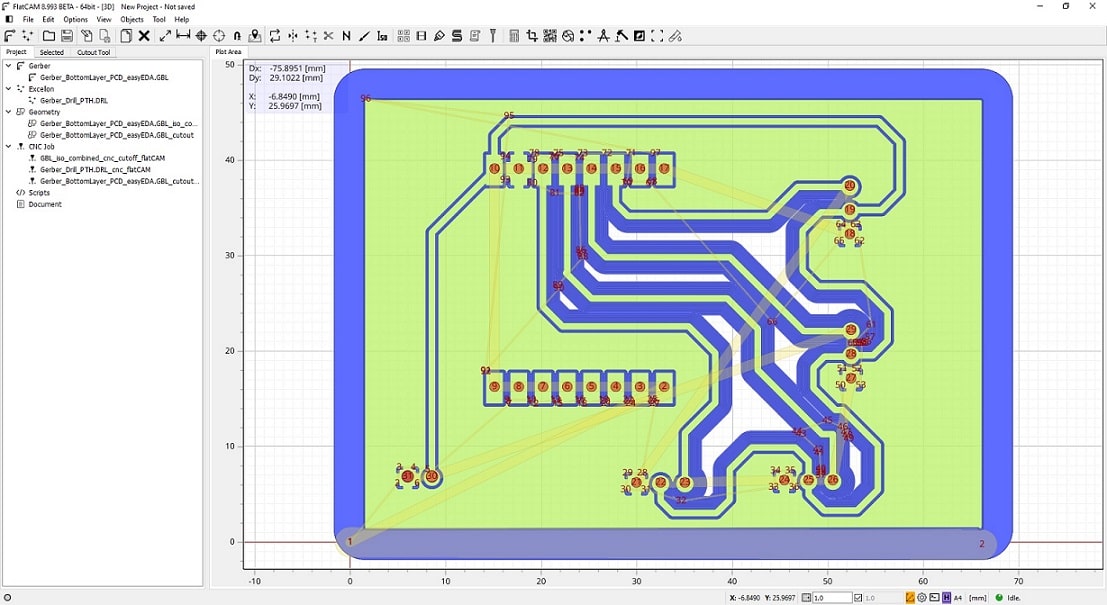

This is the final view of FlatCAM with all the objects generated for milling the board. Because the generated files are specific to the machine I used, you should follow the steps described in this section to generate files adjusted to your own machine before milling the board.

Board.

Board connected to the robotic arm.

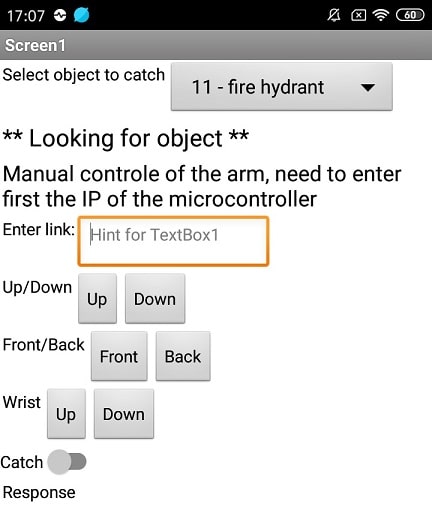

Design Mobile App v2¶

The mobile app will be the interface between the user and the system. It will let the user select the object to be caught automatically by the arm. The first version of the mobile app let the user control and move the arm; now the app also needs to communicate with the object detection program. I did not create a new project, since I already had version 1 of the mobile app. I made some slight changes to the blocks that communicate with the microcontroller, changing the links that send the commands.

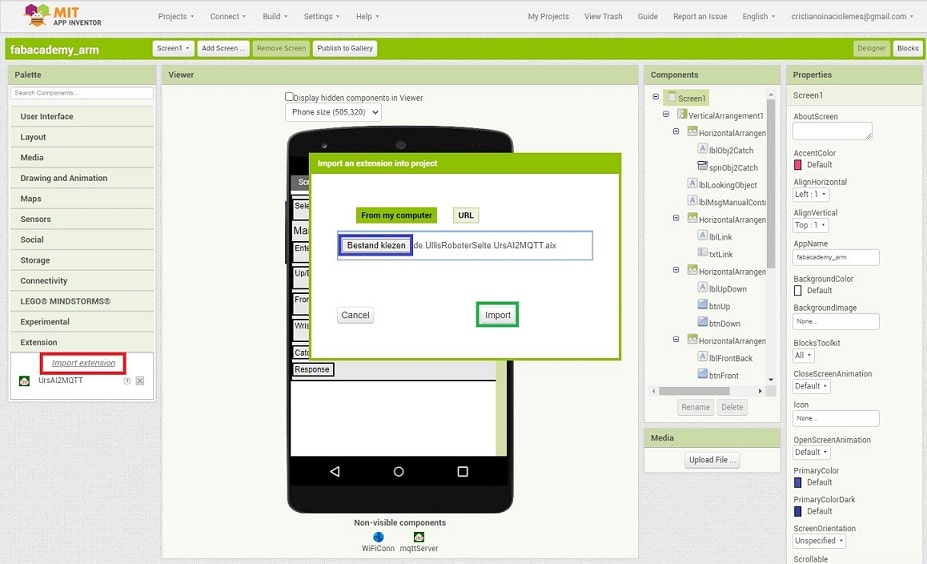

In the Networking and communications assignment, I used an MQTT broker to make two components communicate with each other over a WiFi network. I decided to use the same communication system between the different parts of the final project. I found the tutorial ESP32. MQTT. Broker. Publish. Subscribe. ThingSpeak, which explains, among other things, how to integrate MQTT with MIT App Inventor. It uses the extension UsrAI2PahoMqtt, which enables the mobile application to exchange messages via an MQTT broker.

This is how I configured MIT App Inventor:

- First, I downloaded the UsrAI2PahoMqtt library and unzipped its content.
- In the Designer page, I selected the Import extension link (red box).
- I selected the .aix file downloaded in the first step (blue box).
- I clicked the Import button (green box).
- This added a new component on the left side of App Inventor that can be added in the Designer, just as the WiFi component was added.

In addition to the version 1 design, I added a list of the objects that the robotic arm can catch. This list was obtained from the artificial intelligence model that performs the object detection on the camera images. I also added a label with a message indicating whether the object detection has started, and the component from the UsrAI2PahoMqtt library that performs the communication via the MQTT broker. The configuration of the MQTT server is explained in Networking and communications. I used the IP address of the Access Point configured before (192.168.4.1) and the default port (1883).

In the Blocks section I had to make some changes to enable the mobile application to connect to the MQTT server, and I had some problems connecting. Initially, when the type of object was selected, the app opened the connection to the server, sent the message, and disconnected. This strategy was unreliable: sometimes the app did not deliver the message to the object detection program.

To fix this, the app now opens the connection with the server when it starts, in the Initialize event of the main screen. The procedure mqttConnect is called recursively until the app is connected to the MQTT server. The side effect of this implementation is that, if the server is down, the app does not open and shows a white screen until the server is up again. It would probably be better to call mqttConnect outside that event and, once connected, enable all the components of the app. To save time, I kept this implementation, and I am aware that it should be improved later if I have time left.
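The improvement suggested above (try to connect, and enable the app only once connected, instead of retrying forever) can be sketched as a bounded retry with a growing delay. This is Python, not App Inventor blocks, and the function and parameter names are hypothetical; the same idea would apply to the mqttConnect procedure.

```python
import time


def connect_with_retry(connect, attempts=5, delay_s=1.0, backoff=2.0, sleep=time.sleep):
    """Call connect() up to `attempts` times, waiting longer after each failure.

    Returns True once connected, or False if every attempt failed, so the
    caller can show an error instead of freezing on a blank screen.
    """
    for attempt in range(attempts):
        try:
            connect()
            return True
        except OSError:  # e.g. ConnectionRefusedError when the broker is down
            if attempt < attempts - 1:
                sleep(delay_s)
                delay_s *= backoff
    return False
```

With paho-mqtt, connect could be lambda: client.connect("192.168.4.1", 1883). A refused or unreachable connection raises an OSError subclass, which is what the function catches; the UI would be enabled only when it returns True.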

When the user selects an object in the selection box snpObj2Catch, the app sends the text of the item to the server. It is assumed that the object detection program subscribes to a topic named MQTTRecognizeObject. If a valid object was selected, the label lblLookingObject is enabled; otherwise, it is disabled.

The second part of the blocks is very similar to version 1 of the mobile application. I only changed the text of the links that send commands to the microcontroller, to make them clearer.

Object detection¶

Among the technologies that provide machine learning models, I decided to use TensorFlow. Why? It is open source and was created by Google, one of the biggest companies driving the field of artificial intelligence. It is also mainly used through Python, and I wanted to gain some experience with this language.

These are the components used for the object detection:

- a Raspberry Pi;
- a Pi Camera.

I took advantage of the Raspberry Pi I had configured as an Access Point (in the Networking and communications assignment), which provides a private network for the system in the final project. This device was also configured as the MQTT server. Since I needed a device with some processing power, I decided to run the object detection on it as well. This saved the time of configuring another device for this task, which would have required installing many frameworks and libraries.

Setup Raspberry Pi camera¶

I started this phase by setting up the Raspberry Pi camera. I found the tutorial Beginner Project: A Remote Viewing Camera with Raspberry Pi, which explains very clearly how to do it. It shows how to set up the frameworks needed to stream video over a socket using Python, and provides the basic code to run the stream over the network. The camera installation was simple and straightforward, as was the Python configuration. Initially, I planned to adapt this code to take pictures from the camera at a configured time interval and then process the images. However, I found a better solution, which is explained later.

I executed the following steps to enable the video stream from the camera.

Update the operating system configuration:

```bash
sudo raspi-config
```

In the menu, enable:

- Camera (menu: Interface Options > Camera > Enabled)
- VNC Viewer (menu: Interface Options > VNC > Enabled)
- SSH (menu: Interface Options > SSH > Enabled)

Then restart the Raspberry Pi:

```bash
sudo reboot
```

Test that the camera works correctly:

```bash
raspistill -o Desktop/image.jpg   # take a picture
raspivid -o Desktop/video.h264    # record a video
```

Update the operating system:

```bash
sudo apt-get update
sudo apt-get upgrade
```

Install the libraries and software needed for the camera stream. First, the system libraries:

```bash
sudo apt-get install libatlas-base-dev
sudo apt-get install libjasper-dev
sudo apt-get install libqtgui4
sudo apt-get install libqt4-test
sudo apt-get install libhdf5-dev
```

Then Python and the frameworks:

```bash
sudo pip3 install Flask
sudo pip3 install numpy
sudo pip3 install opencv-contrib-python
sudo pip3 install imutils
sudo pip3 install opencv-python
```

Clone the Camera Stream project:

```bash
git clone https://github.com/EbenKouao/pi-camera-stream-flask
```

Run the main script of the Camera Stream project:

```bash
sudo python3 /home/pi/pi-camera-stream-flask/main.py
```

This configuration went smoothly, and I could see the stream from the camera.

Setup TensorFlow¶

I have experience with machine learning and the different models for image processing. However, I had never worked with TensorFlow before, so I tried to follow the tutorial Get Started with Image Recognition Using TensorFlow and Raspberry Pi, which covers the basic components for image recognition with TensorFlow.

These are the steps I executed.

Install TensorFlow:

```bash
sudo pip3 install --user tensorflow
```

Create a test file named tftest.py to check that TensorFlow was configured correctly, with the following content:

```python
# tftest.py
import tensorflow as tf

hello = tf.constant('Hello, TensorFlow!')
tfSession = tf.Session()
print(tfSession.run(hello))
```

However, I could not run it because of an error in the TensorFlow installation. So I upgraded the framework and checked that it was installed correctly:

```bash
sudo pip3 install --upgrade tensorflow
pip3 show tensorflow   # shows whether the framework is installed
```

Then I could run tftest.py. However, it returned the following messages:

```
$ python3 tftest.py
libhdfs.so could not open shared object
tf.Session is deprecated. Uses tf.compat.v1.Session
```

Changing tf.Session() to tf.compat.v1.Session() removed the deprecation message.

Next, I installed the module that contains the datasets used by TensorFlow to build the model that performs the object detection:

```bash
sudo pip3 install tensorflow_datasets
```

The module tensorflow_datasets requires TensorFlow 2.1.0, so I upgraded the framework again, together with pip:

```bash
sudo pip install --upgrade pip
sudo pip install --upgrade tensorflow
```

I created a virtual environment just for testing. A virtual environment lets you install Python modules without affecting the whole operating system:

```bash
python3 -m venv --system-site-packages ./venv_tf
source ./venv_tf/bin/activate
```

Inside the virtual environment, I upgraded pip and TensorFlow and then ran a simple command to test the installation:

```bash
pip install --upgrade pip
pip install --upgrade tensorflow
python -c "import tensorflow as tf; print(tf.reduce_sum(tf.random.normal([1000, 1000])))"
```

The rest of the tutorial did not work well, and I had to find a better one that explains how to use TensorFlow.

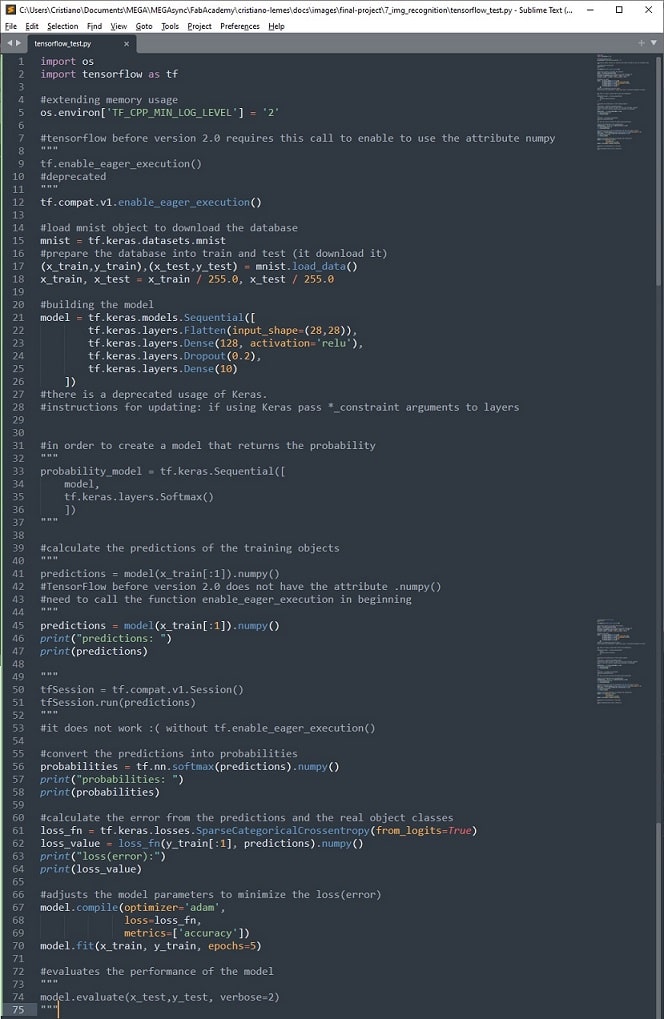

Beginning image processing¶

On the framework's main page there are many tutorials on how to use it. I followed the tutorial TensorFlow 2 quickstart for beginners. Again, this tutorial did not go very well:

- TensorFlow no longer provided the numpy attribute, which is essential in the tutorial because it is used to read the values of the predictions. After a long search, I found a workaround that let me continue with the tutorial.
- The tf.compat.v1.Session object no longer worked, due to version incompatibilities.
- The main problem, however, was a memory error. Apparently the process did not have enough memory, and it still failed after I extended the memory. I suspected the dataset was too big, but I decided to spend the time researching a better way to perform image processing with TensorFlow instead.

Here is the final code generated from this tutorial, including some comments.

Project to perform object detection¶

When I was losing hope and thinking about alternative solutions for the image processing in my final project, I found another tutorial that changed the way I was working. It was actually by accident: while looking for a solution to the memory problem, I found a code example that performs object detection on a Raspberry Pi using TensorFlow. The project pi-object-detection looked like a better solution, so I started to investigate its implementation. It turned out to be a great solution for the object detection in my final project: I used it to detect the object and then send the command to the microcontroller to catch it.

These are the steps I followed.

Start a Python virtual environment:

```bash
python3 -m venv --system-site-packages ./venv_tf
source ./venv_tf/bin/activate
```

Clone the project and run the install and run scripts from the root folder of the repository:

```bash
git clone https://github.com/RattyDAVE/pi-object-detection.git
cd pi-object-detection
/bin/sh install.sh
/bin/sh run.sh
```

The first time I tried, it did not work because the module tensorflow was not found. After running the following commands, I was able to execute the run script and see the object detection on the Raspberry Pi camera stream:

```bash
sudo pip3 install --user tensorflow
sudo pip3 install --upgrade tensorflow
sudo apt install libatlas-base-dev
pip3 install --user tensorflow
```

This code detects all the objects that appear in the camera image. The file camera_on.py contains the main code that performs the object detection. When it detects an object, it draws a box on the camera stream with the name of the detected object, together with the accuracy (score) of the model's prediction. After studying the code, I saw that it was easy to adapt for my final project: the object detection system only needs the id of the object to look for, and when this object is detected, it sends the command to the microcontroller to catch it.

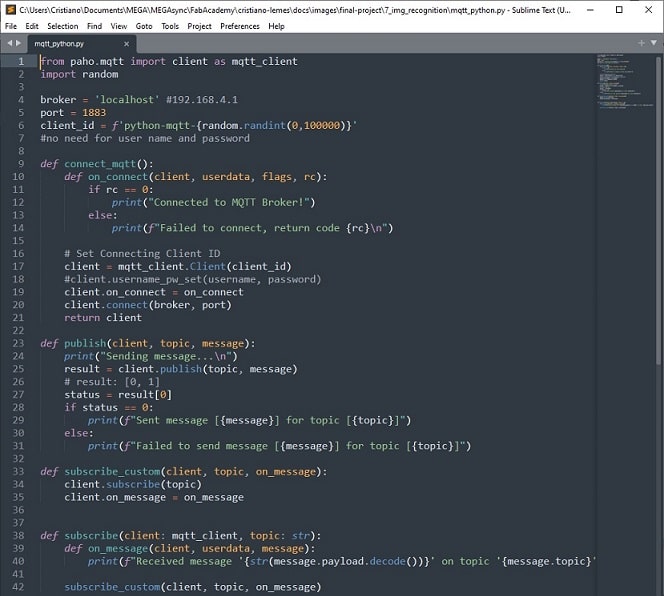

Setup MQTT for Python¶

Before moving forward with the project, I still needed to configure the MQTT module for Python, so that the object detection program could send commands to the microcontroller via the server. I found the tutorial How to use MQTT in Python, which explains very clearly how to use the paho-mqtt module.

I did the following steps.

Install the MQTT module for Python:

```bash
pip3 install paho-mqtt
```

To test it, I started python3 on the command line and ran the following instructions, which connected to the MQTT server and sent a message:

```python
from paho.mqtt import client as mqtt_client

broker_server, port = "192.168.4.1", 1883      # broker on the Raspberry Pi access point
topic, message = "MQTTRecognizeObject", "test"  # example topic and payload
client = mqtt_client.Client()  # paho-mqtt 1.x; 2.x also expects a CallbackAPIVersion
client.connect(broker_server, port)
client.publish(topic, message)
```

Then I wrote a Python file containing the functions needed by the object detection program:

- a function to connect to the MQTT server (connect_mqtt);
- two functions to subscribe to a topic: (i) one that only prints the message in the console (subscribe), and (ii) one that uses a custom function to handle the message (subscribe_custom);
- a function to publish messages to the server (publish).

With this module, it was possible to send messages via MQTT and to subscribe to topics on the server. Hence I could move forward and adapt the main file camera_on.py to receive, from the mobile application, the object to be caught, and to send a message to the arm to catch it when the object is found.

Testing MQTT integration¶

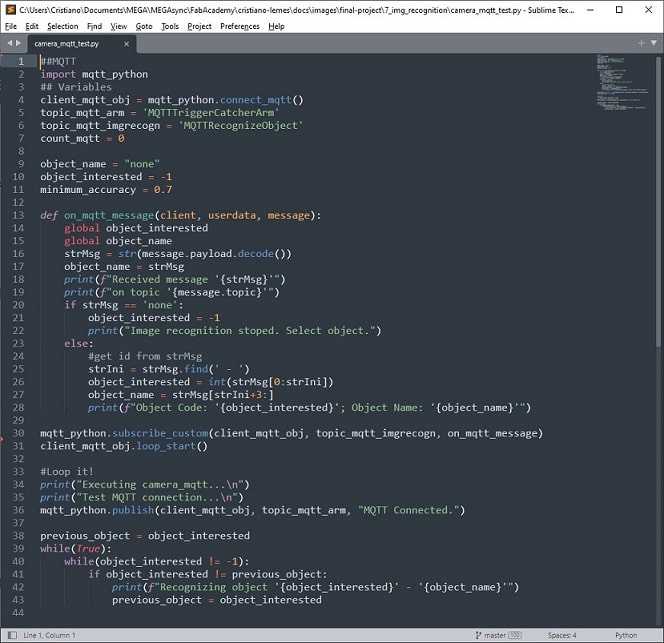

I had an issue integrating the MQTT module with the object detection module: when MQTT received a message, I needed to update a variable declared in the main process. I made several tests and created different functions trying to update that variable. Fortunately, with a friend's help, I saw that the solution was very simple: I just needed to declare the variable as global inside the function that handles the messages received via MQTT. Python then does not create a new local variable inside the function and instead uses the one declared in the main process. I wrote a simple program to test this solution.

- The mobile app sends the code (id) and the name of the object that the object detection should look for. In the function on_mqtt_message, which handles the messages received via MQTT, the message is split into two parts: the id and the name of the object. The id is stored in the variable object_interested, which is used later to check whether the model found the object in the camera stream. The name is assigned to object_name and is only used to print a message in the console.
- After subscribing to the topic with the handler on_mqtt_message, I had to add a call to the function loop_start(). This function starts the MQTT library's network loop in a background thread, which triggers the handler whenever a message arrives.
- I added a simple message to publish to the MQTT server.
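The global fix described above can be shown in a few lines. The handler uses paho-mqtt's on_message signature (client, userdata, msg), where msg.payload is bytes. The separator between id and name and the example payload 44:bottle are assumptions, since the text does not give the exact message format. The call at the end simulates a message instead of using a broker.

```python
from types import SimpleNamespace

SEPARATOR = ":"  # hypothetical: how the app joins the id and the name

object_interested = None  # class id the detector should look for
object_name = None        # object name, used only for console messages


def on_mqtt_message(client, userdata, msg):
    """Handler with paho-mqtt's on_message signature (client, userdata, msg)."""
    # Without this line, the assignments below would create new local
    # variables and the detector loop would never see the update.
    global object_interested, object_name
    obj_id, _, name = msg.payload.decode("utf-8").strip().partition(SEPARATOR)
    object_interested = int(obj_id)
    object_name = name.strip()
    print(f"Looking for {object_name} (id {object_interested})")


# Simulate a message from the app; with a real broker this would be wired as
# client.on_message = on_mqtt_message, client.subscribe(...), client.loop_start().
on_mqtt_message(None, None, SimpleNamespace(payload=b"44:bottle"))
print(object_interested, object_name)  # 44 bottle
```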

Object detection program¶

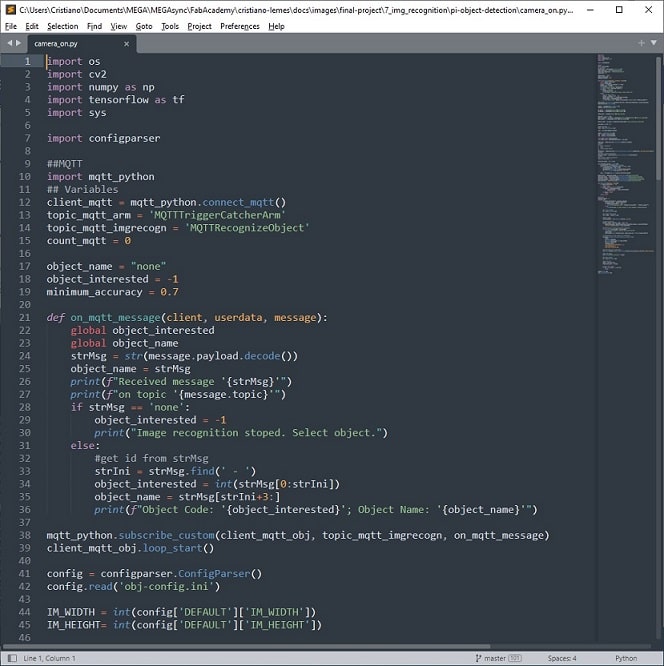

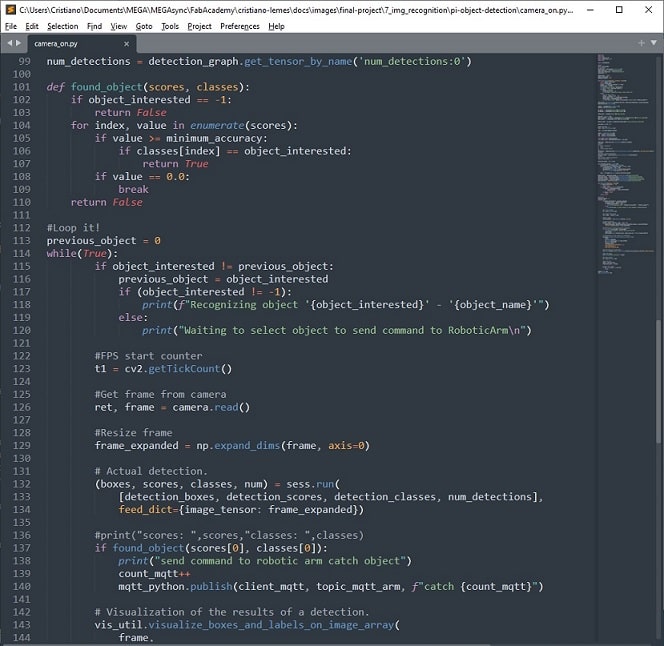

Now that I had all the parts ready, I updated the code of the object detection module, adding the MQTT communication. I reused most of the code from the project pi-object-detection and added the parts I needed to integrate it into the system of the final project.

- I added the code from the previous test to the main process of the object detection, except for the message published to the MQTT server.
- I created a function found_object that receives the scores and the classes of the objects detected by the machine learning model. I defined a variable minimum_accuracy equal to 0.7; if the accuracy (score) of a detected object is greater than or equal to it, the function checks the class of the object. If the class matches the one chosen by the user, it returns True; otherwise, it returns False.
- In the main loop, which processes the images from the camera stream, I added a check right after the model returns its results, calling found_object with the scores and classes found.
- I added a simple console message indicating which object the object detection module is looking for.
- I did not change the original content of camera_on.py from the pi-object-detection project; I only added the parts needed to integrate it into my final project. For more details about the implementation, I suggest checking the project's repository and its documentation.
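A minimal version of found_object as described above, with the 0.7 threshold from the text. Assumptions: scores and classes are parallel sequences with one entry per detection (TensorFlow detection models usually return them with an extra batch dimension, which would need to be removed first), class ids may arrive as floats and are therefore cast to int, and the target id is passed as a parameter instead of being read from the global object_interested.

```python
MINIMUM_ACCURACY = 0.7  # threshold from the text


def found_object(scores, classes, target_class, minimum_accuracy=MINIMUM_ACCURACY):
    """Return True if any detection of target_class scores at least minimum_accuracy.

    scores and classes are parallel sequences, one entry per detection.
    """
    for score, cls in zip(scores, classes):
        if score >= minimum_accuracy and int(cls) == target_class:
            return True
    return False
```

In the main loop, the check would be something like if found_object(scores, classes, object_interested): followed by publishing the catch command; the topic and payload of that command are not given in the text.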

New designs¶

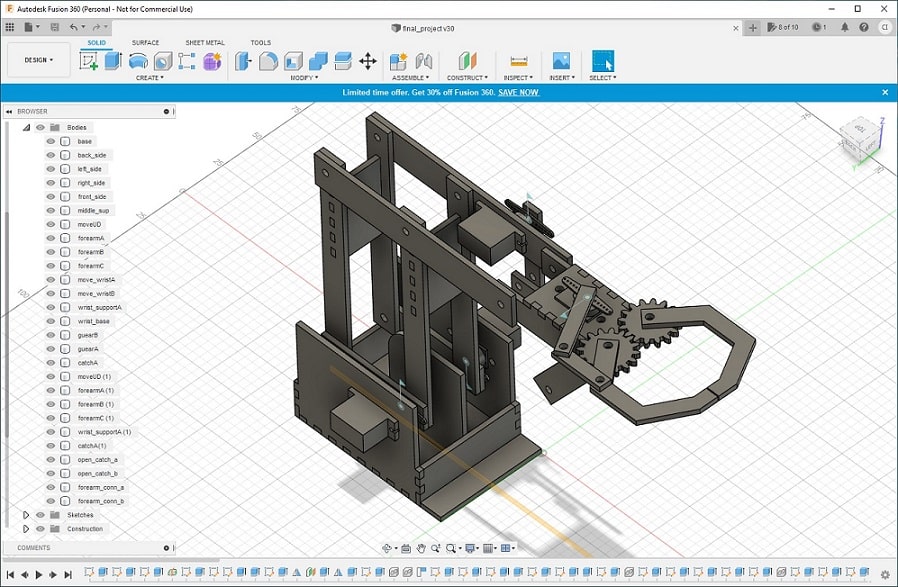

Design second prototype¶

The first prototype was made of cardboard, and I wanted to create a stronger arm. However, the drawings of the first prototype were too complex to modify for a new prototype, so I decided to design a new model, this time using 3D design software. I also changed the servo in this version: I used the MG90S, which is similar to the SG90 but has metal gears, which I thought would make it stronger.

During the course, I learned to use Fusion 360, and I even used it in some assignments to design parts of the final project. So I designed the entire arm in it, this time using parameters to enable quick changes, for example if the material is thicker or thinner.

To help design the arm, I used the Servo Motor MG90 model from the Fusion repository. I needed to place it in my design so that I could design the parts of the arm that attach to the servo.

I have provided many details about how to design components in previous assignments, for example Computer Aided design, Computer controlled cutting, and 3D Scanning and printing, to name a few. Below I show an animation of each step taken to design the arm; for more details on how to design it, please check the assignment pages that explain Fusion 360.

I decided to make this second prototype from 3mm wood, and the whole design was done for this material thickness. From the design I extracted the lines I would use to laser cut the parts of the arm; the process is explained in Computer controlled cutting. Making this new prototype involved a few different processes:

- The piece for the catcher could not be made with the laser cutter. It cannot be produced with a subtractive method and requires an additive one, such as 3D printing. Hence, I generated the STL files for the catcher only (using Fusion) and 3D printed them.

- Unfortunately, Fusion does not have a built-in feature for making gears. So, to design the gears I needed to move the catcher, I first made them in Inkscape (which has a good gear extension) and imported the drawing into the design. Then I extruded them to create the parts.

When I started to assemble all the pieces, I began to see some problems. For example, the piece attached to the side servo (which makes the up-and-down movement) was not aligned correctly with the opposite servo (front-and-back movement). I had to adjust them, and I also made some small changes to the pieces that move the catcher. Unfortunately, I made the screw holes bigger than I intended: I started the design with 5mm holes and forgot to change them before laser cutting the pieces. As I was running out of time, I decided to make the best of the pieces I had, but I will make a better prototype next time.

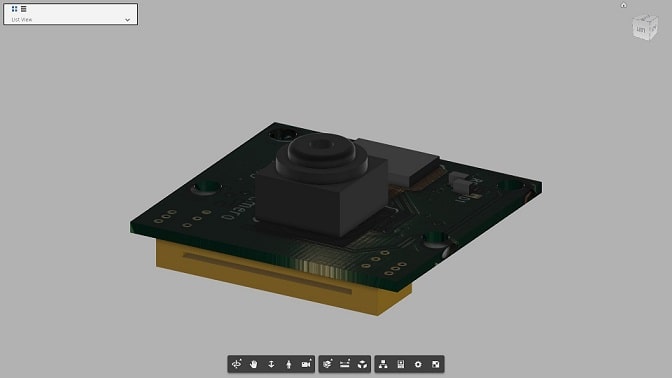

Design support camera¶

The Raspberry Pi camera does not have a support to keep it upright, so I created one. I used the Raspberry Pi Camera model from the Fusion repository as a reference for the design.

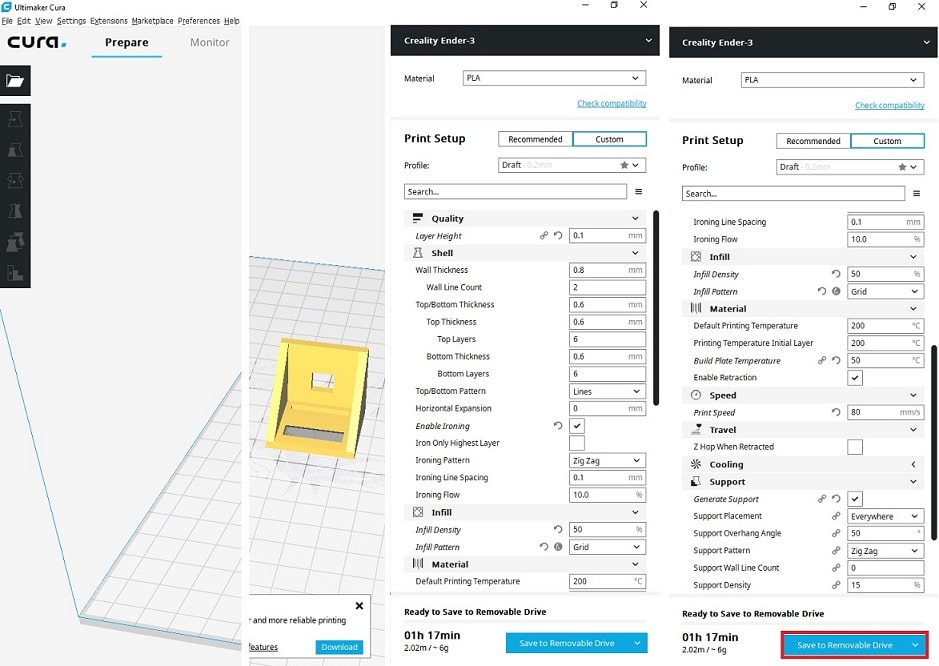

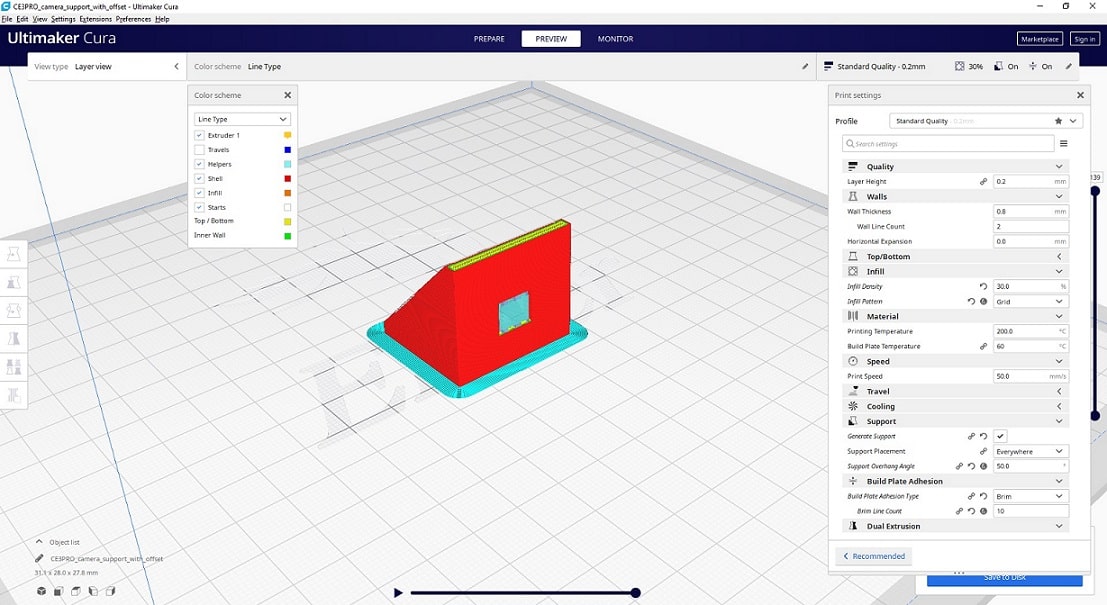

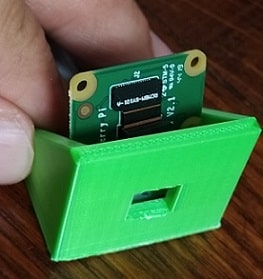

The 3D printing process was also covered before, in the 3D Scanning and printing assignment. Fusion can export the design as an STL file for 3D printing. Here I show the configuration of Cura, the software used to generate the file for the 3D printer: I simply added the exported file to Cura, adjusted the parameters, and saved the G-code to use in the printer.

I tried to match the dimensions of the support to the Raspberry Pi camera model, which had the same dimensions as the camera I was going to use in my project. However, I forgot to add a small offset in the drawing, and the camera did not fit the first time I 3D printed the support. I had to go back to the support design and adjust it, adding the offset and other dimensions of the Pi camera.

The final support fits the Raspberry Pi camera perfectly.
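The offset described above is simple arithmetic: a printed pocket drawn at exactly the board's size is usually too tight, so a small gap is added on each side. The sketch below assumes a clearance of 0.2 mm per side as a starting value; the right figure depends on the printer and filament, and the function name is hypothetical.

```python
def pocket_dimensions(board_width_mm, board_height_mm, clearance_mm=0.2):
    """Return (width, height) of a pocket that holds a board with some play.

    A gap of clearance_mm is added on every side, so each dimension grows
    by twice the clearance.
    """
    extra = 2 * clearance_mm  # one gap on each of the two opposite sides
    return board_width_mm + extra, board_height_mm + extra
```

For example, an illustrative 25 × 24 mm board with the default clearance needs a pocket of 25.4 × 24.4 mm.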

Programming robotic arm¶

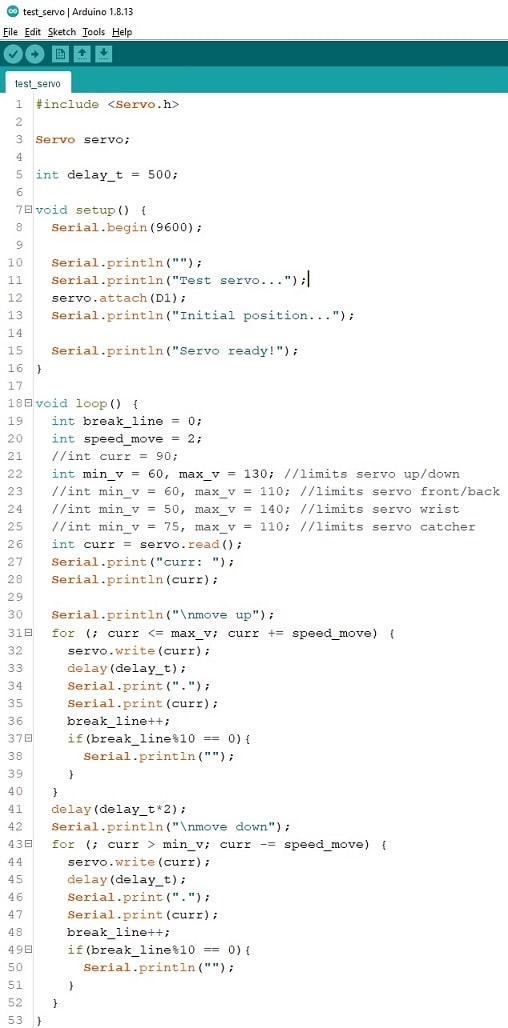

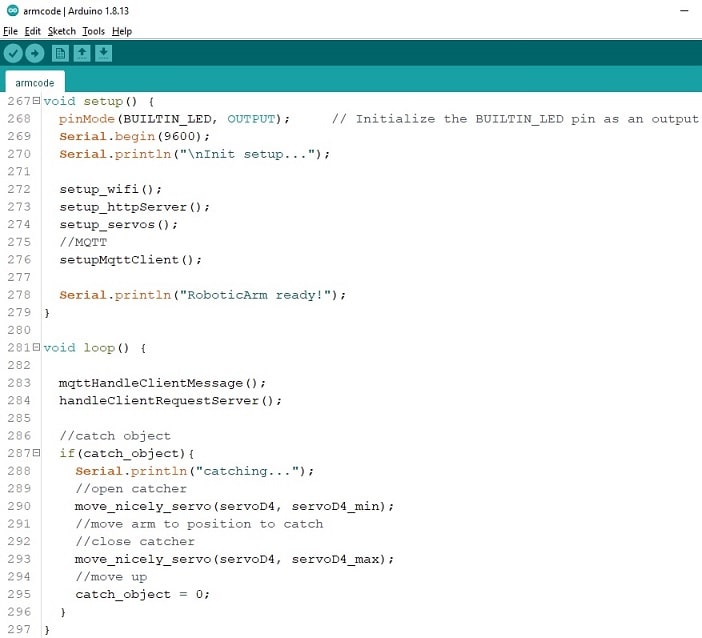

Testing servos¶

Before programming the robotic arm, I tested the servos to check whether they worked. Sometimes there are broken servos in our lab without anyone knowing, so it was important to make sure they were fine. I also used this process to learn the limits of each servo: once a servo reaches a certain value, it should not move further.
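A servo test of this kind can be sketched as a back-and-forth sweep that never leaves a given range, plus a clamp that keeps later commands inside the limits found. On the Wemos, each angle would be written with the Arduino Servo library's write() followed by a short delay; the function names, step size, and limits below are placeholders, since the real limits are what the test is meant to discover.

```python
def sweep_angles(min_angle, max_angle, step=10):
    """Angles for a back-and-forth test sweep inside [min_angle, max_angle]."""
    if step <= 0 or min_angle > max_angle:
        raise ValueError("need step > 0 and min_angle <= max_angle")
    forward = list(range(min_angle, max_angle + 1, step))
    if forward[-1] != max_angle:
        forward.append(max_angle)  # always reach the upper limit exactly
    return forward + forward[-2::-1]  # go up, then come back down


def clamp_angle(angle, min_angle, max_angle):
    """Keep a requested angle inside the limits found for a servo."""
    return max(min_angle, min(angle, max_angle))
```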

Testing all servos together¶

One of my concerns was the power supply. An electronics expert told me that the Wemos board would not provide enough power for all the servos, so I decided to test all of them together before moving forward.

To my surprise, this test did not go very well, not because of a lack of power, but because the arm was too heavy to perform some of the movements. The front-and-back movement, for instance, could not be executed: the servos were not strong enough to lift the arm. The Wemos and the servo almost burned out while I was testing, but fortunately I turned them off before anything happened. I even tried replacing the servos in the top part of the arm with a lighter version, but that did not help either.

To fix this problem, I should use a stronger servo on the side of the arm. To do that, I would need to go back to the design and work out how to draw the arm around this new component. Unfortunately, I did not have enough time to make this change, so I decided to continue with only the top part of the arm to finish integrating all the parts of the project. If I have time, I will make this change.

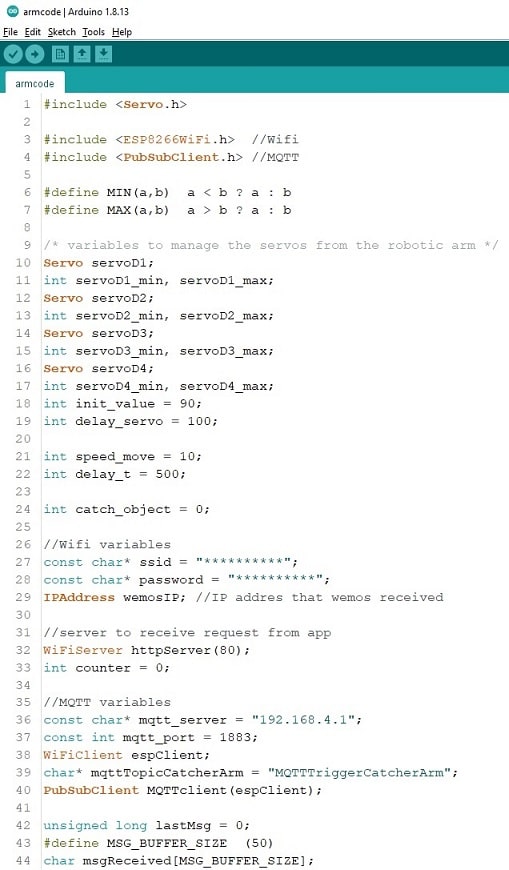

Programming arm¶

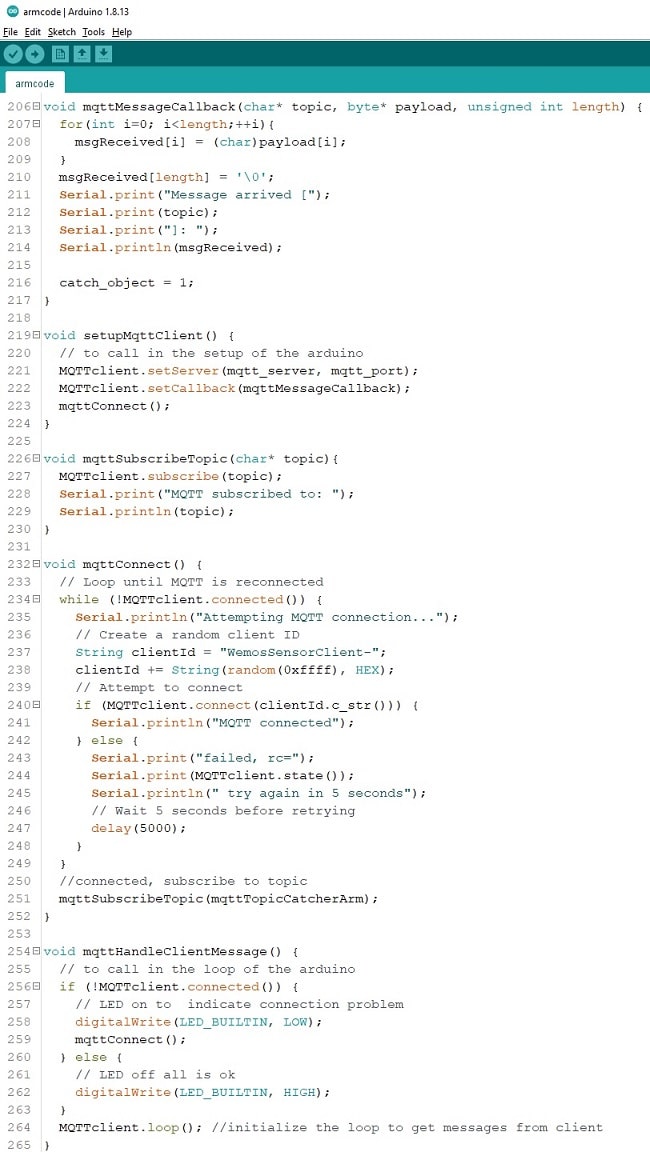

I tried to reuse as much code from the first prototype as possible. I had to make some small changes to the existing code and add new code for the MQTT communication with the object detection module. The arm subscribes to a topic named MQTTTriggerCatcherArm, which the object detection module uses to tell the arm to catch the object once it has been detected.

Inclusion of the necessary libraries and declaration of variables. I declared a variable, mqttTopicCatcherArm, holding the name of the topic to register on the MQTT server. I also declared a variable called catch_object so that the arm knows when it should catch the object.

An electronics expert told me that moving a servo immediately from one value to another could damage the component, so I decided to implement a function that moves the arm slowly until it reaches the desired position.
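Moving slowly can be sketched as generating the intermediate angles between the current and the target position. In the firmware, each angle would be written to the servo with a short delay in between; the function name, the step size, and the use of integer angles are assumptions of this sketch.

```python
def slow_move_steps(current, target, step=1):
    """Angles visited when moving from current to target at most `step` at a time."""
    if step <= 0:
        raise ValueError("step must be positive")
    angles = []
    position = current
    while position != target:
        if abs(target - position) <= step:
            position = target  # the last, smaller step lands exactly on target
        elif target > position:
            position += step
        else:
            position -= step
        angles.append(position)
    return angles
```

A smaller step (or a longer delay per step) gives a gentler movement at the cost of a slower arm.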

The function to set up the servos is simpler than in the first prototype: I removed all the variables that were used only once. This resulted in fewer lines of code (LoC) and code that is easier to understand. I used the slow-movement function described above to move the servos smoothly to their initial positions.

The functions that set up the WiFi and start the server receiving requests from the mobile application are very similar to the first version. I added a call to randomSeed(micros()) to initialize the microcontroller's random number generator, and I used a random number to create a unique client name for connecting to the MQTT server.
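The unique client name matters because an MQTT broker identifies clients by their ID, and when a second client connects with an ID that is already in use, the broker disconnects the first one. A common pattern in ESP8266 MQTT examples appends a random hexadecimal suffix to a fixed prefix; the Python sketch below shows the same idea, with a hypothetical prefix and an optional seeded generator standing in for randomSeed(micros()).

```python
import random


def make_client_id(prefix="WemosArm-", rng=None):
    """Build an MQTT client ID from a fixed prefix and a random hex suffix."""
    rng = rng or random.Random()  # unseeded: different ID on each boot
    return prefix + format(rng.randrange(0xFFFF), "x")
```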

Previously, the code that handles a client request was in the loop function. In this version, I created a dedicated function containing the same code, except for the URL that indicates which servo to move, which was adapted to the changes in the mobile application.
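Moving the request handling into its own function makes the routing easy to show in isolation. The sketch below uses an invented URL format (/move?servo=N&angle=A) because the app's real URLs are not given here; on the Wemos, the same parsing would be applied to the request line read from the connected client.

```python
from urllib.parse import parse_qs, urlparse


def parse_servo_command(url):
    """Return (servo_index, angle) from a request such as '/move?servo=2&angle=90'.

    The path and parameter names are hypothetical. Returns None when the
    request is not a valid move command.
    """
    parts = urlparse(url)
    if parts.path != "/move":
        return None
    query = parse_qs(parts.query)
    try:
        servo = int(query["servo"][0])
        angle = int(query["angle"][0])
    except (KeyError, ValueError):
        return None  # missing or non-numeric parameter
    return servo, angle
```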

The functions needed to connect to the MQTT server and receive messages are defined next. In mqttConnect(), once connected to the MQTT server, the microcontroller subscribes to the topic that carries the signal from the object detection module to catch the object. The function mqttMessageCallback is executed whenever the microcontroller receives a message: it simply prints the message to the serial port and sets the catch_object flag to true. The function mqttHandleClientMessage() triggers the MQTTClient listener so that messages sent via the server are processed.
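The callback pattern can be sketched as a small state holder: the callback only logs the message and raises the flag, and the arm movement happens later in the main loop, so the callback returns quickly. The class and method names are hypothetical, and the topic check is an extra safeguard added in this sketch; with a single subscribed topic it makes no difference.

```python
class ArmMqttState:
    """Flag-based hand-off between the MQTT callback and the main loop."""

    def __init__(self, topic="MQTTTriggerCatcherArm"):
        self.topic = topic
        self.catch_object = False

    def on_message(self, topic, payload):
        # Keep the callback short: log the message and raise the flag.
        print(f"Message arrived [{topic}]: {payload!r}")
        if topic == self.topic:
            self.catch_object = True
```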

Because the code is well structured, the setup and loop functions are much simpler than in the first version. Once the arm receives the signal to catch the object (flag catch_object = 1), the loop function triggers the movements of the arm to catch it. The object should be positioned about 10cm away for the arm to be able to catch it.
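One pass of such a loop can be sketched as below. The state object is anything with a catch_object attribute set by the MQTT callback, move_to stands in for the slow servo movement, and catch_sequence is a hypothetical list of (servo, angle) poses; the real poses depend on the arm's geometry.

```python
def loop_once(state, move_to, catch_sequence):
    """Run the catch sequence once if the flag is set; return True if it ran.

    state: object with a boolean catch_object attribute
    move_to: callable(servo, angle) that moves one servo
    catch_sequence: list of (servo, angle) poses, e.g. open, lower, close, lift
    """
    if not state.catch_object:
        return False
    state.catch_object = False  # clear the flag so one message triggers one catch
    for servo, angle in catch_sequence:
        move_to(servo, angle)
    return True
```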

The next step of the development is to place a distance sensor on the front of the arm so that, when it receives the signal to catch the object, it can move close enough to the object to catch it.
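Since this step is still a plan, the sketch below only shows one way the approach loop could look. It assumes a distance reading in centimetres and some way of moving closer, both passed in as hypothetical callables; the 10 cm target is the catching distance mentioned above, and max_steps is an assumed safety limit so the search stops if the object is never reached.

```python
def approach_object(read_distance_cm, step_forward, target_cm=10.0, max_steps=50):
    """Move towards the object until it is within target_cm; return True on success."""
    for _ in range(max_steps):
        if read_distance_cm() <= target_cm:
            return True  # close enough: the catch sequence can start
        step_forward()
    return read_distance_cm() <= target_cm
```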

Download the ZIP file containing the two files shown here, test servos and armcode.

All modules from the project¶

Here is a video showing all the modules working together: the user selects the type of object to be caught, the object detection module sends a signal to the arm when the object is found, and the arm catches the object.

Presentation final project¶

License¶

Attribution-NonCommercial-ShareAlike license. This license allows reusers to distribute, remix, adapt, and build upon the material in any medium or format for noncommercial purposes only, and only so long as attribution is given to the creator. If you remix, adapt, or build upon the material, you must license the modified material under identical terms.

Download and BOM¶

Here is the average price of each component:

- 4 servos: 5€ each, 20€ in total

- Raspberry Pi

- camera

- Wemos microcontroller

- distance sensor

- wood: 3€ (50x30 cm and 3mm thickness)

- screws: 3€ (package of screws)

Acknowledgment¶

This project was made possible thanks to Ingegno Maker Space and Dekimo Experts Gent. I sincerely thank both of them for giving me the opportunity to be part of FabAcademy 2021 and for their great support while I carried out the activities of the course.