Week 15 Interface and Application Programming

This week we were charged with the following assignments:

individual assignment:

- write an application that interfaces a user with an input &/or output device that you made

group assignment:

- compare as many tool options as possible

As I am remote, I will complete the group assignment on my own.

Hero Image

First steps

I started out working through the examples on this week’s page. The number and variety of them is overwhelming. After spending a few days working through them, I realized that reviewing every example was not a good use of time and that I needed to actually make something.

I figured that the best place to start would be in visualization. I reviewed the examples in graphics/visualizations and reviewed Nadieh’s documentation (link).

I then gave ChatGPT the following prompt:

Use the following programs as templates, create examples of a bouncing ball

complete in canvas, svg, webgl, three.js

<html>

<head>

<title>canvas line test</title>

</head>

<body>

<div id="div" style="position:absolute;top:0%;height:100%;left:0%;width:100%;text-align:center;">

<canvas id="canvas"></canvas>

</div>

<script type="text/javascript">

//

// canvasline.html

// Neil Gershenfeld 1/28/17

// demonstrates drawing canvas lines by animating sin(k*x)/k*x

//

var div = document.getElementById("div")

var height = div.clientHeight

var width = height

var canvas = document.getElementById("canvas")

canvas.width = width

canvas.height = height

var npts = 500

var nloop = 100

var loop = 1

var step = 1

var linewidth = 0.005

var dtms = 10

var ctx = canvas.getContext("2d")

ctx.lineWidth = linewidth*width

window.setInterval("animate()",dtms);

function animate() {

var x,y,r

ctx.clearRect(0,0,width,height)

ctx.beginPath()

point = 0

r = 100*(2*point-npts)/(npts*nloop)

x = width*point/npts

y = height*(1-Math.sin(loop*r)/(loop*r))/2

ctx.moveTo(x,y)

for (var point = 1; point < npts; ++point) {

r = 100*(2*point-npts)/(npts*nloop)

x = width*point/npts

y = height*(1-Math.sin(loop*r)/(loop*r))/2

ctx.lineTo(x,y)

}

ctx.stroke()

loop += step

if ((loop == 1) || (loop == nloop))

step = -step

}

</script>

</body>

or using svg

https://fabacademy.org/2026/classes/interface_application_programming/programs/svgline.html

<html>

<head>

<title>svg line test</title>

</head>

<body>

<div id="div" style="position:absolute;top:0%;height:100%;left:0%;width:100%;text-align:center;">

<svg id="svg" xmlns="http://www.w3.org/2000/svg" version="1.1"

viewBox="0 0 1 1">

</svg>

</div>

<script type="application/javascript">

//

// svgline.html

// Neil Gershenfeld 1/28/17

// demonstrates drawing SVG lines by animating sin(k*x)/k*x

//

var div = document.getElementById("div")

var height = div.clientHeight

var width = height

var svgns = "http://www.w3.org/2000/svg"

svg = document.getElementById("svg")

svg.setAttribute("width",width)

svg.setAttribute("height",height)

var npts = 1000

var nloop = 100

var loop = 1

var step = 1

var linewidth = 0.005

var dtms = 10

window.setInterval("animate()",dtms)

line = document.createElementNS(svgns,"polyline")

line.setAttribute("stroke-width",linewidth)

line.setAttribute("stroke","black")

line.setAttribute("fill","none")

svg.appendChild(line)

function animate() {

var x1,y1,x2,y2,r1,r2

var points = ""

for (var point= 0; point < npts; ++point) {

r1 = 100*(2*point-(npts+1))/(npts*nloop)

x1 = point/npts

y1 = (1-Math.sin(loop*r1)/(loop*r1))/2

r2 = 100*(2*(point+1)-(npts+1))/(npts*nloop)

x2 = (point+1)/npts

y2 = (1-Math.sin(loop*r2)/(loop*r2))/2

points += x1+','+y1+' '+x2+','+y2+' '

}

line.setAttribute("points",points)

loop += step

if ((loop == 1) || (loop == nloop))

step = -step

}

</script>

</body>

</html>

The result is four different pages that each make a ball move:

HTML Canvas Example

Link to four files

I reviewed the responses to get an understanding of how they work. Pretty cool. I think that I prefer the HTML canvas.
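All four bouncing-ball pages share the same core idea: move the ball by its velocity each frame and flip the velocity when it reaches a wall. The following is a minimal sketch of that update step, not the code from the generated files; `createBall` and `stepBall` are hypothetical names chosen for illustration.

```javascript
// Minimal bouncing-ball update step (a sketch, not the generated pages).
// createBall and stepBall are hypothetical helper names.
function createBall(x, y, vx, vy, radius) {
  return { x, y, vx, vy, radius };
}

// Advance the ball by one frame and reflect it off the walls of a
// width-by-height box. Returns a new ball object.
function stepBall(ball, width, height) {
  let { x, y, vx, vy, radius } = ball;
  x += vx;
  y += vy;
  // Left/right walls: clamp the position and reverse horizontal velocity.
  if (x - radius < 0) {
    x = radius;
    vx = -vx;
  } else if (x + radius > width) {
    x = width - radius;
    vx = -vx;
  }
  // Top/bottom walls: clamp the position and reverse vertical velocity.
  if (y - radius < 0) {
    y = radius;
    vy = -vy;
  } else if (y + radius > height) {
    y = height - radius;
    vy = -vy;
  }
  return { x, y, vx, vy, radius };
}

// In a browser, each animation frame would then clear and redraw, e.g.:
//   ctx.clearRect(0, 0, width, height);
//   ctx.beginPath();
//   ctx.arc(ball.x, ball.y, ball.radius, 0, 2 * Math.PI);
//   ctx.fill();
```

Only the drawing calls differ between canvas, SVG, WebGL, and three.js; the update step can stay the same.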

I then reviewed my notes on MQTT from week 11. Link to that page.

I opened Neil’s examples of MQTT, which is where I wanted to start.

hello.MQTT-blink.C3.py, hello.MQTT-blink.C3.html, and video.

I used chatgpt to explain how Neil’s example worked. The prompt I used is:

I am learning how to program interfaces applications as part of fab academy. I would like to experiment with reading sensor data from a camera and post this information to be viewed. I would like to use an XIAO ESP32 C3 sense board, python (or micropython) and MQTT. I would first like to understand how this program works. Explain its functionality, identifying each part and explaining what that part does in terms of the overall functionality of the program. My goal is to better understand how to work with MQTT. (and posted Neil’s # hello.MQTT-blink.C3.py).

I read through the response until I understood how the MQTT script worked, so that I could use it as a basis for visualizing data served from the Fab Academy MQTT server.

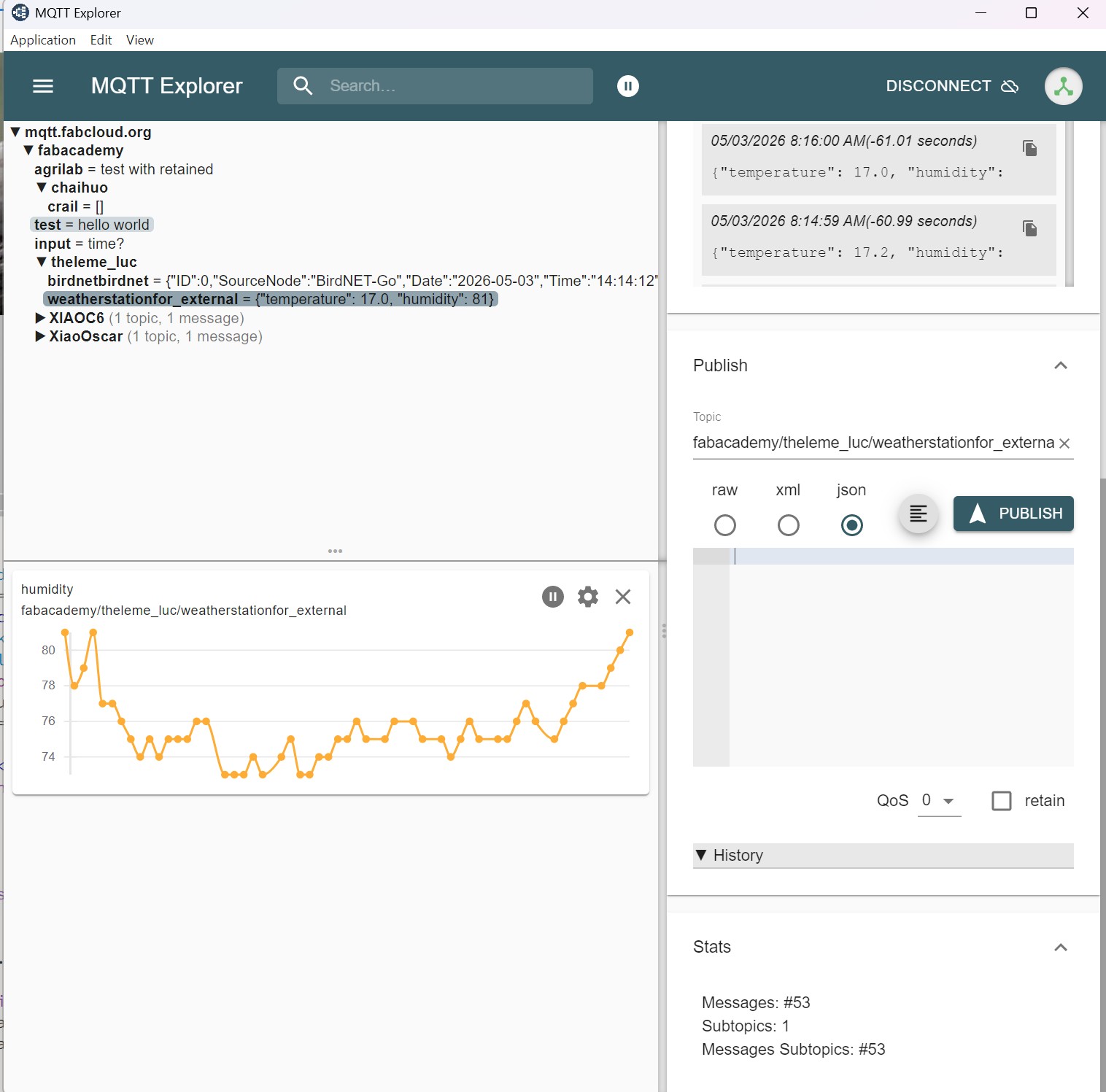

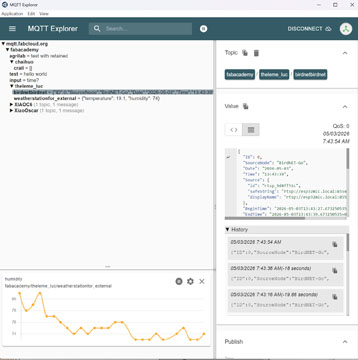

I first opened MQTT Explorer (link to my discussion of it) and selected Luc’s data.

I used the following prompt to create a test to just read the data that Luc's sensor was posting.

I would like to revise these bouncing ball programs to visualize data posted to an MQTT server. Ask me questions to clarify my goals and the context of my request. My ultimate goal is to create a project that posts data to an MQTT server and then has other interfaces that read this data. The information regarding the MQTT server is: Based on https://mosquitto.org/ server: mqtt.fabcloud.org port: 1883 username: XXX password: XXX channel/topic: fabacademy/theleme_luc/birdnetbirdnet Websocket support: wss://mqtt.fabcloud.org:8083

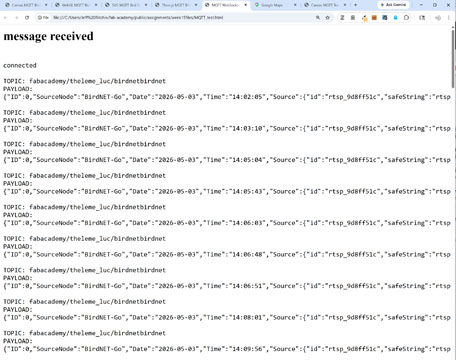

I ran into problems subscribing to the topic and had to work out how to subscribe. I worked with chatgpt to first create a test of the MQTT subscriber program and settings, before I worked on creating visualizations.

The first thing I found out was that an MQTT broker doesn’t necessarily allow browsers to connect.

Browser clients cannot use ordinary MQTT/TCP directly, so they need Mosquitto WebSocket support.

The files chatgpt and I created rely on the Paho JavaScript client, loaded from "https://unpkg.com/paho-mqtt@1.1.0/paho-mqtt.js". Paho.Client is the JavaScript object used in the sketch to create an MQTT client in the browser. In the Eclipse Paho MQTT library, Paho.Client represents: “A client that connects to an MQTT broker, subscribes to topics, and sends/receives messages over WebSockets.”

Paho.Client is a browser-side MQTT client that:

- acts as a subscriber.

- reads the data and posts it as text on the page. Success.
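The subscriber flow described above can be sketched roughly as follows. This is an illustration under assumptions, not the test page itself: `startSubscriber`, `settings`, and `showText` are hypothetical names, and the Paho library is passed in as `PahoLib` (in the browser this would be the global `Paho` object) so the function can be tried outside a page.

```javascript
// Sketch of a browser-side subscriber built on Paho.Client.
// PahoLib: the global Paho object from paho-mqtt.js (passed in explicitly).
// settings: hypothetical object { host, port, path, user, password, topic }.
// showText: hypothetical callback that puts one line of text on the page.
function startSubscriber(PahoLib, settings, showText) {
  // A random suffix keeps client IDs from colliding on the broker.
  const clientId = "test_" + Math.random().toString(16).substring(2);
  const client = new PahoLib.Client(
    settings.host,
    settings.port,
    settings.path,
    clientId
  );

  // Called by Paho for every message on a subscribed topic.
  client.onMessageArrived = function (message) {
    showText(message.destinationName + ": " + message.payloadString);
  };

  // Called by Paho when the connection drops.
  client.onConnectionLost = function (response) {
    showText("connection lost: " + response.errorMessage);
  };

  client.connect({
    userName: settings.user,
    password: settings.password,
    useSSL: true, // use wss:// rather than ws://
    timeout: 5,
    onSuccess: function () {
      // Subscribe only once the connection is established.
      client.subscribe(settings.topic);
      showText("subscribed to " + settings.topic);
    },
    onFailure: function (err) {
      showText("connect failed: " + err.errorMessage);
    },
  });

  return client;
}
```

On a page, `showText` might simply append a line to a `<pre>` element, and `settings.port` would be the broker's WebSocket port rather than 1883.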

In working with chatgpt, I created a test file just to make sure that the MQTT subscription would work. The html page that I created using chatgpt was:

link to basic MQTT test program.

I then used the different methods in my earlier bouncing ball tests to visualize the data a little better. I used HTML Canvas, SVG, WebGL, and three.js.

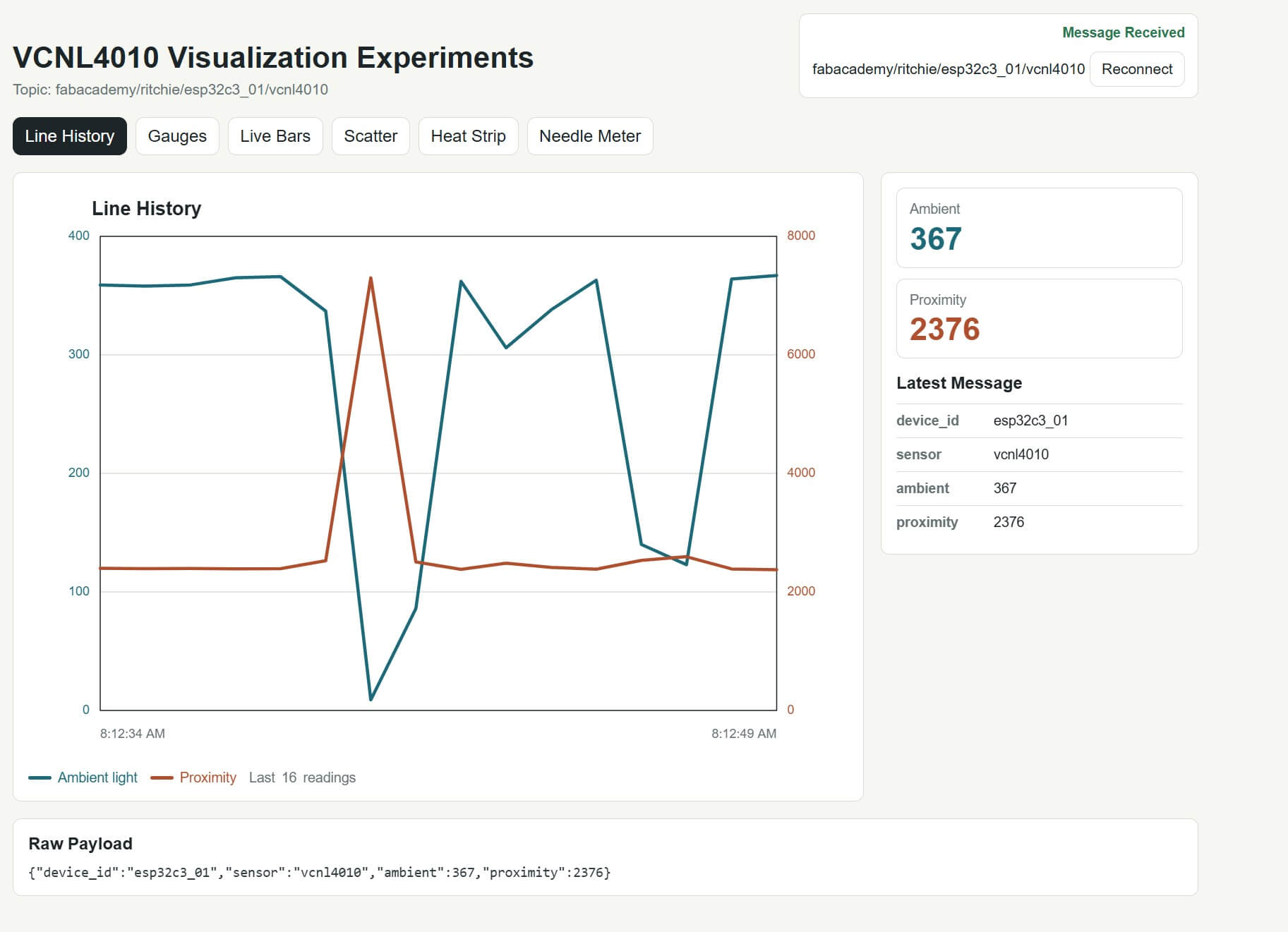

To build out the visualization of the data, I decided to work with an AI tool that is new to me: OpenAI's Codex (link here).

The prompt I used was

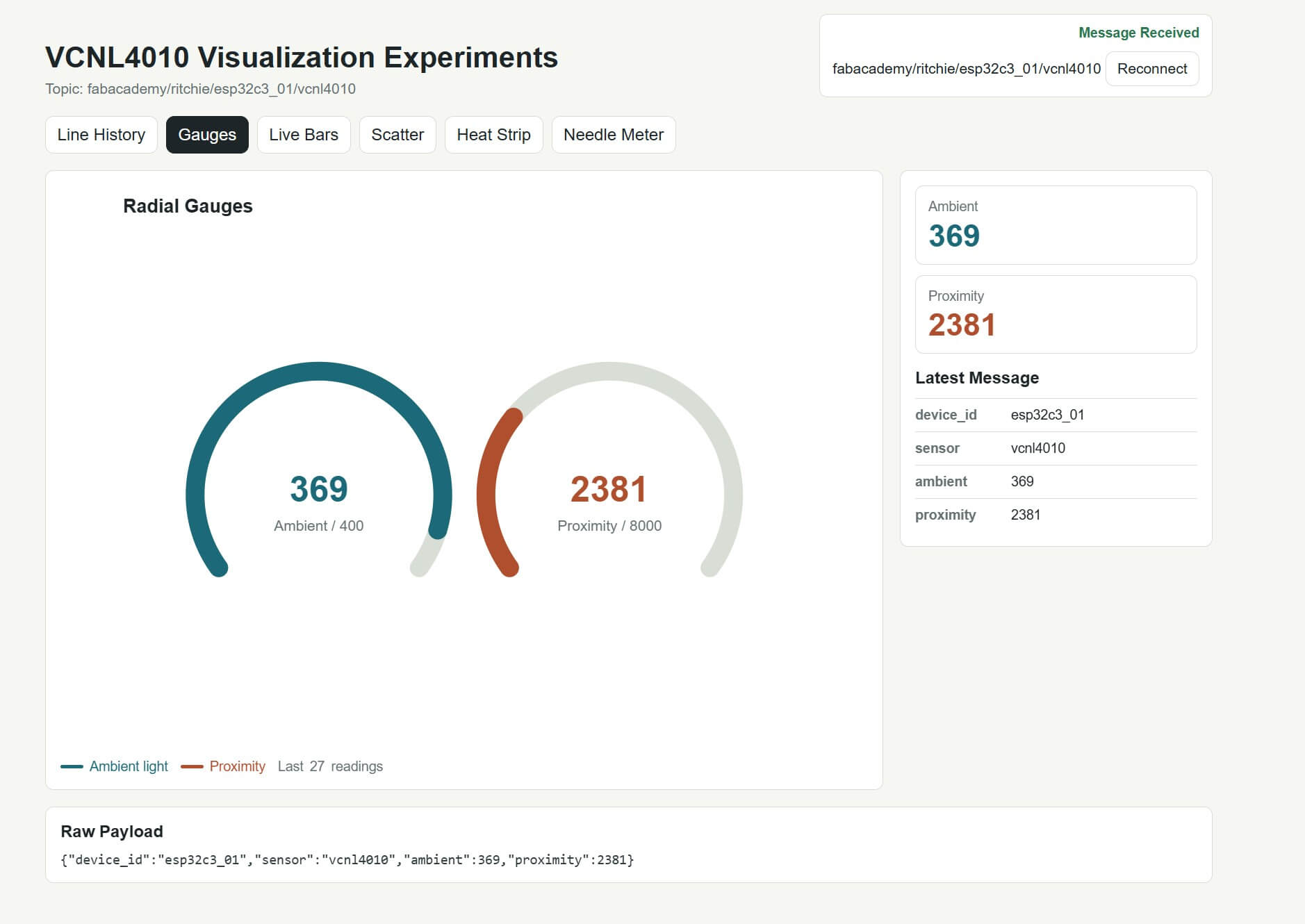

I'm now using the adafruit VCNL4010 sensor with an esp32c3. I've uploaded the following program to the board #include #include "Adafruit_VCNL4010.h" Adafruit_VCNL4010 vcnl; void setup() { Serial.begin(115200); Serial.println("VCNL4010 test"); if (! vcnl.begin()){ Serial.println("Sensor not found :("); while (1); } Serial.println("Found VCNL4010"); } void loop() { Serial.print("Ambient: "); Serial.println(vcnl.readAmbient()); Serial.print("Proximity: "); Serial.println(vcnl.readProximity()); delay(1000); } The board primxity seems reversed- it gives higher numbers when I place an object on the sensor- however the ambiet decreases teh closer an object is it- indicating perhaps that hte light is being blocked.

instead, I would like to post this information to the mqtt server that we had been using earlier.

change the topic. Include a new directory- ritchie and then a directory within that - ambientandproximity

i plan to publish other sensors.

I may work with mulitple esp 32 devices. I do need to build a dashboard. Timestamp from broker would be nice.

The topic paths would then be:

fabacademy/ritchie/esp32c3_01/vcnl4010

fabacademy/ritchie/esp32c3_01/vcnl4010/ambient

fabacademy/ritchie/esp32c3_01/vcnl4010/proximity

For this code to work, we need both an Arduino program running on the ESP32 that publishes to the MQTT server and a web page that subscribes to this topic. I started with the following test file, which just prints the data pulled from the sensor to serial (VCNL4010 Test). The programs I co-wrote are:

- The Arduino sketch that I co-created with ChatGPT: Publish to MQTT

- A secrets.h file, which contains sensitive information. I opted not to share that file but instead share this example (see this file). For a further discussion of secrets.h files, see Adafruit's discussion of secrets files.

- The three subscriber/visualization web pages I created were:

Ionic visualization

MQTT HTML Visualization

React Visualization

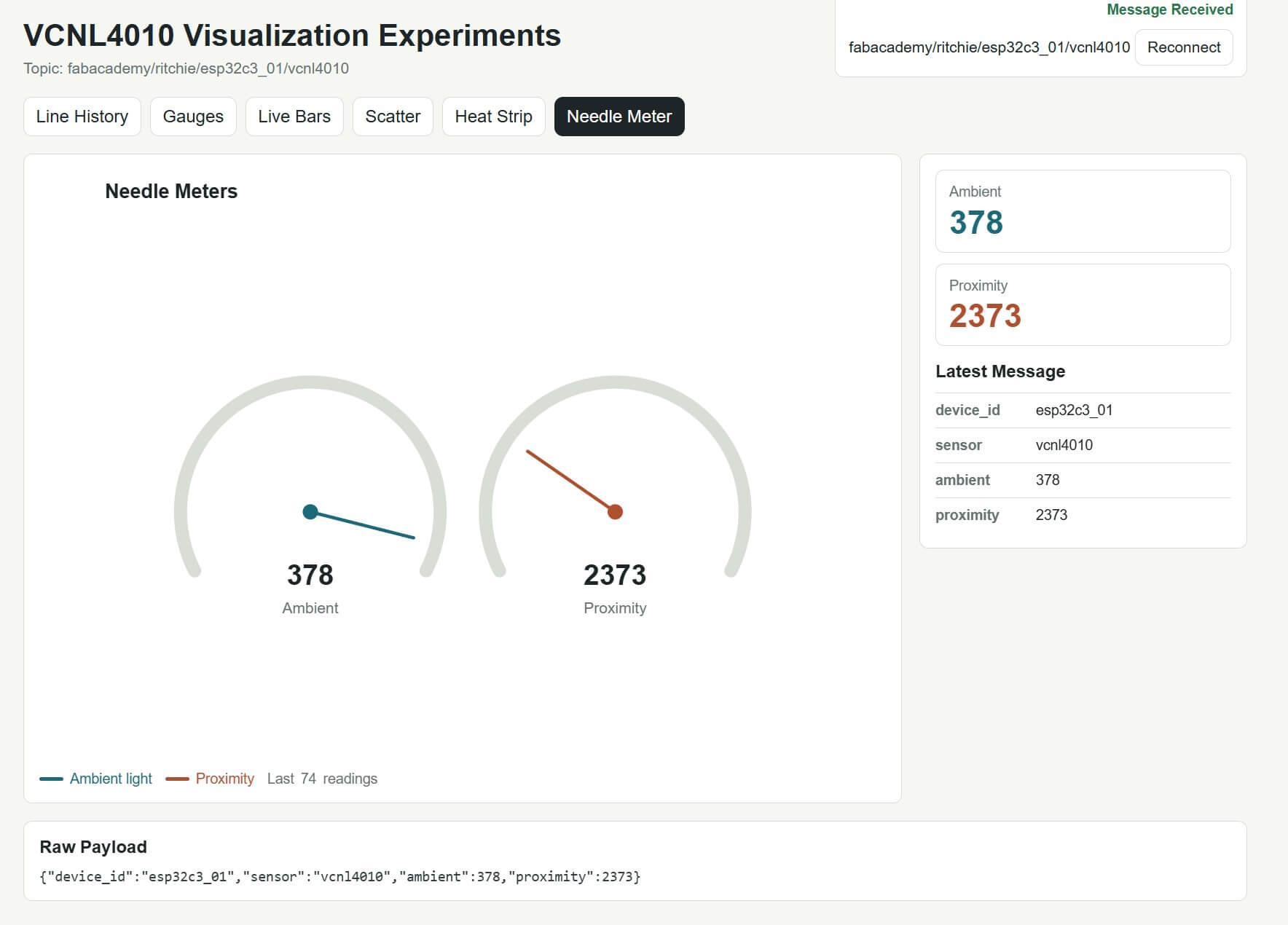

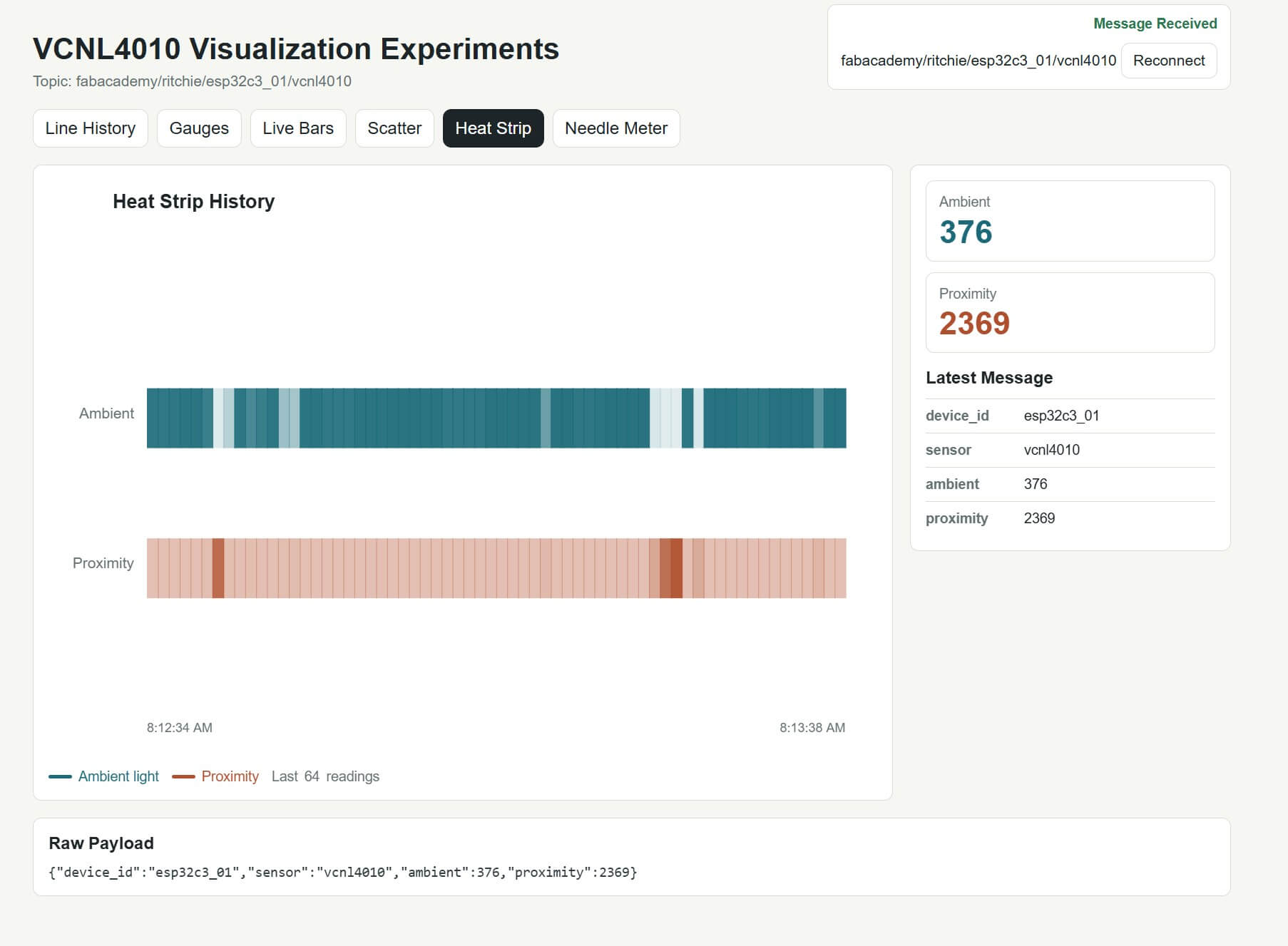

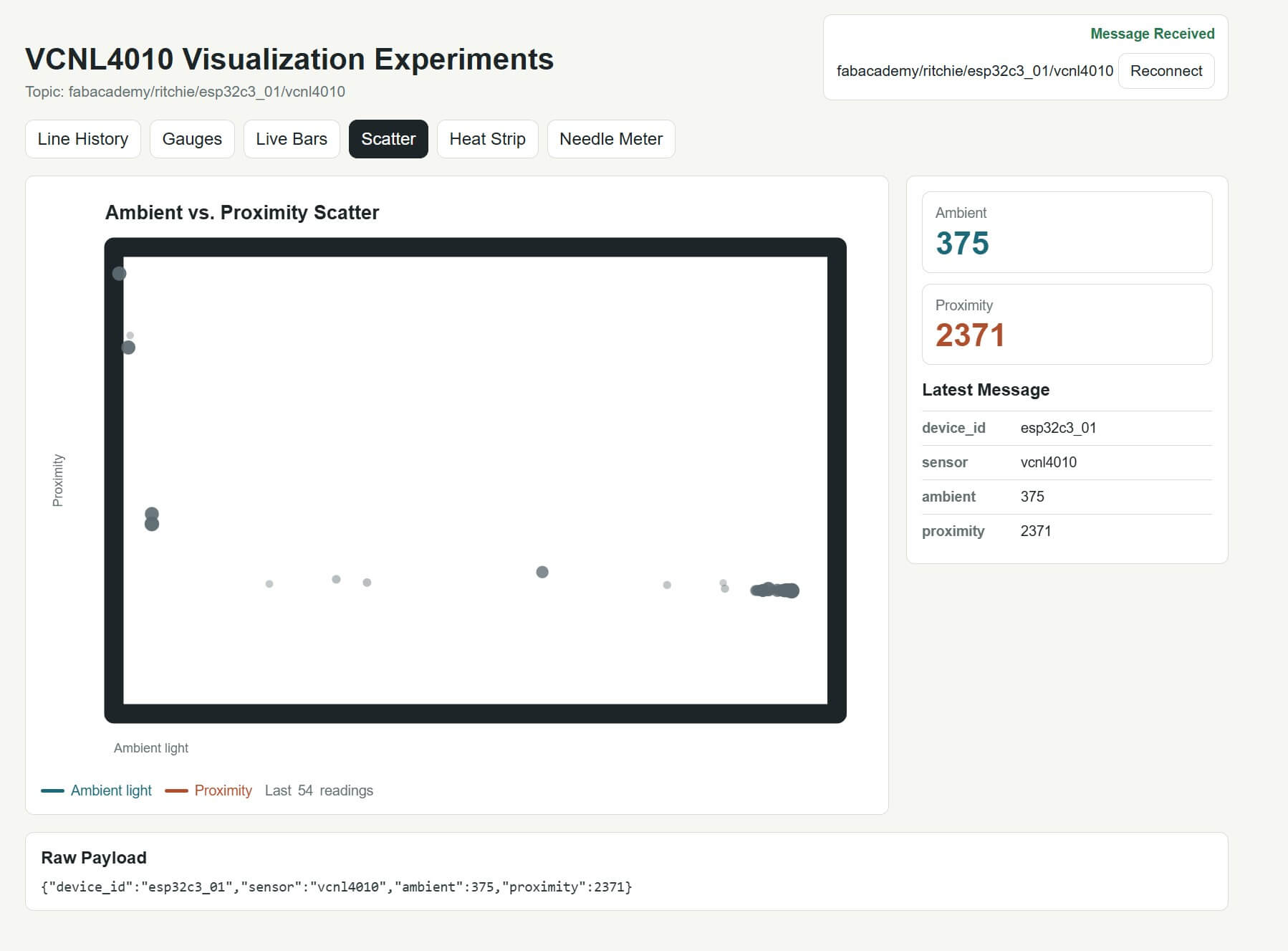

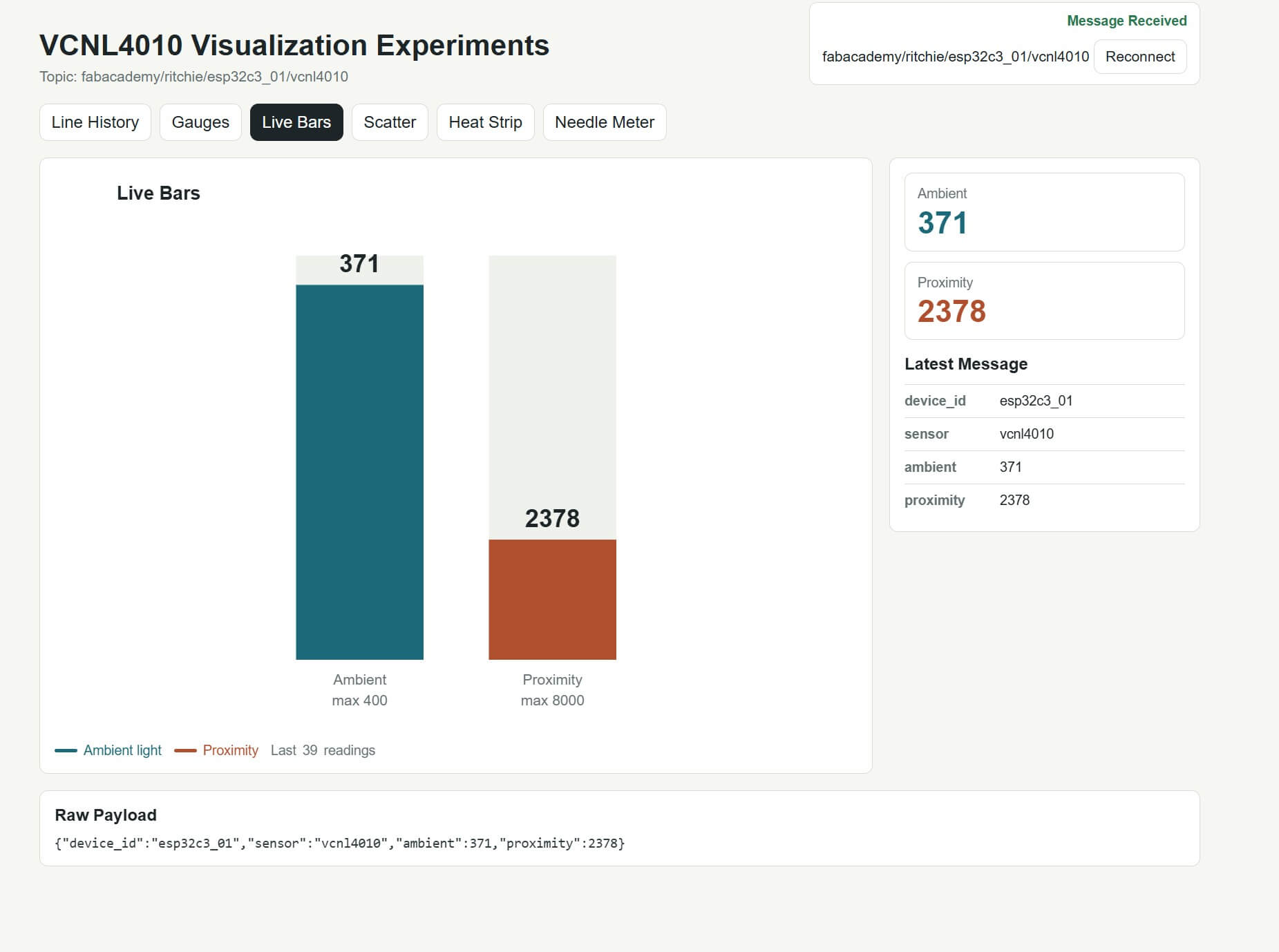

I then played around with adding more forms of visualization- in this case, I wanted to experiment with different means of visualizing the data from the VCNL sensor, but keep it on the same page. I used codex and used this prompt:

"Let's experiment with different ways to visualize this data."

This page subscribes to the same VCNL4010 MQTT topic as the original

dashboard and lets the viewer switch between multiple visualization modes.

I am, again, so very impressed by the power of these AI authoring tools. I created a webpage that allows the user to tab between different visualizations of the data. The goal was not to create a highly usable interface, but rather to experiment with how the visualizations might work with the proximity and ambient light data that I was publishing.

This is the file that I created using codex.

VCNL4010 visualization experiment

Finally, I added the functionality of the LED program (see below) to the visualization experiments I had worked through.

The prompt I used was:

now create a new HTML document that is based on the the VCNL4010visualizationexperiment file, but also adds the functionality of the xiaoesp32cMMQTT program.

This page subscribes to the same VCNL4010 MQTT topic as the original

dashboard, lets the viewer switch between multiple visualization modes,

and publishes LED on/off commands for the XIAO ESP32C3.

Now, I would like to create a new html page that runs a program that uses an interface that allows me to press a button on the webpage and the XIAO esp32c3 LED turns on or off.

please create the ino sketch that would control the led on the esp32c3

Revise this new program so that it continues to read and post data from the VCNL4010 sensor AND allows the user to turn on or off the led.

the led is connected to pin d0

make the button a toggle- on/off button rather than have two different buttons

After a bit of revising and working with Codex, this is the result:

The HTML file publishes on/off commands to the MQTT server and subscribes to the data that the VCNL4010 and XIAO ESP32C3 publish.

The Arduino file runs on the XIAO ESP32C3, which controls and reads the VCNL4010 sensor and publishes to the MQTT server.

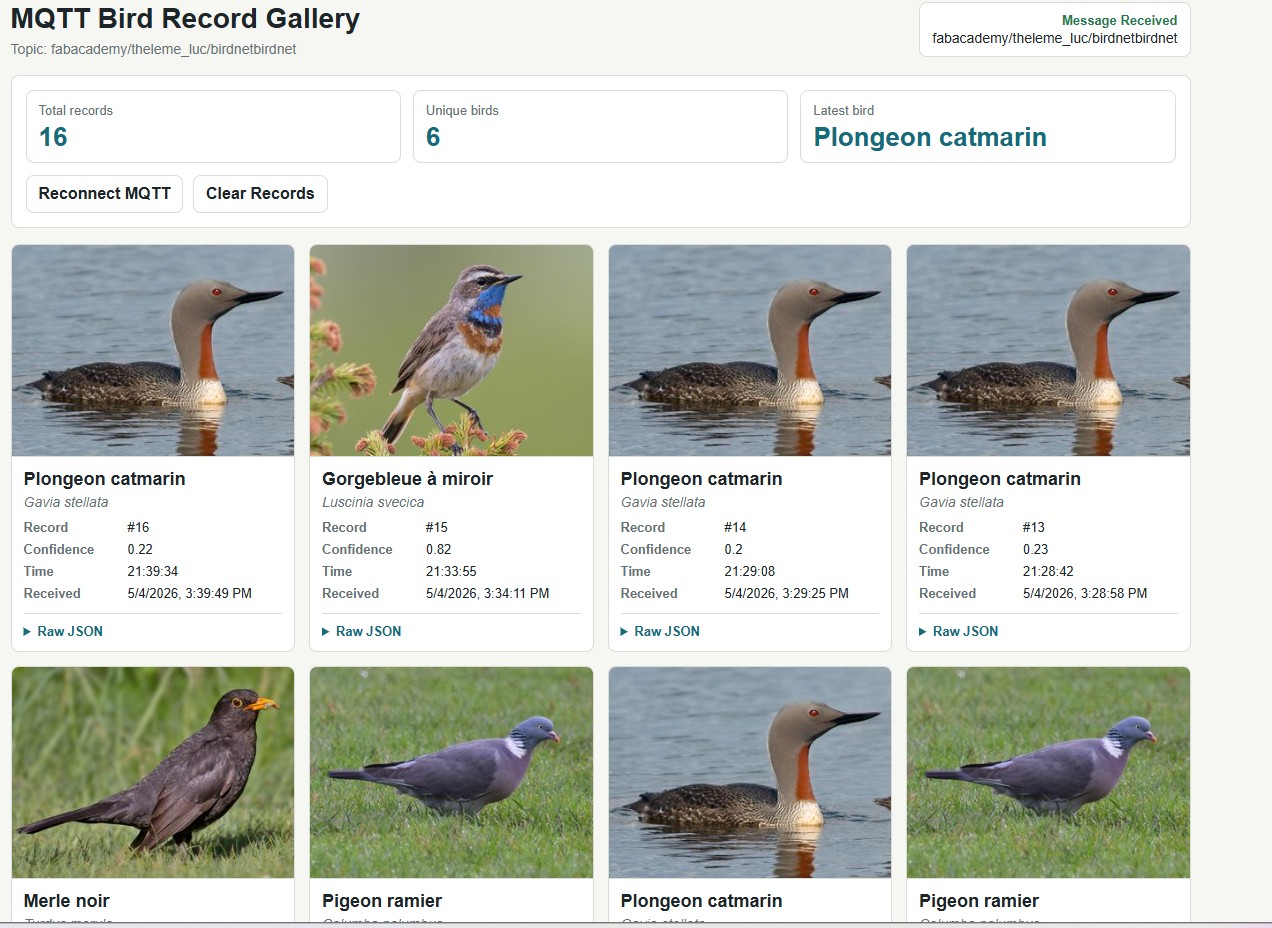

Bird Data Visualization

I was really intrigued by the idea that I could view the bird data that Luc was gathering. I wanted to experiment with how this data was presented and created four ways to visualize it. I wrote the following prompt, but the AI didn't understand the MQTT topic I was attempting to subscribe to.

I would like to revise these bouncing ball programs to visualize data posted to an MQTT server. Ask me questions to clarify my goals and the context of my request. My ultimate goal is to create a project that posts data to an MQTT server and then has other interfaces that read this data. The information regarding the MQTT server is: Based on https://mosquitto.org/

server: mqtt.fabcloud.org

port: 1883

username: xxxx

password: xxxx

channel/topic: fabacademy/

Websocket support: wss://mqtt.fabcloud.org:8083

The data published on the site is:

{"ID":0,"SourceNode":"BirdNET-Go","Date":"2026-05-03","Time":"13:20:10","Source":{"id":"rtsp_9d8ff51c","safeString":"rtsp://esp32mic.local:8554/audio","displayName":"rtsp://esp32mic.local:8554/audio"},"BeginTime":"2026-05-03T13:19:56.313780636+02:00","EndTime":"2026-05-03T13:20:11.313393214+02:00","SpeciesCode":"cowpig1","ScientificName":"Columba palumbus","CommonName":"Pigeon ramier","Confidence":0.95,"Latitude":49.5401673,"Longitude":2.0566106,"Threshold":0.8,"Sensitivity":1,"ClipName":"2026/05/columba_palumbus_95p_20260503T132012Z.wav","ProcessingTime":448771574,"occurrence":0.9952566623687744,"Results":null,"Review":null,"Comments":null,"Lock":null,"Verified":"","Locked":false,"BirdImage":{"URL":"https://static.avicommons.org/cowpig1-66037963-320.jpg","ScientificName":"Columba palumbus","LicenseName":"CC BY-NC 2.0","LicenseURL":"","AuthorName":"A Emmerson","AuthorURL":"","CachedAt":"0001-01-01T00:00:00Z","SourceProvider":"avicommons"}}

I opened MQTT Explorer and took a screenshot of it. I then uploaded this screenshot to give the AI a better idea of the topic and directory path.

After working through a number of variations, we came up with the following four pages to visualize the MQTT bird data that Luc was publishing.

HTML Canvas

SVG

Three.js

WebGL

Note- the HTML canvas method appears to be the most effective.

Experiment with User interfaces

I then worked with Codex on using other UI tools.

This is the prompt I used:

Now, choose three of the user interface protocols listed below to recreate what was created in the vcnl4010MQTTvisualization file. jQuery, dat.GUI, Bootstrap, Flat UI, Material Backbone, Require, Angular, Handlebars, Ember, Webpack Meteor, Babel, React, React Native, Cordova, Ionic, Electron, Blynk, Firebase

I uploaded the vcnl4010MQTTvisualization.html doc as a template, which provides the details on subscribing to the MQTT publisher.

I then added the following prompt:

Please describe the appropriateness of the following: jQuery, dat.GUI, Bootstrap, Flat UI, Material Backbone, Require, Angular, Handlebars, Ember, Webpack Meteor, Babel, React, React Native, Cordova, Ionic, Electron, Blynk, Firebase

The Codex response:

- Best Fits

- React - Very appropriate. Good for a live dashboard with changing state: connection status, latest values, graph data, raw payload, tables.

- Ionic - Appropriate if you want the page to feel like a mobile app. Still HTML-based, and could later become a mobile app.

- Bootstrap - Appropriate for quick clean layout: cards, grid, buttons, responsive behavior. Easier than React if you want plain HTML/JS.

- Material - Appropriate if you want a polished Google-style interface. Good cards, buttons, tabs, toggles, app bars.

- Firebase - Appropriate as a companion, not the main UI. Useful if you want to store sensor history, host the page, or share data without relying only on live MQTT.

- Useful But More Specialized

- jQuery - Works, especially for simple DOM updates. Less modern, but very understandable for teaching.

- dat.GUI - Useful for live controls, like changing graph scale, colors, thresholds, or MQTT topic. Not ideal as the whole interface.

- Babel - Useful if you want modern JavaScript or React JSX in browser. Not a UI framework by itself.

- Webpack - Useful for bundling a larger project. Overkill for one or two HTML demos.

- Handlebars - Useful for templating tables or repeated UI. Less useful for live graph-heavy interaction unless paired with other JS.

- Cordova - Appropriate if you want to package your HTML dashboard as a mobile app. Not needed for a regular webpage.

- Blynk - Appropriate for IoT dashboards, especially quick phone interfaces. Less appropriate if your goal is learning/custom-building web interfaces.

- Possible, But Probably Too Heavy

- Angular - Can absolutely do this, but it is a lot of framework for a small MQTT dashboard.

- Meteor - Interesting for realtime apps, but probably too much setup unless you want a full app with server/database/user accounts.

- Ember - Capable, but heavy and opinionated for this kind of small visualization.

- Backbone - Historically important, but dated. Could be educational, not my first choice.

- Require - Module loader, not a UI framework. Mostly useful in older JS architecture.

- Poor Fit For This Specific Goal

- React Native - Not HTML/web-page oriented. Good for native mobile apps, but not for embedding in your Fab Academy webpage.

- Electron - Good for desktop apps, but not appropriate if your target is webpage publishing.

- Flat UI - Mostly a visual style kit, not a strong app framework. Could influence look, but not structure.

- Recommended Set for Exploration

- Bootstrap - for plain responsive HTML

- React - for component/state-based UI

- Ionic - for mobile-app-style web UI

- jQuery - for beginner-friendly DOM manipulation

- Material - for polished design-system UI

- Firebase - if you want to add storage/history later

I told Codex to create the visualization in Ionic, HTML, Electron, and React.

They all pretty much look the same, but I wanted to see if there was any real difference in how they presented the data.

Link to four MQTT visualization files

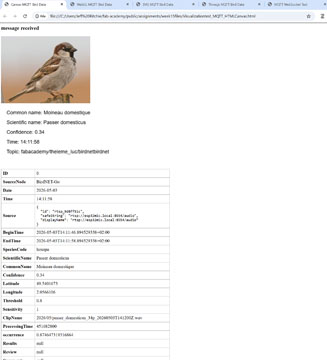

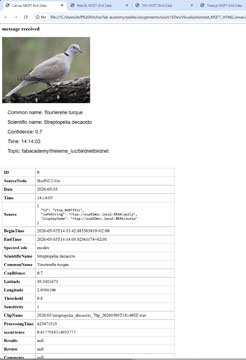

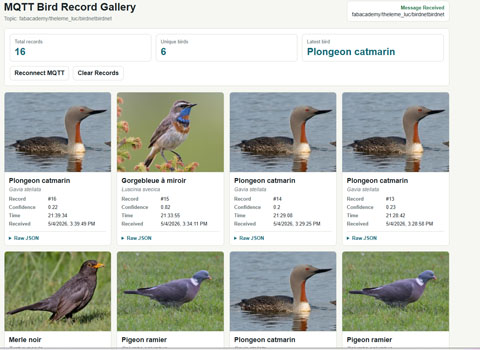

I wanted to add photos of the birds, as I don't know what they look like. I had to run through a number of revisions before we solved the problem, but the pages now show the data from Luc’s MQTT feed. Because the MQTT data feed for the sensor includes the URL for images of the birds identified, I used chatgpt to include the image of the bird from the URL.
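Pulling the image URL out of a message can be sketched as below. The field names (`CommonName`, `ScientificName`, `Confidence`, `BirdImage.URL`, and so on) come from the sample payload shown earlier on this page; `parseBird` is a hypothetical helper name, and this is an illustration rather than the code in the generated pages.

```javascript
// Sketch: extract the fields a page needs from one BirdNET-Go message.
// parseBird is a hypothetical helper; field names follow the sample payload.
function parseBird(payloadString) {
  let data;
  try {
    data = JSON.parse(payloadString);
  } catch (err) {
    return null; // not valid JSON, so ignore this message
  }
  if (!data || typeof data !== "object") {
    return null; // JSON, but not an object (e.g. a bare number)
  }
  const image = data.BirdImage || {};
  return {
    commonName: data.CommonName || "",
    scientificName: data.ScientificName || "",
    confidence: typeof data.Confidence === "number" ? data.Confidence : null,
    imageUrl: image.URL || "",
    imageAuthor: image.AuthorName || "",
    imageLicense: image.LicenseName || "",
  };
}

// In the browser, the result could feed an <img> element, for example:
//   img.src = bird.imageUrl;
//   img.alt = bird.commonName;
```

Keeping the author and license fields alongside the URL makes it easy to credit the photo next to the image.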

Visualizing other types of data

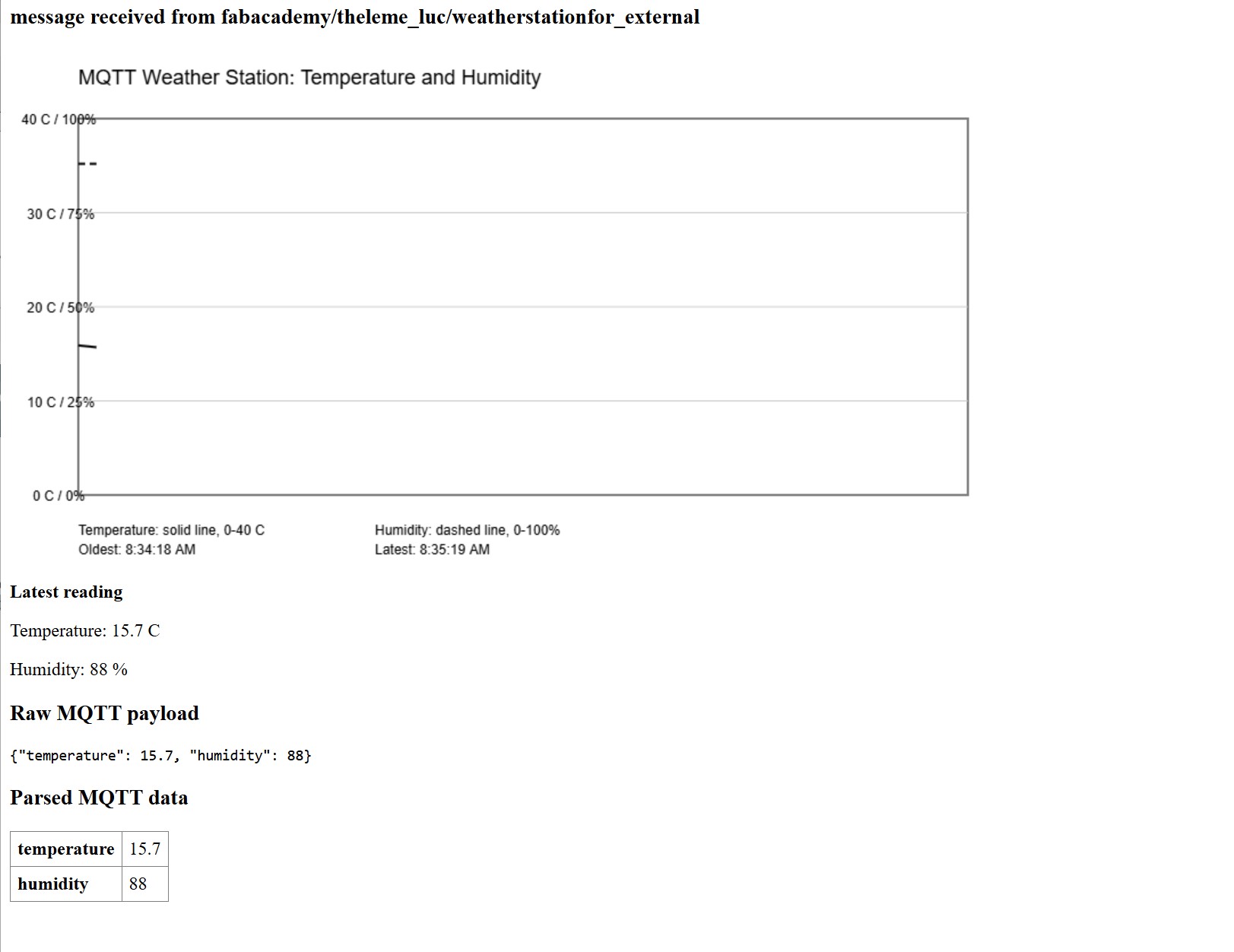

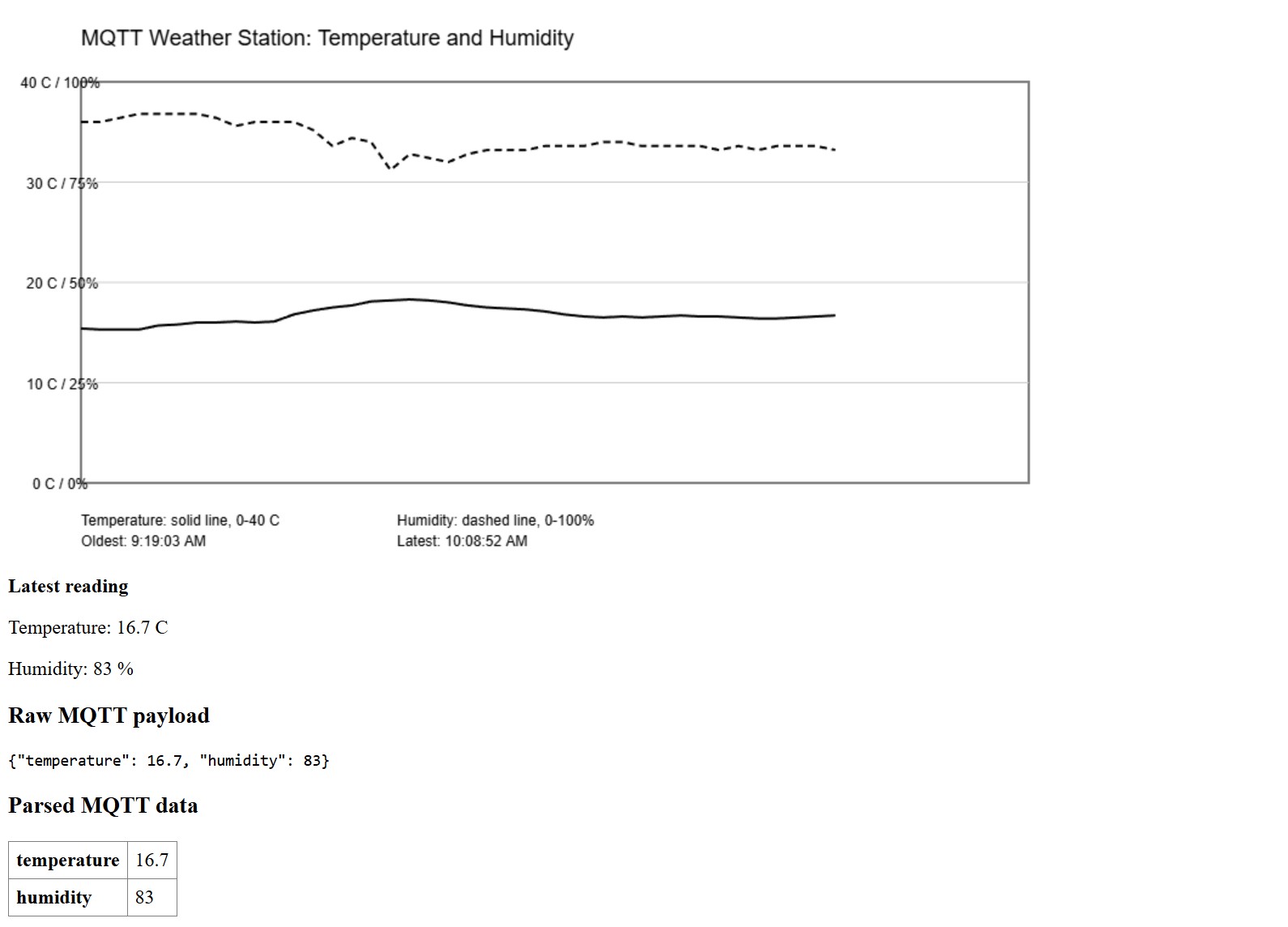

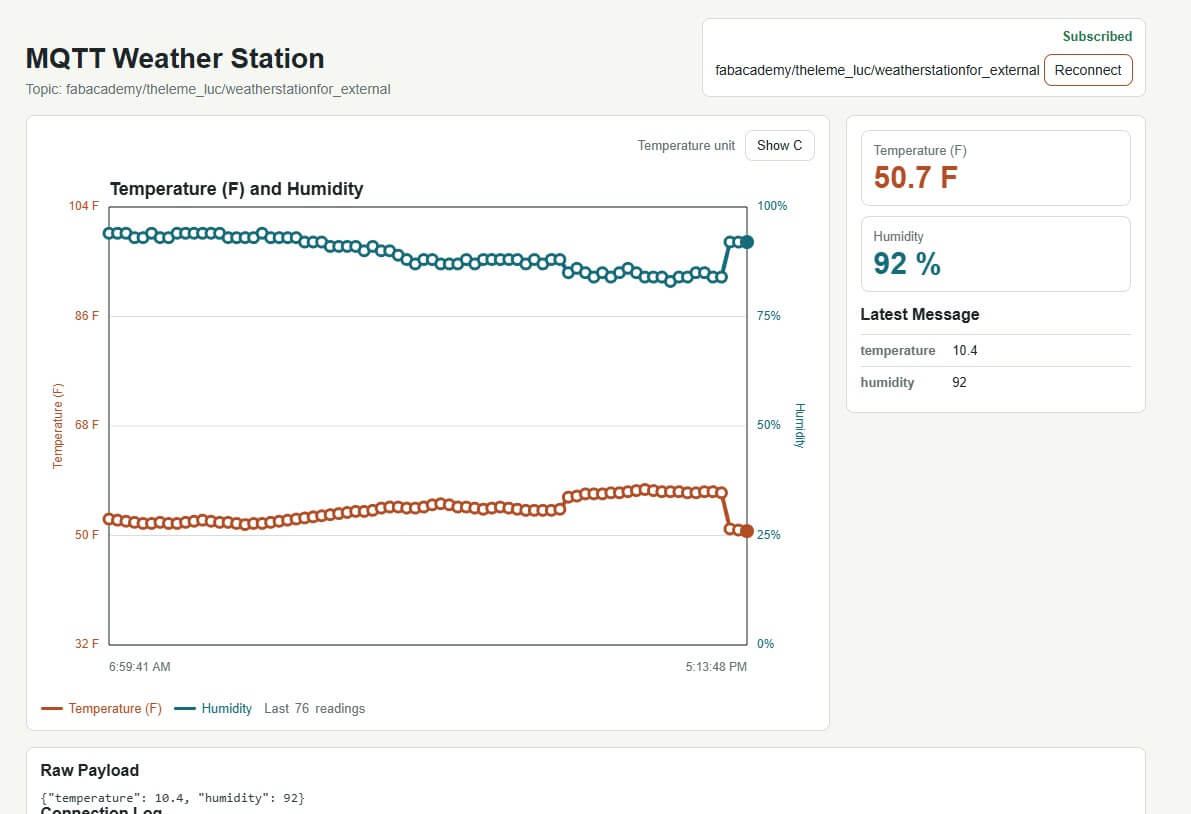

Because codex has a limit on usage, I switched back to chatgpt. I then used chatgpt to create a line graph of the temp and humidity data from Luc’s sensors.

The Prompt I used was:

Now just using the HTML5 canvas MQTT sketch, create a new sketch that makes a line graph of the temperature and humidity data published to MQTT fabacademy/theleme_luc/weatherstationfor_external

I worked with chatgpt to come up with the following visualization of data:

Link to temperature and humidity testing sketch

How the markup works

- Load page

- connect to MQTT broker

- subscribe to weather topic

- wait for JSON message

- extract temperature and humidity

- store values

- redraw canvas graph

- show latest values

- show raw payload and parsed table
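The middle steps of this list (wait for a JSON message, extract the values, store them) might look roughly like the sketch below. It assumes the payload carries fields named `temperature` and `humidity`; those names are an assumption and the real field names in Luc's feed may differ. `extractReading` and `onWeatherMessage` are likewise hypothetical names.

```javascript
// Sketch of the "wait for JSON -> extract -> store" steps.
// ASSUMPTION: the payload has fields named `temperature` and `humidity`.
function extractReading(payloadString) {
  let data;
  try {
    data = JSON.parse(payloadString);
  } catch (err) {
    return null; // ignore payloads that are not JSON
  }
  if (!data || typeof data !== "object") {
    return null;
  }
  // parseFloat accepts both numbers and numeric strings such as "17.2".
  const temperature = parseFloat(data.temperature);
  const humidity = parseFloat(data.humidity);
  if (!Number.isFinite(temperature) || !Number.isFinite(humidity)) {
    return null; // one of the values is missing or not numeric
  }
  return { temperature, humidity, receivedAt: Date.now() };
}

// Stored readings, oldest first.
const readings = [];

// On the page, this function would be assigned to client.onMessageArrived.
function onWeatherMessage(message) {
  const reading = extractReading(message.payloadString);
  if (reading === null) {
    return false; // skip malformed messages
  }
  readings.push(reading);
  // A real page would now redraw the graph and update the latest values.
  return true;
}
```

Returning `null` for anything unexpected keeps one bad message from breaking the graph.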

The page's markup again uses Paho.Client to create an MQTT client that accesses the MQTT server. It connects to the Fab Academy MQTT broker using WebSockets and subscribes to fabacademy/theleme_luc/weatherstationfor_external. (Note: as I mentioned before, browser JavaScript cannot use the normal MQTT port 1883; it needs the WebSocket port, here 8083.)

var client = new Paho.Client(

MQTT_HOST,

MQTT_PORT,

MQTT_PATH,

"canvas_weather_" + Math.random().toString(16).substring(2)

)

It then connects to the broker using

client.connect({

userName: MQTT_USER,

password: MQTT_PASSWORD,

useSSL: true,

timeout: 5,

onSuccess: function() { ... }

})

The graph uses JavaScript to store recent readings from the MQTT server in arrays. The script keeps only the 50 most recent readings; older values are removed.
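Keeping only the most recent 50 readings is a small rolling-buffer pattern. A minimal sketch, with `MAX_POINTS` and `pushLimited` as hypothetical names (the actual page may do this differently):

```javascript
// The page keeps the 50 most recent readings.
const MAX_POINTS = 50;

// Append a value and drop the oldest entries once the array exceeds the limit.
// Mutates and returns the same array.
function pushLimited(series, value, limit = MAX_POINTS) {
  series.push(value);
  while (series.length > limit) {
    series.shift(); // remove the oldest reading
  }
  return series;
}

// Example: separate arrays for each plotted series.
const temperatures = [];
const humidities = [];
pushLimited(temperatures, 17.2);
pushLimited(humidities, 61);
```

Because both arrays are trimmed the same way, index `i` in one still lines up with index `i` in the other when the graph is drawn.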

This is actually pretty cool: the page dynamically creates elements on the canvas and graphs the data it receives onto the HTML Canvas using the drawGraph() JavaScript function. The individual lines are drawn by:

function drawLine(data,minValue,maxValue,margin,graphWidth,graphHeight,dashed) { ... }

This is very cool.
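The heart of a function like drawLine() is mapping each data value to a pixel position. The sketch below shows one way that mapping could work, reusing the parameter names from the signature above; `linePoints` is a hypothetical helper and not the body of the actual drawLine().

```javascript
// Sketch: convert data values to canvas pixel coordinates.
// linePoints is a hypothetical helper; parameter names mirror drawLine().
function linePoints(data, minValue, maxValue, margin, graphWidth, graphHeight) {
  // Avoid dividing by zero when all values are the same.
  const range = maxValue - minValue || 1;
  // Spread the points evenly across the graph width.
  const stepX = data.length > 1 ? graphWidth / (data.length - 1) : 0;
  return data.map(function (value, i) {
    return {
      x: margin + i * stepX,
      // Canvas y grows downward, so the largest value maps to the top edge.
      y: margin + graphHeight - ((value - minValue) / range) * graphHeight,
    };
  });
}

// On a canvas, the points would then be joined, for example:
//   ctx.setLineDash(dashed ? [6, 4] : []);
//   ctx.beginPath();
//   points.forEach((p, i) => (i === 0 ? ctx.moveTo(p.x, p.y) : ctx.lineTo(p.x, p.y)));
//   ctx.stroke();
```

For example, `linePoints([0, 5, 10], 0, 10, 10, 200, 100)` places the three points at (10, 110), (110, 60), and (210, 10).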

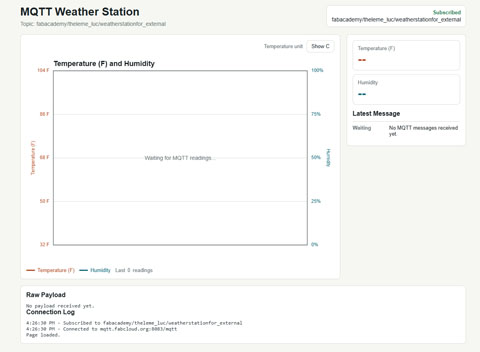

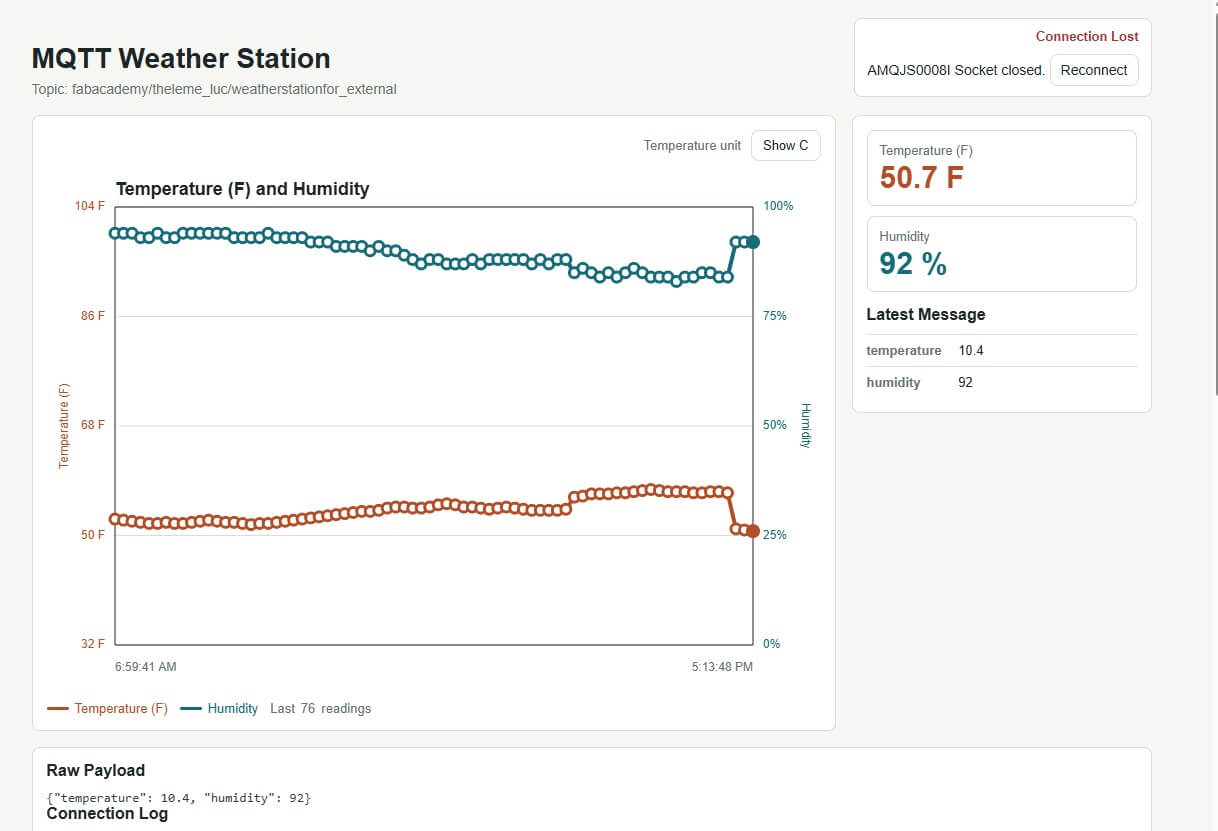

I wanted to experiment with the visualization of this data, so I built out two additional versions of this visualization. The first is what I am calling v2. I used Codex and gave it the following prompt:

I would like to take the data that is being published to MQTT in the program in this directory and create a visualization of the data, similar to the attached file.

I attached the tempandhumiditytest.html file as an example.

I worked through the file. Because I am rusty on what 17 degrees Celsius means, I used Codex to add a toggle switch to switch between F and C. As an aside, this is the first time that I’ve used a dedicated AI programming tool like Codex. I am just awestruck by how powerful these tools are, and by how much we can now do. I have, at best, a rudimentary understanding of programming. These tools allow me to complete in minutes what would have taken me hours, if not days.

The second version includes a toggle switch that switches between F and C.

Link to v2
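The conversion behind a C/F toggle is a single formula, F = C × 9/5 + 32. A minimal sketch, assuming the feed reports Celsius; `celsiusToFahrenheit` and `formatTemperature` are hypothetical names, not the functions in v2:

```javascript
// Convert a Celsius value to Fahrenheit.
function celsiusToFahrenheit(celsius) {
  return (celsius * 9) / 5 + 32;
}

// Format a Celsius reading for display in the selected unit ("C" or "F").
// Any unit other than "F" is treated as Celsius.
function formatTemperature(celsius, unit) {
  const useF = unit === "F";
  const value = useF ? celsiusToFahrenheit(celsius) : celsius;
  return value.toFixed(1) + " °" + (useF ? "F" : "C");
}
```

Storing the readings in Celsius and converting only at display time means the toggle never alters the stored data, so switching back and forth cannot accumulate rounding error.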

One thing that I did do, which I hope becomes standard, was to ask the tool to embed the original prompt in the comments of the program.

I then revised the program again to include a reconnect/connect button, so that I could re-establish the connection without losing the data that is being displayed.

The prompt I used was:

prompt- Create a copy of the tempandhumiditytest_v2 and call it tempandhumiditytest_v3. I't like to add a reconnect button to it as we had on other pages. When you press the reconnect button, I want it to not delete the previous data. I would also like markers on the line graph that show the data points when they are entered on the graph. The visualization of the data is difficult to see at this level.

Link to the web page

Publishing my own data

Okay- the next step would be to publish my own data.

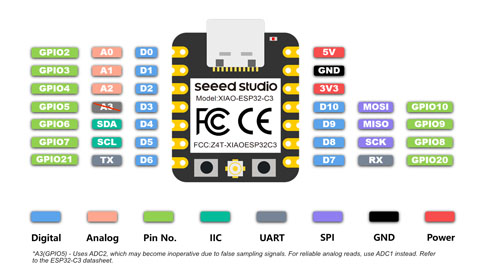

The original board I had built was based on the XIAO RP2040; however, that board doesn’t have wifi capability. As a result, I used a XIAO ESP32C3, which has 2.4 GHz wifi. The pinout of the XIAO ESP32C3 is:

My final project will use a TOF sensor, or a camera with TensorFlow, to identify user behaviors as an input that controls the output of the project. I will spiral from the proximity sensor I'm using this week, to a time-of-flight VL53L5CXVoGC/1 in future weeks, to hopefully a camera (XIAO ESP32S3 Sense) if there is time.

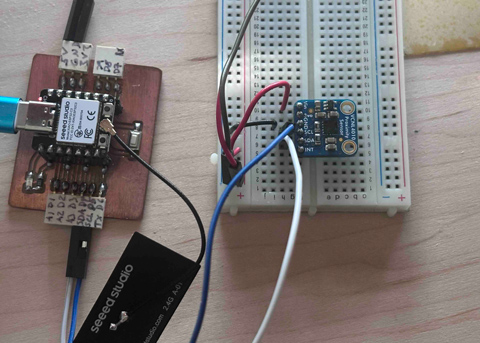

I have not yet built the TOF sensors I’d planned to use, but I have an Adafruit VCNL4010 distance sensor, which returns ambient light and proximity and is I2C capable. I’ll use this sensor to publish to the Fab Academy MQTT server.

I acknowledge that Neil doesn't like breadboards

Adafruit VCNL4010 distance sensor details can be found on its datasheet and the adafruit VCNL page.

I again reviewed the MQTT programs that Neil provided as examples:

The topic structure I will use for this will be: fabacademy/ritchie/esp32c3_01/vcnl4010

Topic structures need to incorporate a hierarchical design to allow for expanding what I publish to include other devices or sensors under a directory in my name. Doing this reduces the likelihood of “namespacing” problems, where data that my sensor is publishing will overwrite (or be overwritten or mix with) the data that others are publishing- or subscribing to.

Rules of thumb to avoid namespacing problems:

- Hierarchy: use a prefix/tenant/device/type structure.

- Unique identifiers: use universally unique identifiers for device IDs within the namespace to ensure uniqueness.
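One way to keep topics consistent with these rules is to build them from segments in code instead of typing the full path each time. The sketch below is an illustration; `buildTopic` is a hypothetical helper. It rejects empty segments and the characters `/`, `+`, and `#`, which MQTT reserves as the level separator and the wildcards.

```javascript
// Build a topic from prefix/tenant/device/type segments.
// buildTopic is a hypothetical helper, not part of any MQTT library.
function buildTopic(...segments) {
  for (const segment of segments) {
    if (typeof segment !== "string" || segment.length === 0) {
      throw new Error("empty topic segment");
    }
    // "/" separates levels; "+" and "#" are subscription wildcards.
    if (/[\/+#]/.test(segment)) {
      throw new Error("reserved character in segment: " + segment);
    }
  }
  return segments.join("/");
}

// The topics used on this page, built from their parts.
const sensorTopic = buildTopic("fabacademy", "ritchie", "esp32c3_01", "vcnl4010");
const ambientTopic = buildTopic("fabacademy", "ritchie", "esp32c3_01", "vcnl4010", "ambient");
const proximityTopic = buildTopic("fabacademy", "ritchie", "esp32c3_01", "vcnl4010", "proximity");
```

Adding a second board would then only mean changing the device segment, for instance to a hypothetical `esp32c3_02`.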

I then decided to experiment with different ways to visualize this data. My prompt in codex was:

"Choose three of the user interface protocols listed below to recreate what

was created in the vcnl4010MQTTvisualization file."

link to ionic file

link to React file

Link to HTML Canvas

Controlling a device through an online interface

Finally, I wanted to control my board using an online interface. The board I am using, as mentioned before, is one I built around a XIAO ESP32C3, which provides wifi connectivity.

I set up the board.

I used the following prompt on codex:

Now, I would like to create a new html page that runs a program that uses an interface that allows me to press a button on the webpage and the XIAO esp32c3 LED turns on or off.

Codex responded:

Created the new LED control page: xiao_esp32c3_led_mqtt_control.html

It connects to mqtt.fabcloud.org over WebSockets and publishes: on or off

to this topic: fabacademy/ritchie/esp32c3_01/led

The page has Turn LED On, Turn LED Off, Reconnect MQTT, a visual LED indicator, and a small message log.

One important note: the XIAO ESP32C3 also needs Arduino code that subscribes to fabacademy/ritchie/esp32c3_01/led and turns the LED on/off when it receives those payloads. The current sensor sketch only publishes data, so the webpage is ready, but the board needs the matching subscriber behavior.

My next prompts were:

please create the ino sketch that would control the led on the esp32c3

Revise this new program so that it continues to read and post data from the VCNL4010 sensor AND allows the user to turn on or off the led.

the led is connected to pin d0

make the button a toggle- on/off button rather than have two different buttons

Success!
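On the web page side, a single toggle button comes down to remembering the last state and publishing the opposite one. The sketch below assumes the `on`/`off` payloads and the LED topic that Codex reported earlier; `nextLedPayload` and `publishToggle` are hypothetical names, and the Paho library is passed in as `PahoLib` (the global `Paho` object in the browser).

```javascript
// Topic and payloads as reported in the Codex response on this page.
const LED_TOPIC = "fabacademy/ritchie/esp32c3_01/led";

// The page's own record of the LED state (assumed off at start).
let ledIsOn = false;

// Flip the stored state and return the payload to publish.
function nextLedPayload() {
  ledIsOn = !ledIsOn;
  return ledIsOn ? "on" : "off";
}

// Publish the next state. `client` is an already connected Paho.Client.
function publishToggle(PahoLib, client) {
  const message = new PahoLib.Message(nextLedPayload());
  message.destinationName = LED_TOPIC;
  client.send(message);
  return message;
}

// In the browser, this would be wired to the button, for example:
//   button.addEventListener("click", () => publishToggle(Paho, client));
```

One limitation of this sketch is that the page only assumes the LED followed the command; having the board publish its actual state back would let the page confirm it.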

Controlling a device through a web portal/interface results

link to files

arduino file

html file

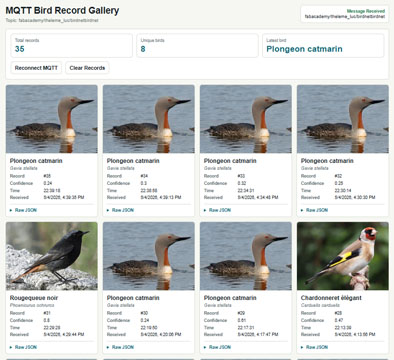

Bird visualization

Finally, I wanted to apply some of what I'd done on the VCNL sensor data to the bird data. I know, I really am intrigued by this idea of remote data. I used the following prompt on Codex (because I have some remaining time available on it):

based on this file, create a new HTML document that makes a record and shows the picture of every bird.

and included the file: Visualizationtest_MQTT_HTMLCanvas.html

This is what resulted:

Then I used the prompt:

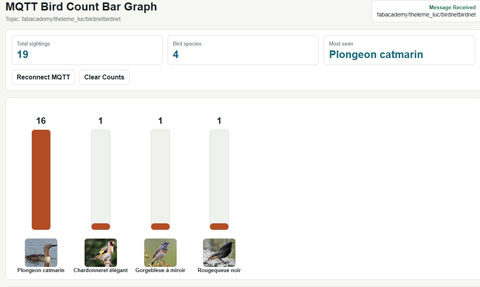

Can we create a new file that reads the data from the MQTT topic and then tabulates how many times each bird is seen? I would like to visualize this in a bar graph, with the image and name of the bird underneath the number of tims it appears.

This is what resulted:

Link to MQTT Bird Frequency Counter/Bar Graph
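The tallying behind a bar graph like this can be sketched as a small counter keyed by bird name. This is an illustration rather than the generated file: `createBirdCounter` is a hypothetical name, and the `CommonName` and `BirdImage.URL` fields are taken from the sample payload shown earlier.

```javascript
// Sketch: count how often each bird appears in the MQTT feed.
// createBirdCounter is a hypothetical helper.
function createBirdCounter() {
  const counts = new Map(); // CommonName -> { name, imageUrl, count }
  return {
    // Feed in one raw MQTT payload string.
    add(payloadString) {
      let data;
      try {
        data = JSON.parse(payloadString);
      } catch (err) {
        return; // ignore payloads that are not JSON
      }
      if (!data || !data.CommonName) {
        return; // ignore messages without a bird name
      }
      const entry = counts.get(data.CommonName) || {
        name: data.CommonName,
        imageUrl: (data.BirdImage && data.BirdImage.URL) || "",
        count: 0,
      };
      entry.count += 1;
      counts.set(data.CommonName, entry);
    },
    // One entry per bird, most frequent first, ready to draw as bars.
    bars() {
      return Array.from(counts.values()).sort((a, b) => b.count - a.count);
    },
  };
}
```

Each entry carries the name, image URL, and count, which is what a bar with the bird's picture and name underneath it needs.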

Overall, I had a blast this week.

This week's Files

- bouncingballhtmlcanvas.html

- bouncingballsvg.html

- bouncingballthreejs.html

- bouncingballwebgl.html

- tempandhumiditytest.html

- tempandhumiditytest_v2.html

- tempandhumiditytest_v3.html

- vcnl4010_ionic_visualization.html

- vcnl4010_mqtt_visualization.html

- vcnl4010_react_visualization.html

- vcnl4010_visualization_experiments.html

- vcnl4010_visualization_led_control.html

- vcnl4010_electron

- MQTT_test.html

- Visualizationtest_MQTT_HTMLCanvas.html

- Visualizationtest_MQTT_SVG.html

- Visualizationtest_MQTT_ThreeJS.html

- Visualizationtest_MQTT_WebGL.html

- bird_count_bar_graph_mqtt.html

- bird_record_mqtt_gallery.html

- xiao_esp32c3_led_mqtt_control.html